TL;DR

- AI governance tools inventory AI systems, enforce policies, and automate audit evidence for frameworks like ISO 42001 and the EU AI Act.

- Tool selection depends on governance ownership, regulatory scope, and whether you’re managing vendor AI adoption or building internal models.

- By 2026, AI governance will no longer be optional for many companies: the EU AI Act’s main obligations will begin to apply, and ISO/IEC 42001 certification will increasingly be used to demonstrate maturity to enterprise buyers.

AI risk isn’t hiding in edge cases anymore. It’s showing up in everyday employee workflows, vendor relationships, and compliance audits. A recent survey suggests 48% of employees have uploaded sensitive company data to public AI tools. IBM reports that organizations with high shadow AI exposure see breach costs that average roughly $670,000 higher, and Stanford’s AI Index shows that AI-related incidents jumped 56.4% in a single year.

The core challenge is scale: governance processes built for a handful of major projects each year can’t keep up with continuous AI adoption and agent-driven workflows. Without structured oversight, shadow AI, vendor sprawl, and stalled pilots become the default, leaving teams without a defensible trail of decisions, controls, and accountability.

That’s why boards and shareholders are pushing for explicit AI oversight, while regulators and standards bodies are raising expectations through the EU AI Act, ISO/IEC 42001, and the NIST AI RMF. In this guide, we’ll explain what AI governance tools do, what to look for, and how to choose the right one based on your scope, ownership model, and compliance needs.

What are AI governance tools?

AI governance tools are platforms that help enterprises establish regulatory and compliance oversight of AI systems across their lifecycle. They enforce policies, monitor behavior, and inventory AI systems to mitigate the risks of adopting, building, and deploying AI.

They’re increasingly used to support regulatory and governance programs tied to frameworks and standards such as the EU AI Act, ISO/IEC 42001, and the NIST AI RMF.

GenAI adoption and agentic AI are accelerating demand for governance platforms. When employees can spin up ChatGPT integrations in minutes or deploy autonomous agents that take actions across SaaS tools, governance can’t rely on annual audits.

There’s a real speed mismatch: AI use evolves in days (sometimes hours), while human review processes were designed around slower, fewer launches. You need continuous visibility into what’s running, where data flows, and whether systems stay inside guardrails.

What’s the difference between AI governance, AI risk management, and AI compliance?

AI governance establishes the policies, decision-making framework, and controls used for oversight. AI risk management is a subset of governance: it identifies risks and establishes mitigating controls for threats such as hallucinations, bias, and prompt exploits.

AI compliance, in turn, is about proving that you meet regulatory obligations and external assurance expectations, such as the EU AI Act, the NIST AI Risk Management Framework, and ISO/IEC 42001, by implementing the suggested controls and capturing a defensible evidence trail.

That audit trail requires regular monitoring, documentation of AI decision logic, and the production of reports for regulators and internal stakeholders. And when you rely on human review, it has to be meaningful. If reviewers don’t get the right diagnostic context (what the system did, why, and what it touched), the human simply becomes a rubber stamp.

How did we choose the Top 6 AI governance tools?

There are many tools and point solutions on the market. We have compiled six commonly evaluated options that collectively cover the most common governance use cases across compliance, risk, and operational oversight.

| Platform | Primary focus | Best fit | Biggest gaps / trade-offs |
| --- | --- | --- | --- |
| Sprinto | Unified GRC and AI compliance | SaaS/IT teams that want AI governance alongside SOC 2 / ISO / GDPR programs | Less specialized for deep model/LLM observability |
| Credo AI | Governance workflows and framework mapping | Teams building an audit-ready AI governance program (policies, controls, evidence) | Not a deep runtime observability/LLM monitoring layer; requires clear ownership and follow-through |
| Relyance AI | Data lineage and privacy monitoring | Orgs that need visibility into how data moves through AI systems and vendor integrations | Can be engineering-heavy to implement; may still need separate model performance monitoring |
| TrustCloud | Extends GRC into AI | Companies with established SOC 2 / ISO programs that want AI governance inside a broader compliance engine | Limited AI-specific technical monitoring and LLM governance depth |
| OneTrust AI Governance | Enterprise-scale inventory and risk workflows | Large enterprises, especially those already using OneTrust for privacy/consent operations | Complexity and rollout effort can be significant |
| Fiddler AI | Model and LLM observability | ML/AI teams running models/GenAI apps in production who need drift/safety monitoring | Not a full governance system for intake, portfolio inventory, and audit workflows |

1. Sprinto

Sprinto is an Autonomous Trust Platform that centralizes all trust obligations, including regulations, internal policies, contracts, and vendor requirements, and automates the work required to stay trustworthy across compliance, vendor risk, access, privacy, risk management, and AI governance.

For AI governance, Sprinto helps teams maintain visibility into how AI is used, which data it touches, and which vendors/subprocessors are involved, while keeping supporting controls and evidence up to date. It helps teams share their governance posture with customers and prospects, including in-scope AI systems, deployment context, guardrails, data-handling practices, and applicable frameworks, with visibility controls for what’s public vs. internal.

Key features:

- ISO 42001 compliance automation: Over 140 pre-mapped controls with built-in workflows tracking AI lifecycle from design through retirement

- AI Trust Center: Public-facing portal showcasing AI models, guardrails, compliance frameworks, certificates, and data privacy processes

- Shadow AI discovery: SSO-based discovery to identify unauthorized AI tool usage across the organization

- AI vendor risk management: Continuous monitoring and structured due diligence for AI tools and APIs with real-time breach tracking

Pros:

- Unified GRC platform managing traditional compliance like SOC 2, ISO 27001, and AI governance frameworks like ISO 42001 in one system

- AI Trust Center accelerates enterprise sales by proactively sharing governance posture

- Continuous monitoring through 300+ integrations with cloud, identity, and DevOps tools

Cons:

- Less specialized in AI-specific technical monitoring compared to platforms like Fiddler or Credo

Buyer considerations:

Sprinto is best suited for mid-market SaaS and tech companies scaling compliance programs from SOC 2 to multi-framework environments. If you’re already managing or pursuing ISO 27001 or SOC 2, adding ISO 42001 through Sprinto keeps everything under one roof. The AI Trust Center is particularly valuable for companies whose enterprise customers demand transparency in AI governance.

2. Credo AI

Enterprises need a way to translate AI governance policies into technical controls that can be monitored. Credo closes that gap, offering a systematic way for organizations to implement consistent oversight across a sprawling set of AI systems spread across the enterprise.

Key features:

- Policy-to-code architecture: Translates governance policies into technical controls and monitoring requirements

- AI registry: Centralized repository for AI systems, models, datasets, and vendors with metadata capture

- 700+ risk scenarios library: Pre-built AI-specific risk scenarios mapped to mitigation controls from NIST, MITRE, and academic research

- Vendor portal: Third-party AI assessment workflows with standardized questionnaires and risk scoring

Pros:

- Strong regulatory framework alignment (NIST AI RMF, ISO 42001, EU AI Act pre-mapped)

- Pulls evidence from MLOps tools (model monitoring, training data, bias metrics)

- Advisory services available to assess governance maturity and embed best practices

Cons:

- Policy-focused, not technical observability

- Implementation requires a mature governance culture, and effectiveness depends on organizational discipline

Buyer considerations:

Credo AI is particularly strong for regulated industries (financial services, healthcare, government) with European operations. If you’re pursuing ISO 42001 certification or need to demonstrate EU AI Act compliance to enterprise customers, Credo’s framework mapping saves manual work.

3. Relyance AI

Relyance AI sits at the intersection of AI governance and data governance. It focuses less on what models exist and more on what happens to data, where it comes from, how it moves, and how it’s used in AI systems.

This is often what auditors and privacy teams want most: data lineage that shows you didn’t train on restricted data, and that you understand cross-border transfers, subprocessors, and downstream exposure.

Key features:

- Data journey mapping: End-to-end tracking of data flows from source code through models with a visual representation

- Real-time compliance monitoring: Continuous detection of data drift, bias violations, and compliance gaps

- Code-to-cloud integration: Embeds governance gates into CI/CD pipelines and development workflows

- Third-party AI risk management: Monitors vendor AI integrations and cross-border data transfers

Pros:

- Strong for organizations with complex data pipelines and internal ML teams

- Integrates with developer workflows (Git, CI/CD, cloud platforms)

Cons:

- Can be more builder-focused than adopter-focused, and needs technical data engineering capabilities

Buyer considerations:

Relyance makes sense if you have both internal AI development teams and vendor AI to govern. Engineering-first organizations with cloud-native stacks might see the most value.

4. TrustCloud

TrustCloud positions itself as a bridge between traditional GRC and AI governance. Instead of operating as a standalone AI governance product, it adds AI governance capabilities to a broader compliance and control-monitoring system.

Key features:

- AI governance lifecycle: Assess first-party and third-party AI risks, manage policies, and share AI posture with enterprise customers

- Evidence collection: 100+ integrations with cloud platforms, DevOps tools, and security apps for automated evidence gathering

Pros:

- Works well for organizations with existing GRC infrastructure looking to add AI governance

- The evidence reuse approach reduces some documentation redundancy across frameworks

Cons:

- Limited AI-specific technical monitoring, more focused on governance documentation rather than real-time model observability

- Can be complex for organizations that don’t have mature GRC programs already in place

Buyer considerations:

The platform works best for companies with established compliance programs that need to extend into AI governance.

5. OneTrust AI Governance

OneTrust AI Governance automates the discovery of AI models, datasets, vendors, and agents while mapping relationships to ensure traceability. From there, it operationalizes risk management frameworks by mapping systems to evolving standards such as the EU AI Act, ISO 42001, and NIST AI RMF, through out-of-the-box assessments and role-based workflows designed to scale.

Key features:

- AI governance agents: Automate third-party risk assessments and privacy impact reviews from intake through findings in minutes vs. weeks

- Continuous AI governance synchronization: Native integration with Databricks Unity Catalog for real-time AI project visibility

- Multi-risk platform: Unified system for privacy, cybersecurity risk, third-party risk, AI risk, and data governance

- Regulatory compliance templates: Pre-built assessments for NIST AI RMF, ISO 42001, EU AI Act with in-app regulatory updates

Pros:

- You can manage risk, compliance, data governance, and AI adoption from one platform

- AI Agents deliver measurable time savings on vendor assessments and privacy reviews

- Integration ecosystem with hundreds of connectors across ServiceNow, Jira, cloud platforms, identity systems

Cons:

- High complexity with a steep learning curve

- Implementation is heavy and often requires external consulting support

Buyer considerations:

OneTrust makes sense for large enterprises with distributed teams managing privacy, risk, compliance, and AI governance across departments. If you already use OneTrust for privacy or consent management, adding AI governance leverages existing infrastructure.


6. Fiddler AI

Fiddler is primarily an AI observability and monitoring platform. It’s built for teams that need ongoing visibility into how deployed ML models and GenAI/agentic applications behave in production. You can use it to gain visibility into performance degradation, drift, unsafe outputs, and the other operational signals you need for incident response and audits.

Key features:

- Production monitoring and alerting: Track performance, drift, and runtime behavior so issues show up as incidents, not surprises.

- LLM/agent risk coverage: Monitor for classes of risks that matter in GenAI rollouts (e.g., toxic outputs, potential sensitive-data leakage, safety issues) and support investigation when something goes wrong.

- Audit evidence and traceability: Helps generate documentation/audit trails around model behavior and decisions for governance or review workflows.

Pros:

- Strong fit for engineering-led organizations that already have AI systems in production and need diagnostics and monitoring rather than policy paperwork.

Cons:

- It doesn’t replace portfolio-level AI inventory, policy workflows, or regulatory mapping on its own (it complements those).

- Can be harder to adopt for teams new to ML ops/monitoring (learning curve is a real consideration).

Buyer considerations:

Fiddler fits best in the monitoring/observability layer of an AI governance stack. You can use it to produce the runtime signals and evidence, and pair it with your compliance tooling for inventory, approvals, and framework mapping.

Ultimately, you need to pick a tool that increases the speed of safe shipping, not just the number of documents.

Core capabilities of AI governance tools

AI governance tools make an enterprise’s AI use more transparent and accountable. They do this by uncovering how the organization is using AI in the first place, evaluating which systems are high risk, ensuring policies are followed before any new AI is deployed, continuously scanning for bias and safety risks, and keeping records that demonstrate compliance to regulators and customers.

Let’s take a deeper look at these features:

- AI Inventory: You can only govern an area if you know what’s going on in it. So these tools help track down where AI is being used, including in models built in-house, in SaaS services that contain AI, and in shadow AI projects that aren’t officially sanctioned anywhere.

- Risk assessment and scoring: AI systems vary in impact and exposure. These tools assess each system and assign a risk tier, so teams know which use cases need stricter controls and continuous monitoring, and which can be handled with lighter oversight.

- Approval workflows and policy enforcement: Teams need clear approval paths that don’t block innovation. Automated workflows route AI use cases through appropriate review gates based on risk level, reducing deployment friction while maintaining governance.

- Evidence collection and audit preparation: Auditors want proof that controls are working. Continuous automated collection keeps evidence audit-ready year-round, rather than scrambling to gather documentation weeks before certification deadlines.

- Continuous monitoring for GenAI and LLMs: Production AI models can drift, hallucinate, and leak data. Real-time monitoring detects these problems early so teams can act before they harm users or breach compliance requirements.

- Third-party AI risk management: You’re responsible for managing the risks introduced by the AI that your suppliers use, even if you don’t have control over it. AI governance tools help monitor these subprocessors and trigger alerts and mitigation workflows if breaches occur in the chain.

- Framework mapping and evidence reuse: The same control often satisfies multiple framework requirements. Pre-built mappings remove the need to document the same evidence separately for NIST AI RMF, ISO 42001, and the EU AI Act.

- Reporting and executive dashboards: Auto-generated dashboards and documentation save compliance teams from manual report assembly every quarter, all while offering the board visibility into AI risks.

- Incident response and escalation: When an AI system produces biased outputs or leaks data, the right teams need to know immediately. Automated routing ensures incidents reach the appropriate stakeholders and escalate if left unresolved.

- Operational speed: A robust program can handle a growing volume of AI use cases without teams going rogue, because risk decisions are repeatable and the governance team can respond at the speed the business needs.
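To make the inventory, risk-tiering, and approval-routing capabilities above more concrete, here is a minimal Python sketch. The `AISystem` fields, the tiering rules, and the review gate names are all hypothetical and chosen for illustration; they are not taken from any of the tools discussed or from any framework.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class AISystem:
    """One entry in a hypothetical AI inventory."""
    name: str
    owner: str
    handles_personal_data: bool
    makes_automated_decisions: bool  # e.g. hiring or lending outcomes
    vendor_hosted: bool


def assign_risk_tier(system: AISystem) -> RiskTier:
    """Illustrative rule set; a real program would define its own criteria."""
    if system.makes_automated_decisions and system.handles_personal_data:
        return RiskTier.HIGH
    if (system.makes_automated_decisions
            or system.handles_personal_data
            or system.vendor_hosted):
        return RiskTier.MEDIUM
    return RiskTier.LOW


# Hypothetical review gates: stricter tiers pass through more reviewers.
REVIEW_GATES = {
    RiskTier.LOW: ["self_attestation"],
    RiskTier.MEDIUM: ["security_review", "privacy_review"],
    RiskTier.HIGH: ["security_review", "privacy_review", "legal_review"],
}


def route_for_approval(system: AISystem) -> list[str]:
    """Return the review gates a use case must clear before deployment."""
    return REVIEW_GATES[assign_risk_tier(system)]
```

The point of the sketch is repeatability: once the tiering rule is written down, two similar use cases get the same review path, which is what lets low-risk work move quickly while high-risk work gets more scrutiny.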


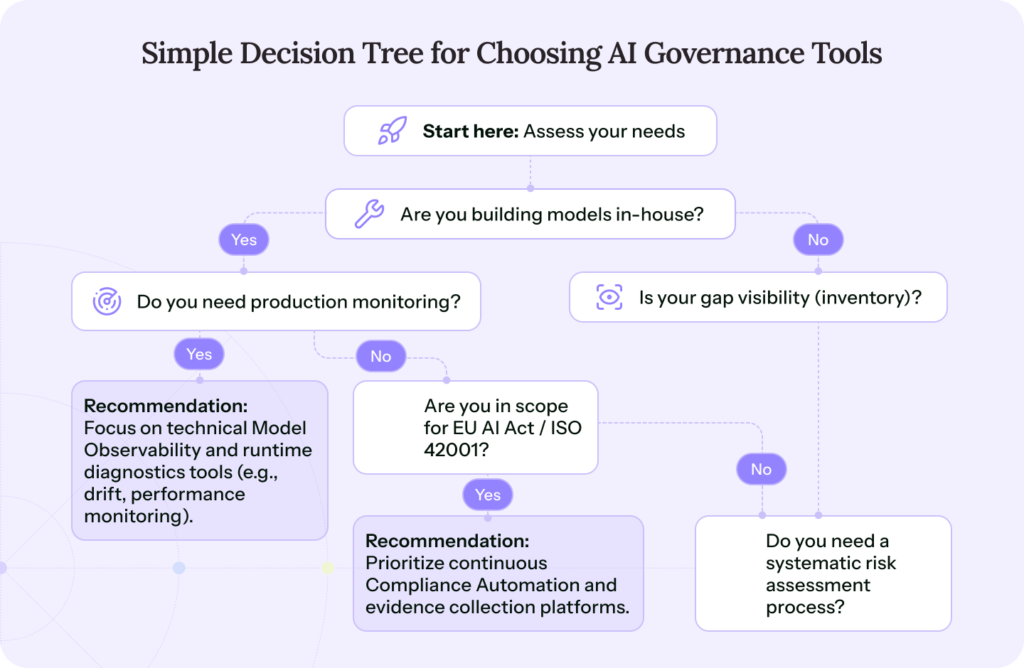

How to evaluate and choose AI governance tools

The right AI governance tool depends on what you’re trying to accomplish. Some organizations need comprehensive AI discovery because shadow AI is their primary risk. Others already know what AI they’re using but need structured approval workflows to prevent ungoverned deployments.

Still others are focused on proving compliance to enterprise customers and need a public-facing AI Trust Center. The tool that works for one doesn’t necessarily work for another.

1. Define your AI risk profile

Start by defining what AI risk means in your environment. If you’re primarily adopting vendor AI (e.g., Slack, Salesforce, ChatGPT Enterprise), you need robust third-party risk assessment and vendor monitoring.

If you’re building internal models, you need lineage tracking, bias monitoring, and model governance. If you’re managing both, you need a platform that handles organizational policy and technical observability without forcing you into separate systems.

2. Clarify governance ownership

Who owns AI governance in your organization matters as much as the tool itself. If security leads governance, you’ll prioritize risk scoring and incident response workflows. But if compliance leads, you’ll emphasize framework mapping and audit evidence automation.

Whatever the org chart looks like, the social dynamic matters. Teams doing responsible AI well avoid ‘gotcha’ governance. If you’re perceived as the ethics police, people stop bringing you projects early, which is how shadow AI grows.

3. Determine what’s in scope

To pick the right tool, you first need to know which parts of your environment and which AI use cases it has to cover.

Are you governing internal models only? Internal plus SaaS AI tools? Third-party vendor AI embedded in your supply chain? Shadow AI usage you haven’t discovered yet?

Your scope determines which discovery capabilities matter: SSO-based discovery catches approved tools, network monitoring catches shadow AI, and API integrations pull data from cloud platforms where teams spin up ML experiments.

4. Assess integration requirements

Integration requirements shape tool selection more than feature lists. For example, if your data science team uses Databricks or AWS SageMaker, native integration with those platforms automates evidence collection that would otherwise require manual exports.

Also, ask where governance fits into the delivery process. Practitioners consistently recommend involvement from concept through prototype and production. This way, you won’t be meeting governance requirements for the first time during launch week and trying to unpick decisions under a deadline.

5. Match evidence needs to regulatory scope

Evidence needs vary by regulatory scope and audit frequency. For example, annual SOC 2 audits might still work with periodic evidence collection, but the EU AI Act’s obligations for high-risk systems, such as ongoing risk management, logging, and post-market monitoring, are much easier to evidence with continuous collection.

Likewise, ISO 42001 certification demands control testing records. AI governance leans heavily on continuous monitoring, and point-in-time snapshots don’t cut it.
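As a rough illustration of evidence reuse combined with freshness checks, the sketch below maps each control to the frameworks it supports and ignores evidence older than a cutoff. The control names, the mapping, and the 30-day default are assumptions made for the example, not requirements drawn from any of these frameworks.

```python
from datetime import date, timedelta

# Hypothetical mapping: one control can back several frameworks at once.
CONTROL_MAP = {
    "ai-inventory-reviewed": ["ISO/IEC 42001", "EU AI Act", "NIST AI RMF"],
    "model-drift-monitored": ["EU AI Act", "NIST AI RMF"],
}


def frameworks_covered(
    evidence: dict[str, date],
    today: date,
    max_age_days: int = 30,  # assumed freshness window
) -> dict[str, list[str]]:
    """Return, per framework, the controls backed by fresh evidence."""
    covered: dict[str, list[str]] = {}
    for control, collected_on in evidence.items():
        if today - collected_on > timedelta(days=max_age_days):
            continue  # stale evidence does not count toward coverage
        for framework in CONTROL_MAP.get(control, []):
            covered.setdefault(framework, []).append(control)
    return covered
```

Calling `frameworks_covered` with a recently collected inventory review and a months-old drift report would credit only the inventory control, which is the gap a point-in-time snapshot tends to hide.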

6. Avoid common pitfalls

Organizations often buy AI governance tools that either exceed or fall short of their needs, so teams end up paying for features they can’t use or absorbing the cost of capabilities they lack.

That happens when you purchase tools before analyzing your governance model, setting up the right risk owners, and defining policies that shape the governance structure.

Teams select tools based on feature breadth when they need depth in one area, paying for capabilities they’ll never use while missing critical functionality in their actual risk domain.

Implementation also gets underestimated. Vendors may quote weeks, but the final implementation can take months, stalling projects and GRC initiatives.

The fix is to define the governance structure first, buy what you need today with a clear upgrade path, and budget implementation time conservatively with executive sponsorship.

Another avoidable failure mode: governance teams lose credibility if they don’t understand how AI is actually being used. Teams doing this well encourage governance owners to stay hands-on enough with the tooling and workflows to speak the same language as engineering and product.

Why are AI governance tools becoming essential in 2026?

AI adoption accelerated faster than governance infrastructure could keep pace. Organizations that spent years carefully evaluating traditional software are now deploying AI tools that integrate with employee workflows and touch sensitive information, or grappling with shadow AI that IT doesn’t know about.

These AI systems now play a part in hiring decisions, product development, and supply chain automation. Many are moving toward autonomous execution, where systems make decisions without human intervention.

The stakes are tangible. Governing development and adoption of AI is imperative to:

- Protect enterprise trust: Enterprise buyers now evaluate vendor AI usage as part of security questionnaires. Companies that can’t cleanly answer questions about data handling, model training, and compliance certifications lose deals or face extended sales cycles while legal teams negotiate.

- Meet regulations: The EU AI Act is phasing in requirements from 2025 to 2027, with early obligations starting in 2025 and most requirements applying from August 2026. Penalties are tiered, and the top band (for violations like prohibited practices) can reach €35 million or 7% of global annual turnover, whichever is higher. ISO/IEC 42001 adds a certifiable AI management system standard. At the same time, California’s ADMT rules, NIST’s AI RMF, and emerging state-level transparency laws point to the same destination: governance must be operational, verifiable, and continuous.

- Bolster security and minimize operational risk: Shadow AI creates blind spots you can’t manage manually, and vendor AI sprawl introduces attack vectors you haven’t evaluated. Governance tools discover what AI is running across your enterprise, monitor usage against policy, and catch threats before they turn into breaches or compliance violations.
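To put the top penalty band in perspective, the arithmetic can be sketched in a few lines of Python. This assumes the ceiling is the higher of the fixed amount and the turnover percentage, which is how the Act's top band applies to companies in general; the function name is hypothetical, and the sketch ignores the separate treatment of smaller companies and the lower bands.

```python
def top_band_ceiling_eur(global_annual_turnover_eur: int) -> int:
    """Upper limit of the top EU AI Act penalty band, in euros.

    Assumes the higher of EUR 35 million and 7% of global annual
    turnover applies. Integer arithmetic avoids float rounding.
    """
    fixed_cap = 35_000_000
    turnover_cap = global_annual_turnover_eur * 7 // 100
    return max(fixed_cap, turnover_cap)
```

For a company with €200 million in turnover, 7% is €14 million, so the €35 million figure is the ceiling. At €1 billion in turnover, 7% is €70 million, and the percentage becomes the binding limit.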

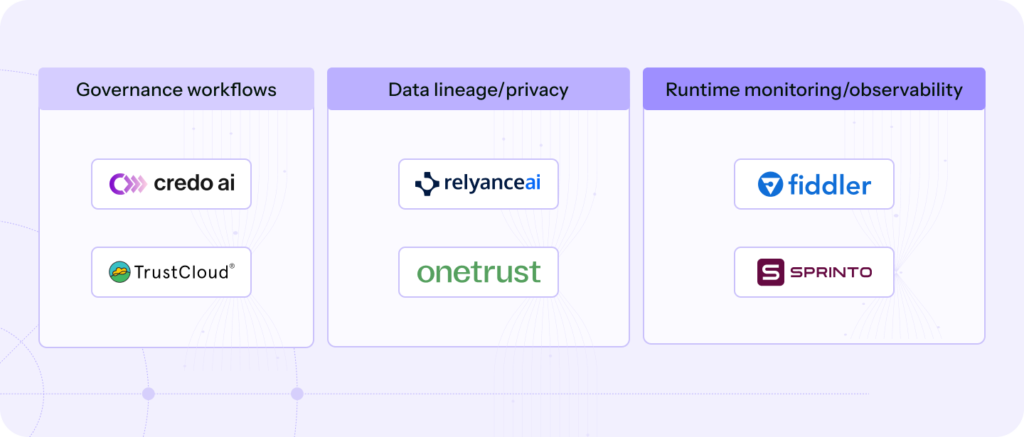

Types of AI governance tools

AI platforms like ChatGPT, Claude, and custom tools require governance at different stages of their lifecycle, spanning development and adoption. At the development stage, you need governance tools that can monitor data lineage and quality, bias in outputs, and risk vectors in the LLM.

One nuance practitioners keep emphasizing: the right unit of governance is often the application, not the model in isolation. When you instrument the app layer, you can give reviewers better context (explanations, guidelines, safety signals) than a model-only view.

Adoption raises different concerns, as the risks shift to how AI is actually used in the business. So you need tools to inventory deployed AI and monitor its usage.

Instead of a single monolithic platform, you typically build a tech stack for governance, focusing on distinct aspects and needs. Each category solves a different governance problem.

| Category | Primary function | Best for |
| --- | --- | --- |
| Bias detection and fairness | Measure disparate impact and discriminatory patterns | Regulated decisions like hiring, lending, and healthcare |
| Monitoring and observability | Detect drift, anomalies, and unsafe behavior in production | Continuous oversight for deployed models and GenAI |
| Compliance management | Map systems to requirements and produce audit-ready evidence | EU AI Act and internal policy programs |
| Explainability and interpretability | Make outputs and decisions understandable to reviewers | High-risk use cases needing transparency |
| Lifecycle governance | Manage model documentation, approvals, and retirement | Mature AI teams with repeatable release processes |
| Privacy management | Control sensitive data use and lawful processing | AI touching personal data and regulated datasets |

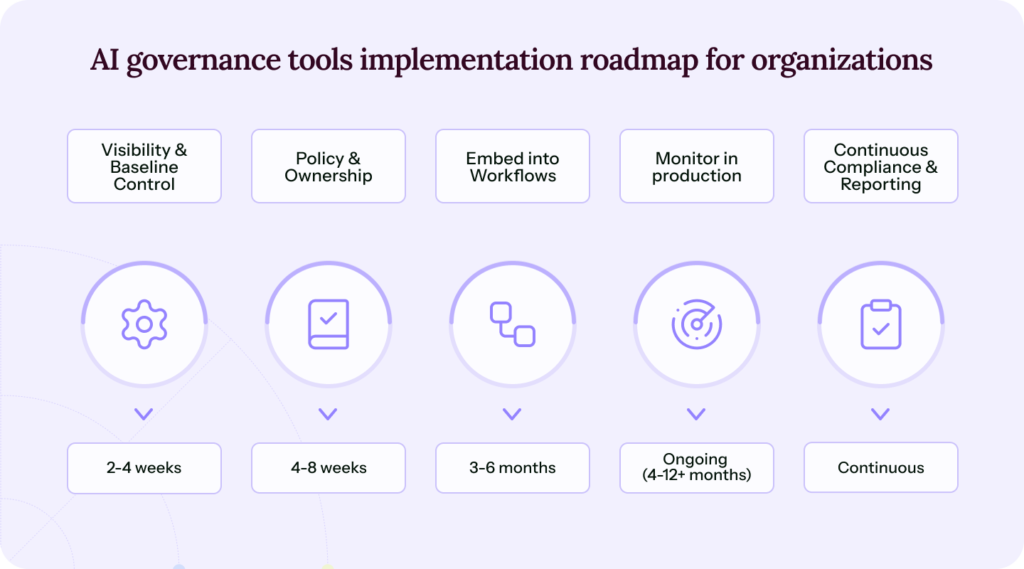

Implementation roadmap for organizations

When it comes to governance roadmaps, there is never a one-size-fits-all solution. Some organizations already carry momentum from past audits: they comply with frameworks like SOC 2 and ISO 27001 and have access controls and continuous monitoring in place across their environments.

Others are already governing AI adoption and now want dashboards to track progress and compliance posture against AI governance frameworks. In practice, maturity is sequenced: organizations move through clear phases as visibility, risk ownership, and audit pressure increase.

Phase 1. Establish visibility and baseline control

Your employees could be using multiple AI tools and systems in their workflows. AI governance starts with discovering and documenting them to establish a degree of control over which tools are used, what they access, and what they can be used for.

Tie shadow AI tools to risks and set policies to approve, block, and remediate usage.
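A minimal sketch of that first step might compare the tools discovered in your environment against the documented inventory and attach a policy decision to each gap. The tool names, the `POLICY` table, and the `review` fallback for unknown tools are all hypothetical; how the discovered list is produced (SSO logs, network monitoring, or something else) is outside the sketch.

```python
# Hypothetical policy table: tool name -> decision already made.
POLICY = {
    "chatgpt-enterprise": "approve",
    "unvetted-transcriber": "block",
}


def triage_discovered_tools(
    discovered: set[str], inventory: set[str]
) -> dict[str, str]:
    """Classify tools seen in use but missing from the AI inventory."""
    shadow = discovered - inventory  # in use, but never documented
    # Tools with no policy yet fall back to "review" (an assumed default).
    return {tool: POLICY.get(tool, "review") for tool in sorted(shadow)}
```

Anything already in the inventory drops out of the result, so the output is a short worklist of shadow tools with a next action for each.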

Phase 2. Define policy and risk ownership

Your policies dictate your controls. So translate AI governance frameworks such as the EU AI Act, ISO 42001, and others into policies that uphold their requirements. Specify which data AI development and adoption can access, which use cases require review, and who is accountable for outcomes.

Phase 3. Embed governance into delivery pipelines

Move controls closer to where work actually happens. Connect governance to model registries, CI/CD pipelines, and data workflows so reviews, approvals, and documentation happen before systems go live, not months later during an audit.
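One way to picture a governance gate in a delivery pipeline is a small check that runs before deployment and fails the build when required governance metadata is missing. The sketch below is an assumption-heavy illustration: the field names (`owner`, `risk_tier`, `approved_by`, `model_card_url`, `legal_signoff`) and the rule for high-risk systems are hypothetical, not a schema from any model registry or vendor.

```python
# Hypothetical metadata every release record must carry.
REQUIRED_FIELDS = ("owner", "risk_tier", "approved_by", "model_card_url")


def gate_violations(record: dict) -> list[str]:
    """Return the reasons a release should be blocked (empty means pass)."""
    problems = [
        f"missing field: {field}"
        for field in REQUIRED_FIELDS
        if not record.get(field)
    ]
    # Assumed extra rule: high-risk systems also need legal sign-off.
    if record.get("risk_tier") == "high" and not record.get("legal_signoff"):
        problems.append("high-risk system lacks legal sign-off")
    return problems
```

In a CI job, a wrapper script could load the release record, print whatever `gate_violations` returns, and exit non-zero if the list is not empty, so documentation and approvals are checked on every release rather than reconstructed at audit time.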

Phase 4. Monitor behavior and manage drift in production

Once AI is live, risk becomes dynamic. Models change, data shifts, and usage expands beyond original intent. Implementing continuous monitoring for drift, bias, explainability, and unsafe outputs allows teams to detect issues early and trigger remediation automatically.
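As one example of what a drift check can look like, the sketch below computes a population stability index (PSI) between a baseline sample and a production sample of a numeric feature or model score. PSI is a common drift metric chosen here for illustration; the article does not say which metrics any particular tool uses, and the bin count and the small floor for empty buckets are assumptions.

```python
import math


def population_stability_index(
    expected: list[float], actual: list[float], bins: int = 10
) -> float:
    """PSI between a baseline sample and a production sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def bucket_shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

Identical samples give a PSI of zero, and larger values mean the production distribution has moved further from the baseline. Teams often treat values above roughly 0.2 as worth investigating, but that threshold is a rule of thumb rather than a standard, and the alerting or remediation it triggers would live in the monitoring tool.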

Phase 5. Operationalize continuous compliance and audit readiness

AI adoption, usage, and development are all dynamic activities that change by the minute. Point-in-time reviews don’t work because the environment completely changes between reviews. You need governance and oversight over AI output, usage, sprawl, and development.

That needs continuous monitoring and real-time reviews.

Where Sprinto fits in an AI governance and compliance stack

Sprinto manages your entire GRC posture, spanning across SOC 2, ISO 27001, GDPR, and HIPAA, and extends those same capabilities, like continuous monitoring and automated evidence collection, into AI governance.

Instead of managing separate systems for GRC compliance and AI oversight, everything lives under one roof where controls, evidence, and trust already exist. Sprinto plugs into your entire tech stack and:

- Maintains a continuous AI inventory that surfaces shadow usage, mapped data flows, subprocessors, and guardrails tied to real compliance controls.

- Powers an AI Trust Center that communicates governance posture through certificates and AI-tagged attestations, active models and deployment context, data handling and privacy diagrams, and documented safety guardrails.

- Automates evidence collection, framework mapping, and audit preparation, replacing manual documentation with continuously updated proof.

- Supercharges compliance workflows like answering security questionnaires, detecting gaps, and remediating with native AI capabilities.

From your first audit to a full GRC function to AI oversight, Sprinto AI powers every step of your compliance journey.

FAQs

What do AI governance tools do?

AI governance tools help enterprises maintain oversight of the adoption, development, and usage of AI across their business. They enforce policies, monitor controls, and assess and manage the risks associated with AI adoption. For audits, these tools automatically capture evidence of control performance and provide a clear audit trail to demonstrate compliance with the EU AI Act, ISO 42001, and more.

Are AI governance tools required by law?

Not directly. But some regulatory frameworks, such as the EU AI Act, require continuous risk management, data governance, and documentation, which makes governance tooling hard to skip. California’s ADMT regulations require written risk assessments for automated decision-making. ISO 42001 certification demands documented AI management systems. While no law mandates specific tools, the compliance requirements those laws create make governance platforms practically necessary.

Which tools help with EU AI Act compliance?

Many tools on the market can help with EU AI Act compliance. For example, Sprinto helps you enforce your AI governance policies and monitor controls across the development and adoption lifecycle of AI in your enterprise. It comes with pre-built policy templates, automatic policy-to-control mapping, and automatic evidence collection to build a clean, defensible audit trail.

How are AI governance tools different from MLOps tools?

MLOps tools and AI governance tools are different technologies with overlapping outcomes. AI governance tools enforce policy and controls by monitoring AI usage and outcomes and by tracking data lineage across the development and adoption of AI. MLOps focuses on operationalizing ML systems across their lifecycle, including versioning, training, and performance monitoring. Governance tools tell you whether AI is compliant with your policies; MLOps tools tell you how it performs.

Sucheth

Sucheth is a Content Marketer at Sprinto. He focuses on simplifying topics around compliance, risk, and governance to help companies build stronger, more resilient security programs.
