AI governance has moved from a niche discussion to a boardroom priority almost overnight.

Across U.S. organizations, AI is now embedded in daily workflows, from software development and analytics to customer support and decision-making. CISOs no longer ask if AI introduces risk. They assess whether their organizations are equipped to manage it.

The current trend is clear: CISOs in U.S. organizations are aware of AI risks, but many are not fully prepared to mitigate them because their AI governance stacks have not kept pace with adoption.

The good news? CISOs know it, and they’re investing to fix this in 2026.

👉 Download the AI-risk Pulse Check Report to benchmark your AI governance maturity, compare your organization against peers, and start the internal conversations needed to strengthen your AI governance stack in 2026.

Here is what CISOs need to know in 2026:

1. AI risk has officially entered the incident log

AI risk is no longer theoretical. More than 30% of surveyed U.S. organizations experienced a major AI-related security incident in the past 12 months. These incidents were not edge cases or experimental failures. They were tied to everyday operational behavior.

Common AI risk scenarios in 2026 include unapproved employee use of AI, exposure of sensitive data through public AI tools, misuse of AI APIs, and integrity issues arising from compromised or unvalidated inputs. These patterns reveal a fundamental truth: AI risk does not necessarily emerge from malicious intent. It often stems from gaps in governance and control enforcement.

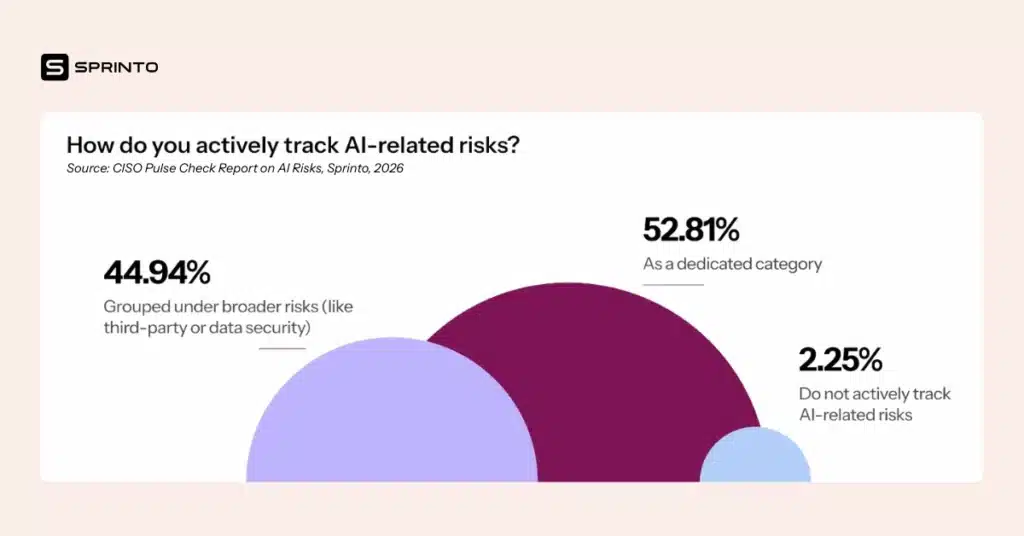

This is why many CISOs have begun treating AI risk as its own category.

Over 52% of organizations now track AI-related risks as a dedicated risk domain, rather than grouping them under traditional areas like third-party or data security.

This trend reflects growing AI risk maturity at the awareness level. But awareness alone does not stop incidents, nor does it mitigate risk.

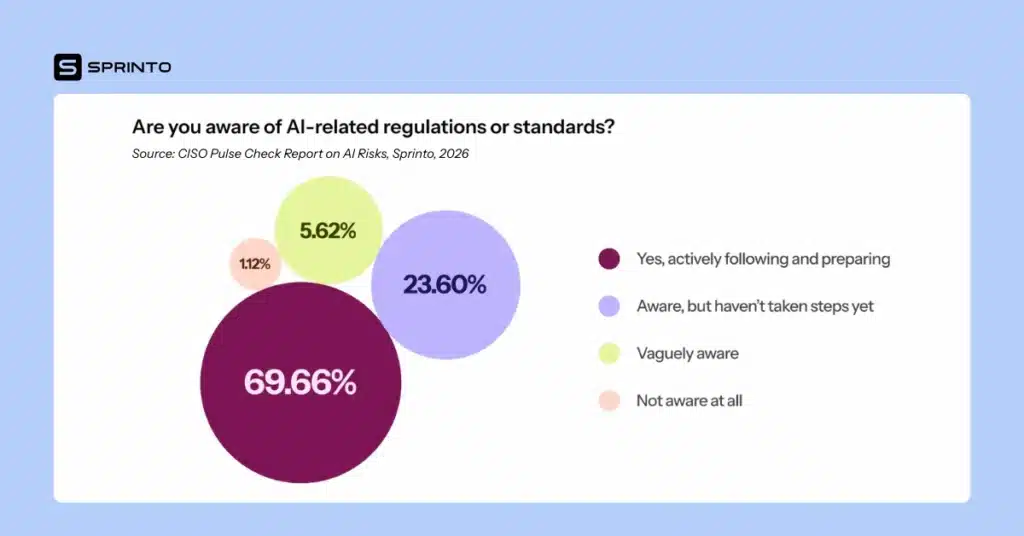

2. AI compliance awareness is high, but operational readiness is not

Regulatory pressure has accelerated this shift. Nearly 70% of CISOs report being actively aware of and preparing for AI-related regulations and standards. Frameworks governing AI accountability, transparency, and risk management are no longer optional. They are shaping security roadmaps.

AI compliance, however, is not just about understanding requirements. It’s about being able to demonstrate, continuously, that those requirements are met. And this is where many organizations struggle. Traditional compliance programs rely on static assessments, periodic reviews, and manual evidence collection. AI governance requires something different: continuous oversight, faster deployment of controls, and centralized visibility across tools, data, and decisions.

Without this, compliance efforts become brittle. Evidence lives in silos. Ownership is unclear. And every audit feels like starting over.

3. The AI Governance enforcement gap needs to be reduced

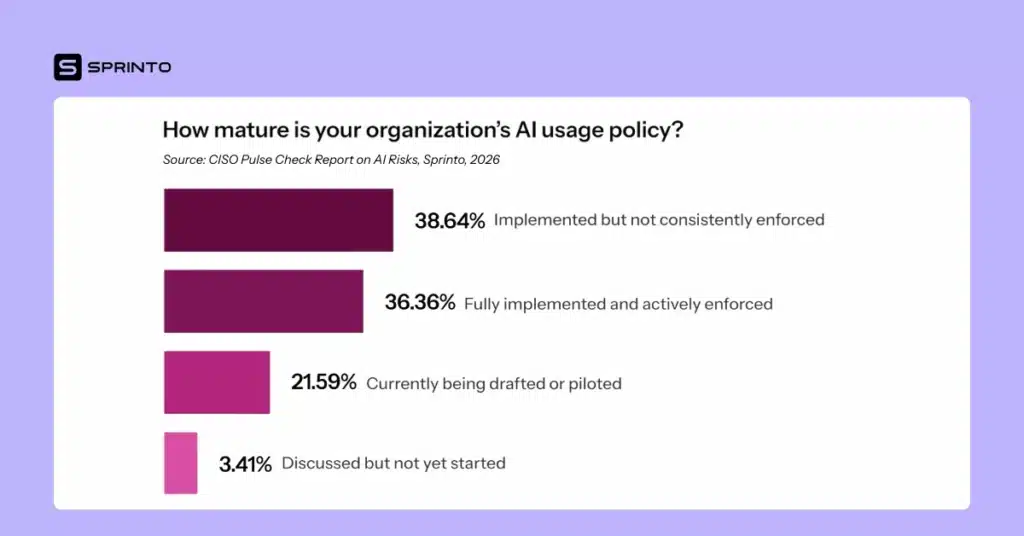

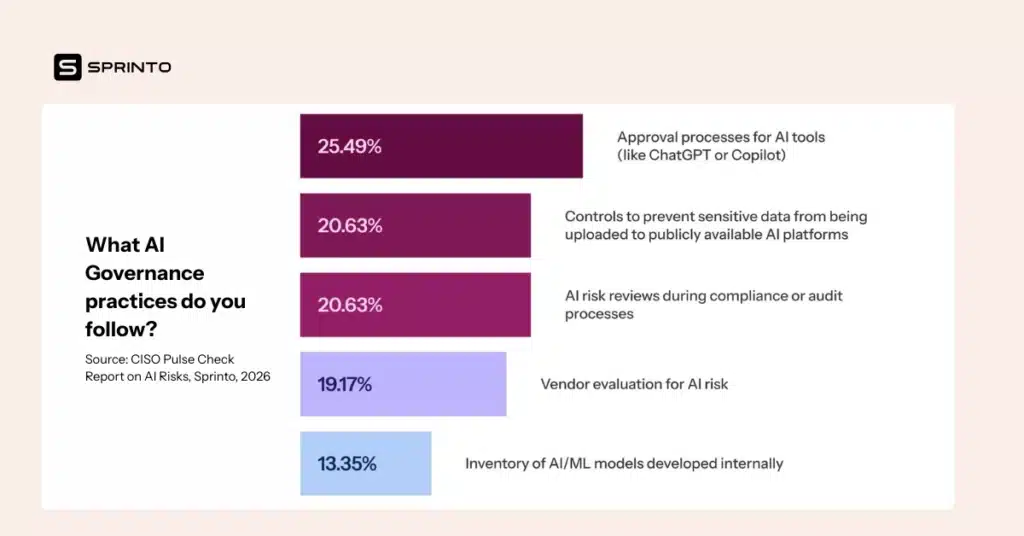

One of the report’s most concerning findings points to a core weakness in many AI governance stacks: policy enforcement.

Nearly 39% of organizations have an AI usage policy that is not consistently enforced. On paper, this may look like progress because policies exist and guidelines are documented. In practice, inconsistent enforcement creates the perfect conditions for shadow AI usage and unmanaged risk.

Employees adopt AI tools because they increase speed and productivity. When approved workflows are unclear or slow, teams work around them. Training alone cannot stop this. AI governance must be enforced by design, not dependent on perfect human behavior.
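
To make “enforced by design” concrete, here is a minimal sketch of an allowlist check that a proxy or browser extension could run before a request reaches an AI tool. The hostnames, the `APPROVED_AI_HOSTS` set, and the `is_request_allowed` function are all hypothetical names invented for illustration. They are not taken from the report or from any specific product.

```python
from urllib.parse import urlparse

# Hypothetical allowlist of approved AI tool hostnames (illustrative values only).
APPROVED_AI_HOSTS = {"ai.internal.example.com", "approved-llm.example.com"}


def is_request_allowed(url: str, approved_hosts: set[str] = APPROVED_AI_HOSTS) -> bool:
    """Return True only when the URL's hostname is on the approved list.

    URLs that cannot be parsed into a hostname (for example, a missing
    scheme) are denied, so the check fails closed rather than open.
    """
    host = urlparse(url).hostname  # urlparse lowercases the hostname
    return host is not None and host in approved_hosts
```

The design choice worth noting is the default: anything not explicitly approved is blocked, so the control does not depend on every employee remembering the policy.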

This gap becomes even more dangerous when sensitive data is involved.

4. Identifying the most preventable AI risk is key to success

Only 21% of organizations have controls in place to prevent sensitive information from being uploaded to publicly available AI platforms. Uploading sensitive data to public tools is one of the most preventable and potentially damaging AI risks organizations face.

Once sensitive data is shared externally, organizations lose control over retention, reuse, and downstream exposure. From an AI compliance standpoint, this introduces regulatory, contractual, and reputational risk that cannot be fully undone.

This is not a training problem. It’s an AI governance stack problem. Preventive controls, such as data loss prevention, approved AI environments, and access restrictions, are what separate intent from outcome.
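
As a rough illustration of a preventive control, the sketch below scans text for a few sensitive-looking patterns before it is sent to a public AI tool. The pattern set, `SENSITIVE_PATTERNS`, `find_sensitive`, and `allow_upload` are assumptions made for this example. A production data loss prevention control would rely on a vetted and far broader rule set than three regular expressions.

```python
import re

# Illustrative patterns only; real DLP rules are broader and carefully tuned.
SENSITIVE_PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email_address": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def find_sensitive(text: str) -> list[str]:
    """Return the names of every pattern that matches somewhere in the text."""
    return [name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)]


def allow_upload(text: str) -> bool:
    """Block the upload if any sensitive pattern is found."""
    return not find_sensitive(text)
```

Calling `allow_upload` on a prompt before it leaves the approved environment turns the policy into a gate instead of a guideline, and `find_sensitive` gives the user a reason for the block.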

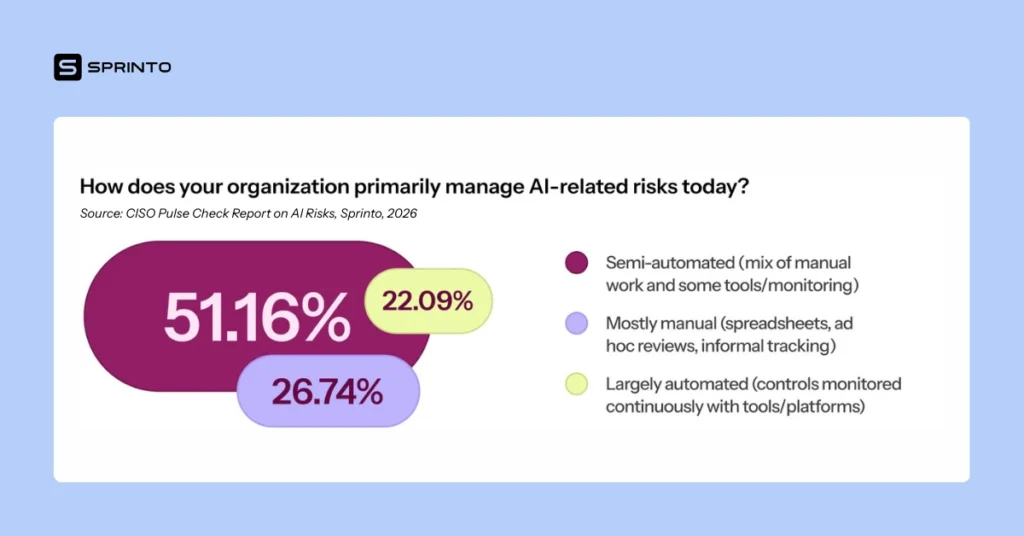

5. Manual AI Governance is not scalable for continuous compliance

AI adoption moves quickly. Governance processes, in many organizations, do not.

More than 26% of organizations still manage AI-related risks primarily through manual processes, including spreadsheets, ad hoc reviews, and informal tracking. Even among organizations that describe their approach as semi-automated, critical steps like risk assessment, evidence collection, and remediation remain heavily manual.

The impact is measurable. Two out of three organizations take longer than a week to implement controls or policy changes after identifying new AI risks. In an environment where AI tools and threats evolve daily, that delay creates exposure.
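
One way to track that delay internally is to record when each AI risk was identified and when its control went live, then measure the share that missed a target window. The sketch below assumes a seven-day target to mirror the one-week threshold above. `SLA_DAYS`, `days_to_control`, and `share_over_sla` are hypothetical names, and any dates fed to it in testing would be made-up sample values, not survey data.

```python
from datetime import date

SLA_DAYS = 7  # assumed target: control in place within one week of identification


def days_to_control(identified: date, implemented: date) -> int:
    """Whole days between identifying a risk and implementing its control."""
    return (implemented - identified).days


def share_over_sla(records: list[tuple[date, date]], sla_days: int = SLA_DAYS) -> float:
    """Fraction of (identified, implemented) pairs that took longer than the target."""
    if not records:
        return 0.0
    late = sum(1 for identified, implemented in records
               if days_to_control(identified, implemented) > sla_days)
    return late / len(records)
```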

This is where AI risk maturity begins to stall, not because leaders don’t understand the risks, but because their governance infrastructure cannot move fast enough.

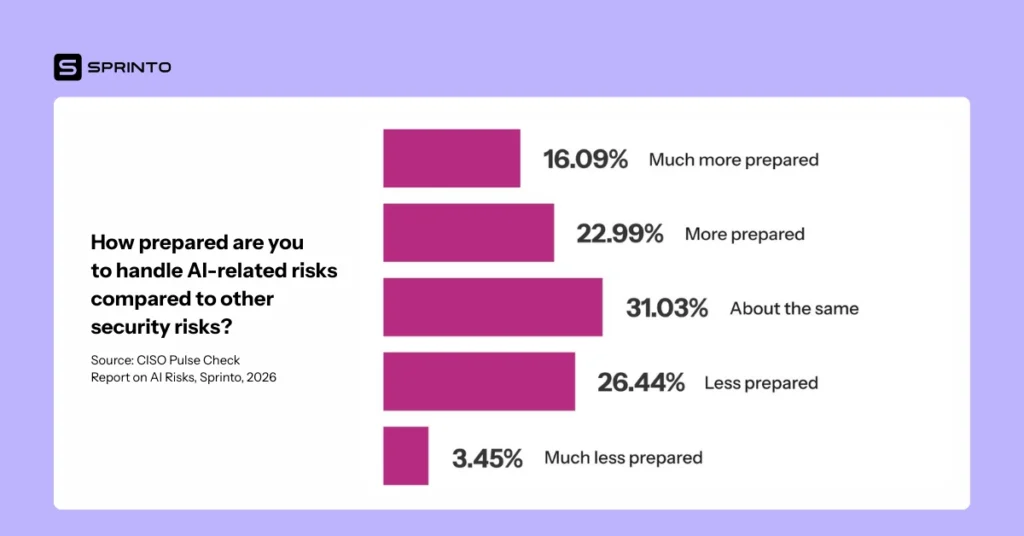

6. AI risk mitigation feels harder, but it doesn’t need to be

When compared to other security domains, AI stands out as uniquely challenging. Roughly 30% of CISOs say they feel less prepared to handle AI-related risks than other security risks.

That lack of confidence is not accidental. AI blurs boundaries between data security, third-party risk, software development, and decision governance. Most governance stacks are optimized for clearly defined categories. AI cuts across all of them.

Without a unified AI governance stack (a system of record that connects risk, controls, evidence, and accountability), organizations remain reactive when they need to be proactive.
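
To show what “connects risk, controls, evidence, and accountability” could look like as data, here is a minimal sketch of a system of record. The `Risk`, `Control`, and `Evidence` classes and the `find_gaps` helper are hypothetical and simplified. They illustrate the linkage, not any particular platform’s data model.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    description: str
    collected_on: str  # ISO date string, e.g. "2026-01-15"


@dataclass
class Control:
    name: str
    owner: str  # accountable person or team
    evidence: list[Evidence] = field(default_factory=list)


@dataclass
class Risk:
    title: str
    owner: str
    controls: list[Control] = field(default_factory=list)


def find_gaps(risks: list[Risk]) -> list[tuple[str, str]]:
    """Return (risk title, problem) pairs for unmapped risks and unevidenced controls."""
    findings = []
    for risk in risks:
        if not risk.controls:
            findings.append((risk.title, "no control mapped"))
        for control in risk.controls:
            if not control.evidence:
                findings.append((risk.title, f"control '{control.name}' has no evidence"))
    return findings
```

Because every risk points to its controls, and every control points to its owner and evidence, a question like “which risks have nothing behind them?” becomes a query instead of a spreadsheet hunt.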

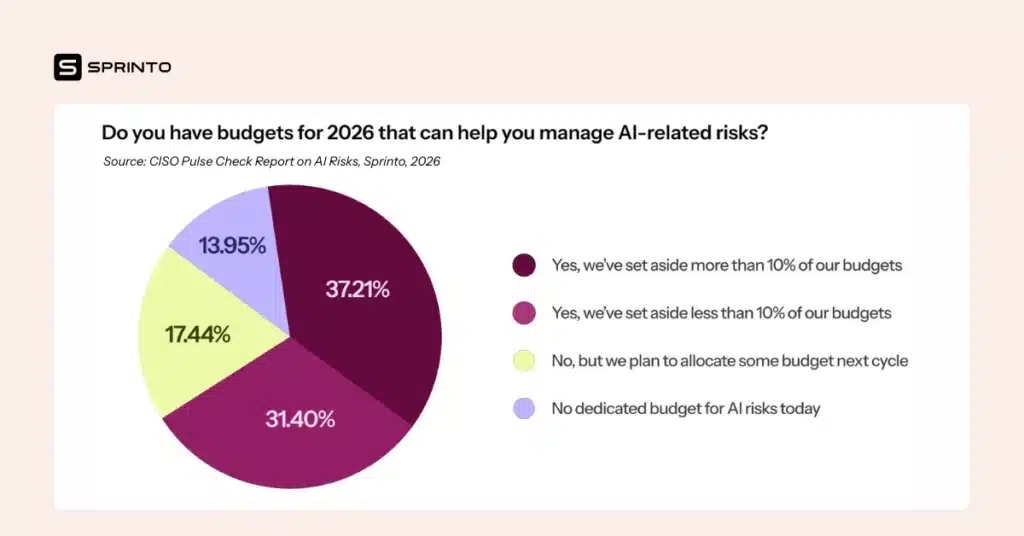

7. 2026 is the turning point for AI Governance investment

Despite these challenges, the data points to a clear inflection point. Nearly 69% of organizations have already allocated budget for AI risk mitigation in 2026, with additional organizations planning to do so in the next budget cycle.

Budget allocation signals commitment. And CISOs are being deliberate about where that investment goes: strengthening technical controls, formalizing AI risk assessments, improving employee enablement, and automating AI compliance activities.

Only about 25% of organizations currently describe their AI risk maturity as “advanced”. Most are still developing foundational capabilities. But unlike previous years, CISOs now recognize that incremental fixes won’t be enough.

8. Building an AI Governance stack is the real constraint

Across all findings, one theme repeats: the limiting factor is not awareness, intent, or even funding. It is the AI governance stack itself.

Disparate tools, fragmented workflows, and manual evidence collection slow everything down. Teams duplicate effort. Institutional knowledge gets lost. Controls are tested repeatedly, or not at all. Compliance becomes reactive rather than continuous.

Without a centralized system of record, AI governance turns into a scavenger hunt. With intelligent automation and integrated workflows, it becomes an operational advantage.

From AI Risk awareness to AI Risk readiness

CISOs have crossed the first threshold. AI risk awareness is now table stakes. The next phase, AI risk maturity, is all about execution.

Organizations that succeed in 2026 will not be the ones with the most AI use cases. They will be the ones with AI governance stacks designed for speed, scale, and proof, where controls are enforceable, evidence is automatic, and risk is visible in real time.

Everyone else will still be explaining why policies existed but enforcement didn’t.


Pulkit Jain

Pulkit drives growth through content at Sprinto. His work has been featured in top publications such as Forbes, The Wall Street Journal, World Economic Forum, e27, and more. His experience as an M-shaped B2B marketer is fueled by a passion for customer-centricity, an affinity for data, and a love of technology, movies, comics, and gaming.
