A year ago, your vendor risk assessment probably didn’t include a single question about AI. Today, that gap is one of the biggest blind spots in your third-party risk management program.

AI is no longer just a tool your employees use internally. It now lives inside your vendor ecosystem, embedded in the SaaS products you already approved and powered by third-party models you may not have evaluated. So the risk is not limited to how your team interacts with LLMs. The deeper concern is how your data flows through layered AI dependencies across every vendor relationship you manage.

If you run a GRC program, you have likely felt this already. The vendors you onboarded 18 months ago look different today. Many of them have quietly added AI capabilities or started routing customer data through inference pipelines that did not exist when you first assessed them. And unless you have specifically asked, most of them have not told you.

In this blog, I break down the specific AI risks emerging from third-party platforms and how you can prepare to mitigate them.

Every Vendor Is Now an AI Vendor

Across industries, SaaS vendors are embedding AI features into their core products at an unprecedented pace. Your CRM now has an AI assistant, your HR platform uses AI for resume screening, and your customer support tool likely runs on a large language model. The problem is that most of these AI additions happened after your initial vendor assessment.

You probably evaluated the vendor for data security and compliance certifications. What you did not evaluate is which AI models they use, whether your data contributes to model training, or what data retention policies apply after AI processing. As a result, there is a growing disconnect between the vendor you approved and the vendor you are actually working with today.

What AI now sits behind the vendors you have already approved is a question central to your TPRM program, whether your current questionnaire asks it or not.

The Six Core AI Risks in Your Vendor Ecosystem

1. Unauthorized Data Training

When a vendor integrates an AI model, your data may be used to train or fine-tune that model unless the vendor has explicit contractual restrictions in place. Many enterprise AI plans include opt-out provisions for model training, but the default setting often allows it. So if your vendor is on a standard plan or uses a third-party model provider without negotiated terms, your customer data could contribute to a model that serves your competitors.

You probably already negotiate data processing agreements with your vendors. The new requirement is to ensure that those agreements explicitly address AI model training and data retention post-inference. Without that language, you are relying on defaults that may not protect you.

2. Subprocessor Opacity and Model Provider Chains

Traditional vendor assessments evaluate the vendor you contract with directly. But AI introduces a new layer of supply chain risk. Your vendor may use an AI model from one provider while hosting inference on another provider’s infrastructure entirely. Each of these relationships introduces a subprocessor that your current due diligence process may not account for.

| AI governance should be treated as a supply chain risk issue, not just an internal policy matter. The real risk is not just employee usage, but how data flows through layered AI dependencies that sit several relationships removed from your own. |

Consider this scenario: a vendor you approved last year adds an LLM-powered feature that routes your data to a new model provider. Without reassessment, your risk posture silently changes. You are now exposed to a subprocessor you never evaluated, operating under terms you never reviewed. And because the vendor was not contractually required to disclose this change, it may go undetected for months.
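One way to catch this kind of drift is to compare the subprocessors you reviewed at assessment time with the list the vendor discloses today. The sketch below is a minimal illustration, assuming you keep both lists as simple sets. The function name and the provider names are hypothetical and do not refer to any real vendor or tool.

```python
def diff_subprocessors(assessed: set[str], current: set[str]) -> dict[str, set[str]]:
    """Compare subprocessors reviewed at assessment time with today's list."""
    return {
        "added": current - assessed,    # never evaluated, so worth a reassessment
        "removed": assessed - current,  # no longer in the data path
    }


# Hypothetical example data, for illustration only.
assessed_last_year = {"cloud-host-a", "email-provider-b"}
disclosed_today = {"cloud-host-a", "email-provider-b", "llm-provider-c"}

changes = diff_subprocessors(assessed_last_year, disclosed_today)
needs_reassessment = bool(changes["added"])
print(changes["added"], needs_reassessment)
```

Any entry under `added` is a subprocessor you have not evaluated, which is a reasonable trigger for a targeted reassessment rather than waiting for the next review cycle.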

3. Shadow AI Adoption Outpacing Security Review

Your engineering and product teams are adopting AI tools faster than your security team can assess them. Across fast-growing companies, procurement cycles for AI tools have compressed from weeks to days, which means that by the time a new AI vendor reaches your TPRM queue, it may already be processing production data.

Traditional TPRM assumes that security review happens before vendor adoption. In most organizations today, it happens after. Therefore, your program needs to account for that inversion, not just in policy, but in how you detect and respond to unapproved AI tool usage.
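Detection can start small. The sketch below assumes you can export web or SSO log records as simple dictionaries with a `domain` field, and that you maintain two lists: AI services you know about and AI services you have approved. The domains, field names, and function name are all hypothetical placeholders.

```python
from collections import Counter

# Hypothetical domains; replace with the AI services relevant to your environment.
KNOWN_AI_DOMAINS = {
    "ai-notes.example.com",
    "llm-chat.example.com",
    "code-assist.example.com",
}
APPROVED_AI_DOMAINS = {"code-assist.example.com"}


def find_unapproved_ai_usage(log_entries: list[dict]) -> Counter:
    """Count requests per unapproved AI domain from simplified log records.

    Each record is assumed to look like {"user": "...", "domain": "..."}.
    """
    unapproved = KNOWN_AI_DOMAINS - APPROVED_AI_DOMAINS
    return Counter(e["domain"] for e in log_entries if e["domain"] in unapproved)


sample_logs = [
    {"user": "dev1", "domain": "llm-chat.example.com"},
    {"user": "dev2", "domain": "llm-chat.example.com"},
    {"user": "pm1", "domain": "code-assist.example.com"},
    {"user": "pm1", "domain": "intranet.example.com"},
]
usage = find_unapproved_ai_usage(sample_logs)
print(usage.most_common())
```

The output is a ranked list of unapproved AI services by request volume, which gives the security team a place to start the conversation with the teams already using them.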

4. Stale Assessments and Silent Risk Changes

Vendor risk profiles are not static, yet most TPRM programs treat them that way. When you assessed a vendor 12 months ago, they may not have had any AI capabilities. Today, that same vendor might be running multiple AI models and processing your data in a geography you did not originally approve.

Vendors do not always surface material AI changes in a timely or structured way, and customers do not always track them consistently. Unless your contract requires disclosure and your reassessment cadence is frequent enough to catch them, these changes go undetected. As a result, your risk register still shows the vendor as “low risk” based on an assessment that no longer reflects reality.

| A vendor that was low-risk in January may be materially different by July. If your TPRM program relies on annual assessments, you are making decisions based on information that is 12 to 18 months stale. |
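A reassessment trigger can combine both signals: the age of the last assessment and whether the vendor's AI posture has changed since. The following is a minimal sketch under stated assumptions. The function name is hypothetical, and the 180-day default is an illustrative threshold, not a recommended standard.

```python
from datetime import date


def needs_reassessment(last_assessed: date, today: date,
                       ai_changed_since: bool, max_age_days: int = 180) -> bool:
    """Flag a vendor when its assessment is too old or its AI posture changed.

    max_age_days=180 is an illustrative threshold, not a recommended standard.
    """
    age_days = (today - last_assessed).days
    return ai_changed_since or age_days > max_age_days


# A recent assessment is still flagged if the vendor has added AI since.
print(needs_reassessment(date(2025, 1, 10), date(2025, 3, 1), ai_changed_since=True))
```

Treating an AI change as an immediate trigger, independent of age, reflects the point above: the calendar alone does not tell you when a vendor's risk profile has shifted.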

5. Standard Questionnaires Are Not Built for AI

If you are still using the same vendor questionnaire template from two years ago, it almost certainly does not cover the questions that matter most for AI risk. You need to know which models the vendor uses, whether customer data is used for training, where inference happens, and what the post-processing retention policy is. But these questions are simply absent from most inherited templates.

And even when you do ask, the answers require a level of technical interpretation that a generic risk score cannot capture. This means your questionnaire needs to evolve alongside the technology your vendors are adopting.
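As an illustration, the four questions named above can be kept as structured data so that gaps in a vendor's response are easy to spot. This is a sketch only: the field names and the `unanswered_questions` helper are hypothetical, and a check like this does not replace the technical review the answers themselves need.

```python
# The four AI-specific questions named above, keyed by hypothetical field names.
AI_QUESTIONS = {
    "models_used": "Which AI models does the product use, and who provides them?",
    "trains_on_customer_data": "Is customer data used to train or fine-tune models?",
    "inference_location": "Where does inference processing take place?",
    "post_processing_retention": "How long is data retained after AI processing?",
}


def unanswered_questions(responses: dict[str, str]) -> list[str]:
    """Return the keys of AI questions the vendor left blank or skipped."""
    return [key for key in AI_QUESTIONS if not responses.get(key, "").strip()]


# Hypothetical vendor response with two questions skipped and one left blank.
vendor_response = {"models_used": "Provider X hosted model", "inference_location": " "}
gaps = unanswered_questions(vendor_response)
print(gaps)
```

A non-empty result means the assessment is incomplete on exactly the points an inherited template tends to miss.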

6. Regulatory and Insurance Pressure

AI governance is no longer optional from a regulatory standpoint. Regulations and standards such as the EU AI Act and ISO/IEC 42001 are creating new obligations and expectations for organizations that use AI, including embedded AI on third-party platforms. So if your vendor uses a high-risk AI system as defined by the EU AI Act, the compliance obligation may extend to you as the deployer.

Insurance carriers are also paying attention. In recent conversations, teams have reported that insurers are not just asking whether AI is being used, but how it is governed. This scrutiny is arriving before formal programs or standards are fully in place, which means your ability to demonstrate that you assess and monitor AI risks across your vendor ecosystem is already becoming a factor in your coverage and premiums.

What the Data Tells Us

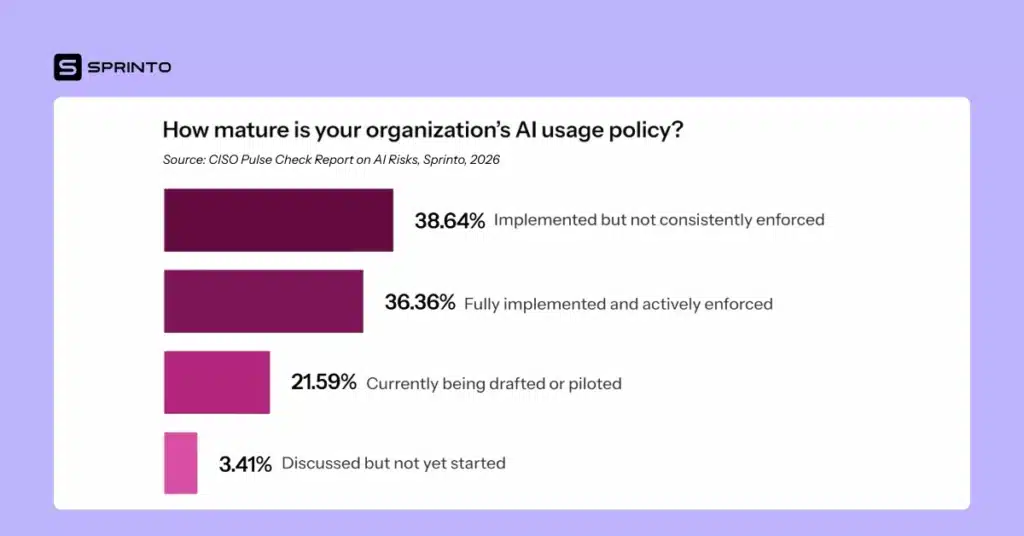

Sprinto’s 2026 CISO Pulse Check Report, based on a survey of 103 US-based CISOs across SaaS, Fintech, Healthcare, and Manufacturing, paints a clear picture of where the market stands.

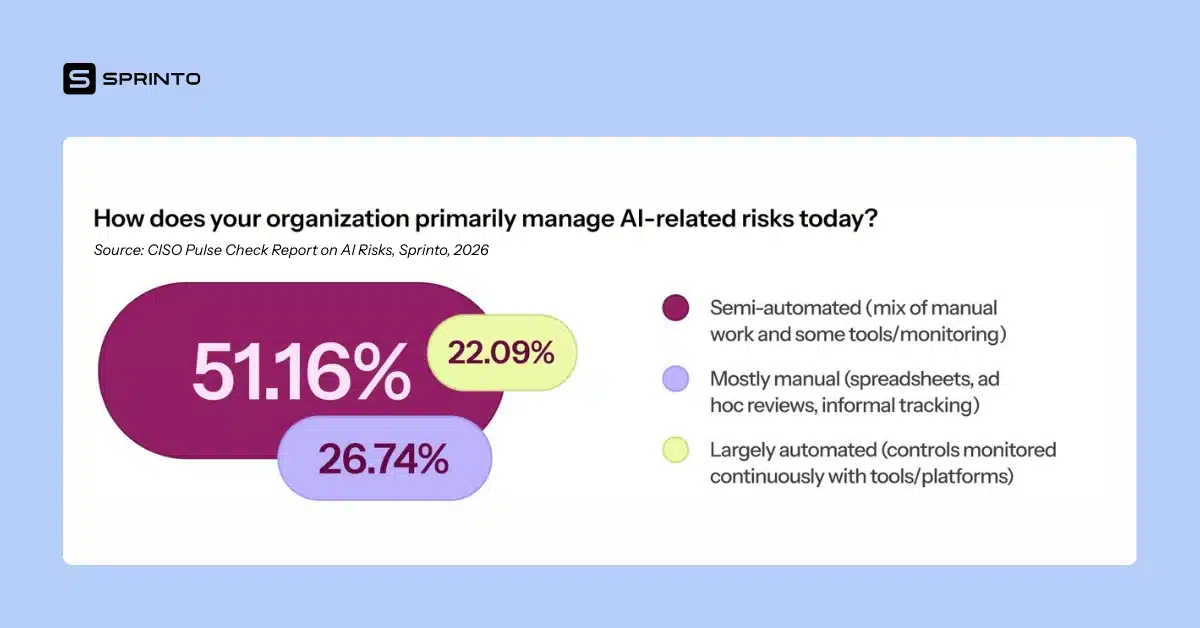

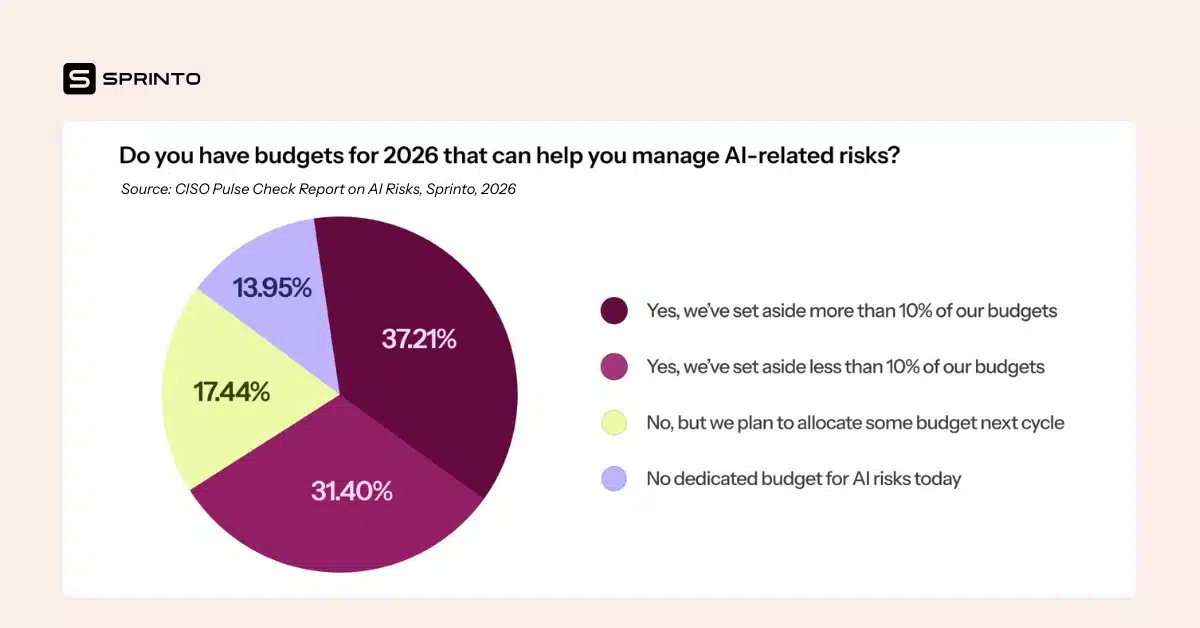

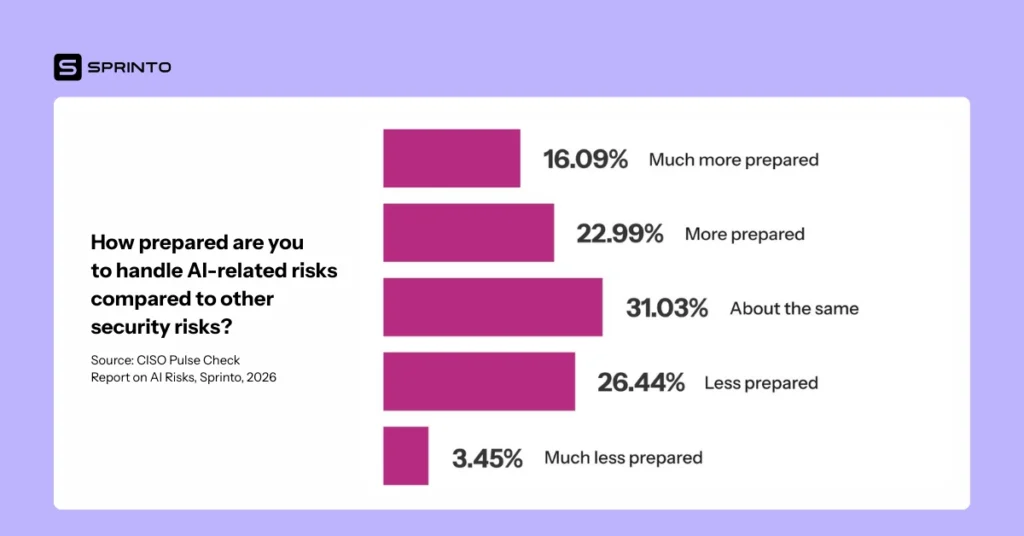

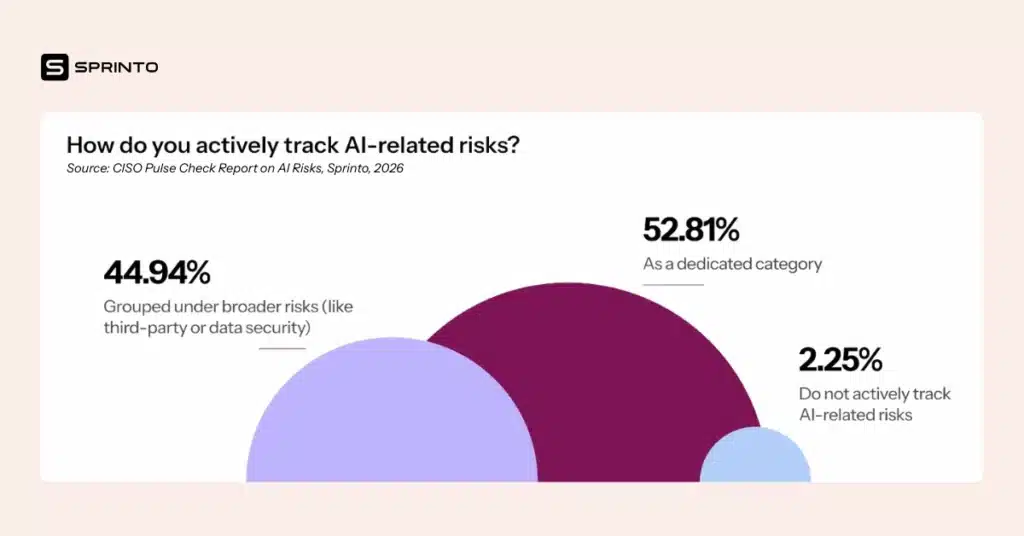

Over 30% of organizations have reported a major AI-related security incident in the last 12 months. Meanwhile, 53% of organizations now track AI as a dedicated risk category, but 39% do not consistently enforce AI usage policies. On the operational side, 27% of organizations still manage AI risk mostly manually, and two out of three take longer than a week to implement changes after identifying an AI risk. That said, 70% of organizations have dedicated budgets in 2026 to invest in building their AI governance stack.

The pattern is consistent: awareness is high, but execution is lagging. And the gap between knowing about AI risk and actually managing it is exactly where incidents happen.

What You Should Be Doing Now

If you are responsible for TPRM at your organization, here are the practical steps to close the AI risk gap in your vendor ecosystem.

- Update your vendor questionnaire to include AI-specific questions. At a minimum, you should be asking every vendor whether they use AI or machine learning in their product, what models they use and who provides them, and whether customer data is used for model training. You also need to understand where inference processing occurs and whether their AI capabilities have changed since your last assessment.

- Add AI clauses to your contracts and security addenda. Your data processing agreements should explicitly restrict the use of your data for model training and require disclosure of material changes to AI capabilities or model providers. This gives you the contractual right to reassess when those changes occur, rather than discovering them after the fact.

- Reassess vendors that have added AI capabilities since onboarding. Do not wait for the annual review cycle. If a vendor has materially changed its AI posture, that warrants a reassessment now. Treat AI like any other evolving third-party dependency: the longer you wait, the wider the gap between your risk register and your actual exposure.

- Build visibility into AI tool usage across your organization. Many teams are starting with browser-level monitoring or SSO activity logs to understand which AI-enabled services are actually being used. Web traffic logs are a practical starting point, and over time, you can mature to monitoring endpoint telemetry, API activity, and SaaS usage signals.

- Tier your vendors based on AI risk. Not every vendor with AI capabilities poses the same level of risk. A vendor that uses AI for internal analytics is fundamentally different from one that processes your customer data through a third-party LLM. So your tiering criteria should now factor in data sensitivity in the context of AI processing, the vendor’s model provider chain, and whether AI usage is core to the service or peripheral.
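The tiering criteria in the last step can be sketched as a simple scoring function. This is one possible scheme, not a standard: the `VendorAIProfile` fields, the extra weight on data sensitivity, and the tier cut-offs are all assumptions you would tune to your own program.

```python
from dataclasses import dataclass


@dataclass
class VendorAIProfile:
    handles_sensitive_data: bool  # customer or regulated data passes through AI
    third_party_model: bool       # inference relies on an external model provider
    ai_is_core: bool              # AI is central to the service, not peripheral


def ai_risk_tier(profile: VendorAIProfile) -> str:
    """Map the three criteria to a tier using an illustrative scoring scheme."""
    score = (
        2 * int(profile.handles_sensitive_data)  # weighted higher by assumption
        + int(profile.third_party_model)
        + int(profile.ai_is_core)
    )
    if score >= 3:
        return "high"
    if score >= 1:
        return "medium"
    return "low"


# A vendor sending customer data through a third-party LLM.
print(ai_risk_tier(VendorAIProfile(True, True, False)))
# A vendor using its own models for peripheral internal analytics.
print(ai_risk_tier(VendorAIProfile(False, False, False)))
```

The value of writing the rules down, even this crudely, is that every vendor is tiered against the same criteria and the reasoning behind a tier can be reviewed later.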

The Bottom Line

Third-party AI risk is not a future problem. It is a current one. The vendors in your portfolio today are not the same vendors you assessed a year ago. AI capabilities have been added, model providers have changed, and data flows have been rerouted, often without any notification to your security team.

But here is the good news: you already know how to manage this type of risk. Vendor reviews, contractual controls, reassessment workflows, risk tiering. These are all familiar tools. What has changed is the scope of what they need to cover. AI is now a first-class risk category in your vendor ecosystem, and your TPRM program needs to treat it that way.

Author

Srikar Sai

As a Senior Content Marketer at Sprinto, Srikar Sai turns cybersecurity chaos into clarity. He cuts through the jargon to help people grasp why security matters and how to act on it, making the complex accessible and the overwhelming actionable. He thrives where tech meets business.