TL;DR

| The EU AI Act is, at its core, a product-safety law for AI, not another data-protection law. The focus is on intended purpose, risk classification, controls, and evidence, not just data handling. |

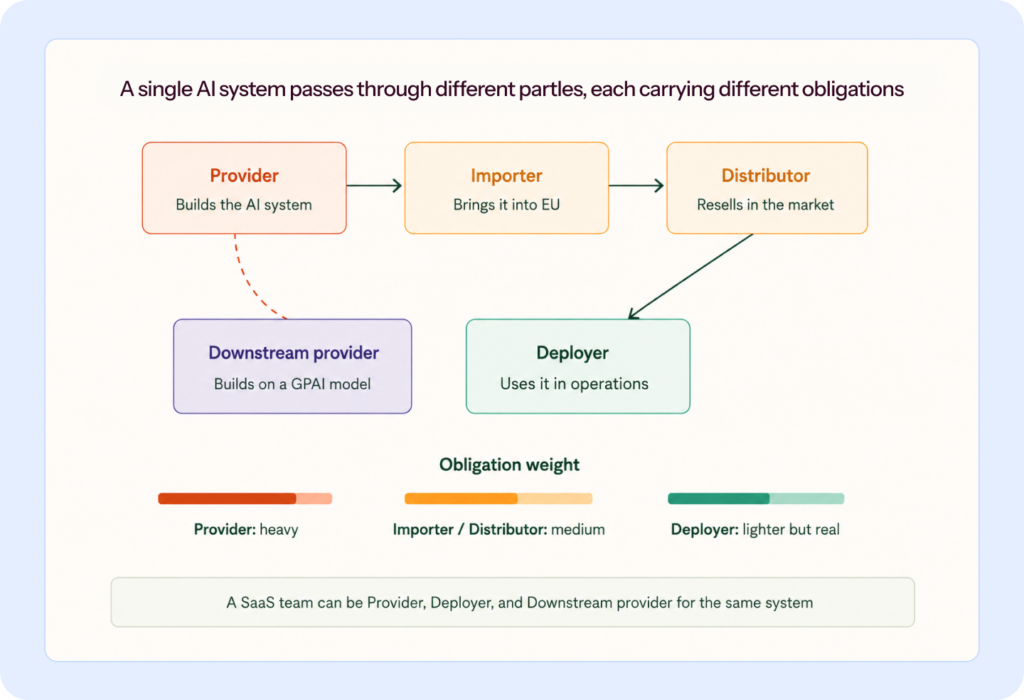

| Your obligations depend on your role in the AI value chain (provider, deployer, importer, distributor, or downstream provider), not just on the AI itself. |

| If you’re already running SOC 2 or ISO 27001, you have most of the scaffolding. What’s missing is AI-specific: an inventory that catches shadow tools, a defensible classification per system, and continuous monitoring that survives model swaps. |

The EU AI Act is the hardest compliance obligation most SaaS leaders will face this decade, and the rules are still being rewritten as the deadline approaches. Prohibited practices have been in force since February 2025. AI literacy obligations are already live. High-risk obligations phase in through 2026 and 2027. Meanwhile, lawmakers are negotiating amendments that may shift key deadlines, but until they pass, the original ones hold.

Here is how it plays out in practice: a US-based fintech can route customer transaction data to a US-hosted fraud detection model with relatively light scrutiny. An identical fintech serving EU customers cannot. The GDPR’s cross-border transfer rules are part of the reason. The EU AI Act layered on top is the rest of it. Same feature, same code, yet radically different compliance burden.

If you’re running a business that embeds OpenAI, Anthropic, or Google models and has European customers, this might well apply to you. This article walks through what the Act actually requires, your specific obligations depending on your role, and how to comply without breaking your product velocity.

What is the EU AI Act?

The EU AI Act is the world’s first comprehensive, horizontal law regulating artificial intelligence. It classifies AI by risk, banning some uses outright, imposing strict obligations on high-risk systems, requiring transparency for limited-risk systems like chatbots, and leaving most everyday AI alone. It applies to public and private actors, inside and outside the EU, when they place an AI system or general-purpose AI model on the EU market, put an AI system into service, or use it in the EU. The law entered into force on August 1, 2024, with provisions phasing in over several years.

You do not need an EU office to be in scope. If your product reaches EU users, or if your AI output is used in the EU, the Act may apply to you.

Is the EU AI Act similar to GDPR?

No, and treating it as GDPR’s successor is the first mistake your compliance program can make.

GDPR regulates what you do with personal data. The AI Act regulates AI systems as products placed on the European market, using the same regulatory architecture the EU has applied to cars, medical devices, and machinery for decades. That distinction changes which muscles you need: conformity assessment, technical documentation, and post-market monitoring, not privacy notices and lawful basis.

Think of it this way: if GDPR is a privacy policy you file, the AI Act is a crash test you pass. Before a carmaker sells a new vehicle in Europe, independent testing confirms the brakes work, the airbags deploy, and the crumple zones crumple correctly. The manufacturer keeps technical documentation proving it. After launch, defects are logged and reported. The AI Act applies the same playbook to AI systems.

| Dimension | GDPR | EU AI Act |
| --- | --- | --- |
| Regulates | Processing of personal data | AI systems as products |
| Primary question | Is personal data handled lawfully? | Does the AI system meet safety and fundamental rights requirements? |
| Legal architecture | Rights-based | Product safety |
| Core obligations | Consent, lawful basis, DPO, DPIA, breach notification | Conformity assessment, technical documentation, CE marking, post-market monitoring |
| Applies to | Data controllers and processors | Providers, deployers, importers, distributors, downstream providers |
| Maximum fine | €20M or 4% of global turnover | €35M or 7% of global turnover |

The two laws coexist. If you are training AI on personal data, both apply. But building an AI Act program by extending your GDPR playbook will leave you exposed to the product safety dimensions that actually matter.

What EU AI Act compliance actually means

“Over 30% of organizations have experienced a major AI-related security incident within the past 12 months.”

~ From 2026 AI Pulse Check Report.

EU AI Act compliance means meeting the legal obligations the Act imposes on your business based on two things: your role in the AI value chain and the risk classification of each system.

For instance, a company building its own AI-enabled hiring tool is not in the same position as one using an off-the-shelf internal note-taking tool. A SaaS team embedding a foundation model into its own feature is not simply a user; the model vendor sits upstream, and downstream obligations still apply.

Three terms, compliance, governance, and risk management, get muddled in these conversations, and keeping them separate matters:

- Compliance is meeting the binding legal obligations the Act imposes on you.

- Governance is the internal structure, policies, accountability, and roles that enable compliance.

- Risk management is the ongoing process of detecting and addressing AI-specific risks.

AI Act compliance is not a legal team project. Every enterprise practitioner I’ve tracked reaches the same conclusion: the work has to be cross-functional, or it fails.

“AI does not just introduce new risk; it amplifies familiar ones like bias, opacity, privacy issues, accuracy failures, and security vulnerabilities, which is why governance cannot be separated from ongoing risk management.” ~ Shea Brown, CEO at Babl.ai [An excerpt from Understanding ISO 42001 webinar].

Legal can interpret the text. But the people who know what AI is actually running in your organization are in product, engineering, HR, marketing, and support. The people who need to follow the rules day to day are developers and operators. If you hand this over entirely to lawyers, you’ll produce a policy document that no one will implement. If you hand it entirely to engineers, you’ll implement controls that don’t map to the law.

The teams that do this well often build a standing committee featuring legal, security, product, ML/data, and an executive sponsor. They meet, they argue, and they document. They treat compliance as a continuous obligation, not a one-time project.

Why early compliance matters, beyond avoiding fines

I’ll talk about fines later, but before that, I want to discuss three consequences that are bigger than fines for most companies:

- Access to EU funding is getting tied to compliance.

The EU’s Apply AI Strategy is a €1 billion deployment push targeting healthcare, manufacturing, energy, and public services. Practitioners have flagged deals in which businesses were invited to national pilots, conditional on AI Act alignment. If you’re selling to the EU public sector or regulated industry, the goalposts have already moved.

- Enterprise procurement is catching up.

Financial sponsors are building AI risk assessment into deal playbooks. Large enterprise buyers are adding AI Act questions to vendor questionnaires. If you sell into EU enterprises, you can no longer afford to adopt a ‘wait-and-watch’ approach based on when enforcement starts.

- Compliance is becoming a strategic differentiator, not just a cost.

The most sophisticated buyers aren’t waiting for regulators to act. They’re treating good governance as a proxy for a vendor being ready to scale.

The more useful opening question is not whether the AI Act applies to your company. It is this: which of your AI use cases are in scope, what do they trigger, and what do you need to operationalize now?

“Customers have a lot of questions since we’re an AI company and the Trust Center helps make these discussions easier–we can share our policies, compliance reports, pentests, everything prospects need, at the click of a button.”

~ Deepak Singla, Founder & CEO, Fini AI

EU AI Act risk categories

Most AI systems fall into the lowest-risk bucket and face essentially no new obligations. The Act is built on a risk-based approach, but with a specific twist. It is not asking whether the AI could cause harm in the abstract. It asks whether the AI makes a consequential decision about a person in one of the contexts the law specifically calls out, such as employment, education, credit, essential services, law enforcement, migration, critical infrastructure, and so on (Annex III lists them).

An AI that generates marketing copy or product images isn’t making decisions about anyone. An AI that screens job applicants is making a consequential decision in a listed context, so the heavy obligations apply.

The Act defines four tiers. Here they are, with what each means for you:

| Tier | What it means | Typical examples | What you have to do |
| --- | --- | --- | --- |
| Unacceptable risk | Banned outright | Social scoring systems, emotion recognition in workplaces or schools, manipulative subliminal AI, untargeted facial-image scraping | Don’t build or deploy these |
| High-risk | Heavy obligations | AI in resume screening, credit decisioning, education admissions, law enforcement, migration triage, critical-infrastructure control, AI safety components in medical devices | Full conformity assessment, risk management system, documentation, human oversight, registration in EU database |
| Limited-risk (transparency) | Article 50 transparency obligations | Customer support chatbots (e.g., Intercom Fin, Zendesk AI), deepfake-generation tools, AI-generated marketing imagery | Disclose that AI is involved; label deepfakes; watermark generative content |
| Minimal risk | No specific obligations | Spam filters, AI-assisted code completion (e.g., GitHub Copilot for internal use), in-game NPC behavior, recommendation engines for content discovery | Voluntary codes of conduct encouraged |

A given product can move between tiers depending on how it’s deployed. Facial recognition in your phone’s photo app is minimal-risk. The same technology used by a security-focused startup is high-risk or potentially banned. Classification follows use cases, not technology.
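To make "classification follows use cases, not technology" concrete, here is a minimal first-pass triage sketch. The use-case labels and the three lookup sets are hypothetical and deliberately incomplete; the authoritative lists are in the Act itself, and anything this function returns is a starting point for review, not a legal conclusion.

```python
from enum import Enum


class Tier(Enum):
    UNACCEPTABLE = "unacceptable risk"
    HIGH = "high-risk"
    LIMITED = "limited-risk (transparency)"
    MINIMAL = "minimal risk"


# Hypothetical labels drawn from the examples in the table above.
# These sets are illustrative only and far from exhaustive.
PROHIBITED_USES = {"social_scoring", "workplace_emotion_recognition", "untargeted_face_scraping"}
HIGH_RISK_USES = {"resume_screening", "credit_decisioning", "education_admissions", "migration_triage"}
TRANSPARENCY_USES = {"customer_support_chatbot", "deepfake_generation", "generated_marketing_imagery"}


def first_pass_tier(use_case: str) -> Tier:
    """Triage by use case. The underlying technology is intentionally not an input."""
    if use_case in PROHIBITED_USES:
        return Tier.UNACCEPTABLE
    if use_case in HIGH_RISK_USES:
        return Tier.HIGH
    if use_case in TRANSPARENCY_USES:
        return Tier.LIMITED
    # Unlisted use cases fall through to minimal here; a real program
    # would route them to a human reviewer before accepting that result.
    return Tier.MINIMAL
```

The same vision model could sit behind both `first_pass_tier("workplace_emotion_recognition")` and a photo-sorting feature and land in opposite tiers, which is the point of keying on the use case.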

Plus one category that sits separately from the tiers:

General-purpose AI (GPAI) models: Base models like GPT, Gemini, Claude, and Mistral have their own obligations regardless of the tier above. Models trained using more than 10²⁵ FLOPs of compute are presumed to carry systemic risk, which triggers a stricter tier of obligations.
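For a rough sense of where the compute threshold sits, the sketch below compares an estimated training budget against 10²⁵ FLOPs. The estimate uses the common rule of thumb of about 6 FLOPs per parameter per training token for dense transformer models; that heuristic is an assumption for illustration, not a method taken from the Act, and the function names are hypothetical.

```python
SYSTEMIC_RISK_FLOPS = 1e25  # threshold cited in the paragraph above


def estimate_training_flops(parameters: float, training_tokens: float) -> float:
    """Back-of-envelope estimate: ~6 FLOPs per parameter per token (assumed heuristic)."""
    return 6.0 * parameters * training_tokens


def presumed_systemic_risk(training_flops: float) -> bool:
    """True when the compute figure exceeds the 10^25 FLOPs presumption threshold."""
    return training_flops > SYSTEMIC_RISK_FLOPS


# Hypothetical model sizes, for illustration only.
mid_size = estimate_training_flops(parameters=7e10, training_tokens=2e12)    # about 8.4e23
frontier = estimate_training_flops(parameters=1e12, training_tokens=1e13)    # about 6e25
```

Under these assumed figures, the 70-billion-parameter run stays below the threshold by more than an order of magnitude, while the larger run crosses it. Most SaaS teams will only ever consume models on one side or the other of this line, not train them.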

The high-risk classification is harder than it looks

This is where many implementations go wrong. The Commission’s own classification guidelines, originally due on February 2, 2026, are still not out as of April 2026. Until they are published, you are working from the legal text, Annex III, and published law firm flowcharts.

The Article 6(3) escape clause is where you should spend time.

Even if your AI operates in a high-risk context, say, you built a tool that supports hiring decisions, Article 6(3) gives you four criteria for qualifying out of high-risk classification. Your system may not be high-risk if it performs a narrow procedural task, improves a previously completed human activity, detects decision-making patterns without replacing or influencing the human assessment, or prepares material for an assessment rather than performing the assessment itself.

For instance, if your AI’s role is narrow enough (ordering data alphabetically, preparing a summary, detecting patterns without driving the final decision), you may not be high-risk at all.

The current Act also requires you to register in an EU database if you claim this escape clause. This is a traceability measure that lets regulators see which companies are self-classifying out. The Digital Omnibus proposed to remove this registration requirement, but it’s one of the most contested changes in the whole package.

A red flag worth watching: If you are applying Article 6(3) to claim your system is not high-risk, document the reasoning the way a regulator would want to read it. Walk through each of the four criteria, state how your system performs against each one, and record the evidence backing your conclusion. A casual claim will not hold up; a structured walkthrough will.
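One way to keep that walkthrough structured is to store it as a record with one finding per criterion and to check the record for gaps before anyone signs it. This is a minimal sketch; the class names, field names, and criterion labels are hypothetical shorthand for the four criteria described above, and the check only tests completeness of the write-up, not whether the exemption actually applies.

```python
from dataclasses import dataclass, field

# Shorthand labels (hypothetical) for the four Article 6(3) criteria.
CRITERIA = (
    "narrow_procedural_task",
    "improves_completed_human_activity",
    "detects_patterns_without_influencing_assessment",
    "preparatory_task_only",
)


@dataclass
class CriterionFinding:
    criterion: str
    met: bool
    reasoning: str
    evidence: list[str] = field(default_factory=list)


@dataclass
class Article63Assessment:
    system_name: str
    assessor: str
    findings: list[CriterionFinding]

    def gaps(self) -> list[str]:
        """List what would make this read as a casual claim rather than a walkthrough."""
        problems = []
        covered = {f.criterion for f in self.findings}
        for criterion in CRITERIA:
            if criterion not in covered:
                problems.append(f"{criterion}: not assessed")
        for f in self.findings:
            if not f.reasoning.strip():
                problems.append(f"{f.criterion}: no reasoning recorded")
            if f.met and not f.evidence:
                problems.append(f"{f.criterion}: claimed but no evidence")
        return problems
```

An assessment that addresses only one criterion would return three "not assessed" entries, which is a useful prompt to finish the analysis before relying on it.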

EU AI Act obligations by role

Your obligations depend on your role in the AI value chain, not just on the AI itself. The same AI system imposes completely different duties on different parties.

Here are the roles the Act recognizes:

- Provider: Develops and places an AI system on the EU market (heaviest obligations)

- Deployer: Uses an AI system under its own authority (lighter but real)

- Importer: First party bringing a non-EU AI system into the EU market

- Distributor: Any other party in the supply chain making the system available

- Downstream provider: Informal but critical for SaaS: you build AI systems on top of third-party base models

You can play multiple roles simultaneously. If you develop an AI-powered hiring tool and use it internally to screen your own candidates, you’re both provider and deployer, each with separate obligations.

- Provider obligations: For a high-risk AI system, a provider must meet the substantive requirements in Articles 8–15 (risk management, data governance, technical documentation, logging, transparency, human oversight, accuracy, and cybersecurity), operate a quality management system under Article 17, complete a conformity assessment, create a declaration of conformity, affix the CE marking, register the system in the EU database, and monitor it after launch while reporting serious incidents. It’s a heavy list, which is why most of this article is really about figuring out whether you have to do any of it in the first place.

- Deployer obligations: These include using the system in accordance with the provider’s instructions, assigning human oversight, monitoring and logging, ensuring staff AI literacy, informing workers when AI is used in the workplace, and, for certain public-sector uses, conducting a Fundamental Rights Impact Assessment.

- Downstream provider: This is the role most SaaS companies will occupy, and the one the Act’s drafters are still clarifying. The GPAI Code of Practice, published in final form in July 2025, is designed to give downstream providers the documentation and transparency they need from foundation model providers. If you embed OpenAI, Anthropic, or Google models in your product, treat this Code as your reading list.

As for ‘substantial modifications’, the Act treats you as a provider the moment you meaningfully change an AI system and put your name on it. Fine-tune a foundation model for your product? You’re likely a provider now. Rebrand a third-party AI as your own? Same answer. And provider obligations are heavy, including conformity assessment, technical documentation, registration, and liability for downstream use.

If your product strategy includes white-labeling AI, map this out with legal before you ship, not after.
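The role logic in this section can be sketched as a small function that takes a few yes/no facts about one system and returns the roles you likely hold. The parameter names are hypothetical, importer and distributor are left out for brevity, and "likely" is doing real work here: whether a change counts as a substantial modification is a legal judgment this sketch does not make for you.

```python
def likely_roles(
    *,
    develops_system: bool,
    uses_system: bool,
    builds_on_third_party_model: bool,
    substantially_modifies: bool = False,
    rebrands_as_own: bool = False,
) -> set[str]:
    """Map facts about one AI system to the roles described above (simplified)."""
    roles: set[str] = set()
    # Developing, substantially modifying, or rebranding a system points to provider status.
    if develops_system or substantially_modifies or rebrands_as_own:
        roles.add("provider")
    # Using a system under your own authority makes you a deployer.
    if uses_system:
        roles.add("deployer")
    # Building on someone else's base model adds the downstream provider role.
    if builds_on_third_party_model:
        roles.add("downstream provider")
    return roles
```

For the internal hiring tool example above, `likely_roles(develops_system=True, uses_system=True, builds_on_third_party_model=False)` returns both provider and deployer, each with its own obligations.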

The AI literacy obligation (already in force)

Since February 2, 2025, providers and deployers have had to ensure their staff have a sufficient level of AI literacy. This obligation is on the books today. The Digital Omnibus is revisiting it, and the final version may soften the standard, but the current rule applies until amendments pass.

Whichever version ends up being implemented, the principle is the same. You can’t deploy a high-risk AI system and hope your team figures it out. If the people running it don’t understand how it works, you’re carrying two risks at once: a compliance gap and a business one.

EU AI Act requirements: What businesses must do

If I strip the law down to basics, the requirements fall into a handful of recurring workstreams.

You need to:

- Know what AI you have (inventory).

- Know what role you play for each system (provider, deployer, importer, distributor, or downstream provider).

- Classify risk with enough rigor to defend the decision.

- Apply controls that match the obligations triggered by that system and role.

- Produce documentation and evidence showing the controls are real.

- Monitor continuously, because your systems, vendors, and use cases will not sit still for your convenience.

For high-risk AI systems, the substantive requirements cluster around Articles 8–15:

- Article 9: Risk management system (continuous, not one-time)

- Article 10: Data governance: training, validation, and testing data must be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete for the intended purpose

- Article 11: Technical documentation (similar in scope to a CE marking technical file)

- Article 12: Automatic record-keeping (logs)

- Article 13: Transparency and information to deployers

- Article 14: Human oversight

- Article 15: Accuracy, robustness, cybersecurity

Plus separate obligations for registration, post-market monitoring (Article 72), incident reporting, and conformity assessment.

The unpleasant truth about these requirements is that they’re deliberately generic. Human oversight means something specific for a hiring algorithm and something different for a medical device. The operational detail is supposed to come from harmonized standards written by CEN-CENELEC JTC21. Those standards were due by April 2025. They missed that deadline and are now targeting the end of 2026.

You cannot use the missing standards as a legal defense. Standards are a voluntary compliance pathway, not a precondition for the Act applying. If you go to court and argue you weren’t ready because the standards weren’t published, you are likely to lose.

So what do you do in the meantime? You work from the Act’s text, the Commission’s evolving guidance, the codes of practice, and published law firm interpretations. You document your reasoning. You build your controls around the principles. When the standards land, you map your existing work to them.

EU AI Act compliance by industry

By now, you’ve got the Act’s fundamentals. It is product safety legislation; your obligations depend on your role and the risk tier of each system, and most systems won’t be high risk. What’s left is evaluating how it applies to the specifics of your industry, because the same rules land very differently depending on what you build.

- Financial services: This segment may get hit hardest in visible ways. Credit scoring and AI-powered lending are explicitly listed as high-risk under Annex III. Fraud detection models regularly straddle the line. Add DORA, NIS2, and industry-specific regulators, and financial services firms are managing the densest overlap of any industry.

- Healthcare and medical devices: This industry carries the heaviest combined burden: EU MDR, GDPR’s special-category data rules, and the AI Act’s high-risk requirements all stack. The Act’s interplay with MDR/IVDR is one of the specific implementation issues the Digital Omnibus is trying to clarify, with mixed success so far.

- Critical infrastructure, education, automotive, and law enforcement: These industries all have explicit Annex III or Annex I exposure and follow the standard high-risk compliance path.

- HR tech: Another segment that has an equally direct path to high-risk classification. Resume screening, performance evaluation, and interview scoring all fall under Annex III. A single product feature can push you from compliance-lite SaaS to a full provider with conformity assessment. This is where Article 6(3) analysis matters most: the line between AI that supports the hiring decision and AI that drives it is the line between two very different compliance programs.

EU AI Act for SaaS businesses

Third-party AI is not a one-time vendor review. Say a vendor you approved last year adds an LLM-powered feature that routes your data to a new model provider. Without reassessment, your risk posture changes silently. You are now exposed to a subprocessor you never evaluated, operating under terms you never reviewed. And because the vendor was not contractually required to disclose the change, it may go undetected for months.
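A lightweight way to catch this kind of silent change is to keep a snapshot of what you approved for each vendor and diff it against what the vendor currently discloses. The sketch below assumes a hypothetical snapshot shape with `subprocessors` and `ai_features` lists; how you obtain the current snapshot (trust page, contract notice, questionnaire) is outside its scope.

```python
def reassessment_reasons(approved: dict, current: dict) -> list[str]:
    """Return the reasons a previously approved vendor needs a fresh review."""
    reasons: list[str] = []
    for key, label in (("subprocessors", "new subprocessor"), ("ai_features", "new AI feature")):
        # Anything present now that was absent at approval time is a trigger.
        added = set(current.get(key, [])) - set(approved.get(key, []))
        reasons.extend(f"{label}: {item}" for item in sorted(added))
    return reasons


# Hypothetical vendor snapshots, for illustration only.
approved_last_year = {"subprocessors": ["cloud-host"], "ai_features": []}
disclosed_today = {"subprocessors": ["cloud-host", "model-vendor-x"], "ai_features": ["llm-summaries"]}
```

An empty list means nothing in the snapshot changed since approval; any entry is a prompt to re-run due diligence now instead of waiting for the annual review.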

Sprinto’s autonomous TPRM is built around exactly this: it discovers vendors as they enter your environment, scores exposure based on what data they actually touch, and re-runs due diligence when a dependency changes, not when an annual review comes up.

That is the reality most SaaS compliance programs are not built for. The AI Act does not carve out a special category for your situation; it asks the same questions it asks any other business, but the answers depend heavily on what you actually ship and where the AI sits in your product.

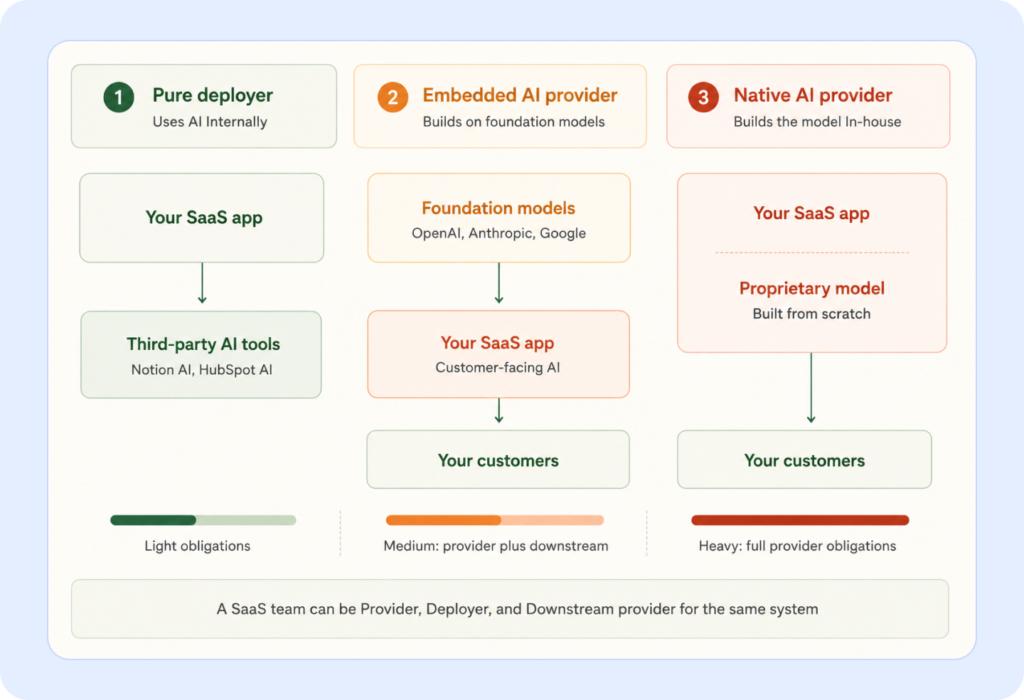

Three archetypes cover most of these real-world cases.

Archetype 1: Pure deployer.

You may be using off-the-shelf AI tools internally, such as HubSpot AI, Notion AI, and an AI coding assistant. Basically, you’re not building AI features into your product.

Your obligations are lightest. Use tools according to provider instructions, assign human oversight, roll out AI literacy training, log incidents, and disclose AI in the workplace. That’s most of it.

Archetype 2: Embedded AI provider.

You build product features on top of third-party base models from vendors such as OpenAI, Anthropic, Google, and Mistral. This is where most modern SaaS lives.

You’re both a provider (of your own product) and a downstream provider (building on someone else’s GPAI model). You carry your own provider obligations, and you need a working relationship with your model vendor that gives you adequate documentation. The GPAI Code of Practice will clarify what you should expect from them. Your contracts need to reflect it.

Archetype 3: Native AI provider.

You build proprietary models or AI systems from the ground up.

You’re a full provider with all the obligations: conformity assessment, technical documentation, registration, and, if you cross the high-risk threshold, CE marking. This is the heaviest compliance burden in the Act.

When your model provider sits outside the EEA

ChatGPT, Gemini, and Copilot are all US-operated. Every time your product sends European customer data to one of these services, it’s a cross-border data transfer under GDPR Chapter V. The GDPR side of that is already demanding. The AI Act imposes additional obligations.

You have three architectural patterns for dealing with this:

- EU-region enterprise services with data-processing agreements: Use Azure, Google Cloud, or AWS in EU regions, with DPAs that prohibit training on your data and restrict processing to the EEA. Where a transfer to the US is still necessary, the EU-US Data Privacy Framework provides an adequacy basis for transfers to US companies certified under it.

- Bring the model to the data: Run open-source or enterprise models inside your own environment, whether private cloud, on-premise, or VPC. Data never leaves your controlled perimeter. This is increasingly standard for healthcare, finance, and legal tech SaaS.

- Hybrid with strict data minimization: Process sensitive data locally, and send only de-identified or aggregated signals to external AI services.
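The hybrid pattern can be sketched as a local scrubbing step that runs before any text leaves your perimeter. The two patterns below (email addresses and long digit runs) are assumptions chosen for illustration; pattern-based redaction like this reduces what you send, but it does not by itself make data anonymous in the GDPR sense.

```python
import re

# Illustrative patterns only; a real deployment would need a broader, tested set.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
LONG_NUMBER = re.compile(r"\b\d{8,}\b")  # account numbers, phone numbers, and similar


def minimize(text: str) -> str:
    """Replace direct identifiers with placeholders before calling an external AI service."""
    text = EMAIL.sub("[EMAIL]", text)
    return LONG_NUMBER.sub("[NUMBER]", text)


scrubbed = minimize("Refund ana@example.com for account 1234567890")
# scrubbed == "Refund [EMAIL] for account [NUMBER]"
```

The original text stays in your environment; only `scrubbed` would be passed to the external model, and any mapping back to real identifiers happens locally.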

Warning signs in SaaS compliance right now

- Consent is enough: A dangerous myth. The European Data Protection Board has stated formally that consent-based transfer derogations under GDPR Article 49 are intended for exceptional, one-off transfers. Routine uploads to a US-hosted AI service do not qualify. You need a proper transfer mechanism: participation in the EU-US Data Privacy Framework, Standard Contractual Clauses, or Binding Corporate Rules.

- Vendor compliance transfers to you: It does not. Your vendor’s compliance covers their obligations. You have your own obligations as a downstream provider, and these do not flow through.

- Chatbots without AI disclosure: A cheap, avoidable miss. Build it into your UI by default.

- Fine-tuning without understanding the consequences: Substantial modification may make you the provider for the modified system. That shifts the compliance burden onto you.

- Assuming anonymization is easier than it is: Metadata, outliers, rare edge cases, and background context can all enable re-identification. GDPR’s bar for anonymity is high: the risk of re-identification must be negligible, and you need a formal assessment.

Customer-facing transparency requirements

Article 50 governs transparency for limited-risk AI systems. Four requirements to plan for:

- Chatbots must disclose that they are AI, unless it is obvious from context.

- Deepfakes must be labeled.

- AI-generated content in matters of public interest must be marked as such.

- Watermarking obligations apply to generative AI output.

These become applicable on August 2, 2026. The Digital Omnibus proposes a grandfathering clause giving systems placed on the market before that date an extra year. New launches after August 2026 get no grace period. The cheap miss is shipping the feature and bolting on the notice later. Build the disclosure into the UI by default.
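Building the disclosure in by default can be as simple as making the chat session itself responsible for it, so no feature team has to remember. This is a minimal sketch with a hypothetical `ChatSession` class and placeholder wording; the actual notice text and placement are product and legal decisions.

```python
from dataclasses import dataclass

AI_DISCLOSURE = "You are chatting with an AI assistant."  # placeholder wording


@dataclass
class ChatSession:
    disclosed: bool = False

    def reply(self, model_output: str) -> str:
        """Prefix the first reply of every session with the AI disclosure."""
        if not self.disclosed:
            self.disclosed = True
            return f"{AI_DISCLOSURE}\n\n{model_output}"
        return model_output
```

Because the flag lives on the session, every new conversation starts with the notice, and later replies in the same conversation are passed through unchanged.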

Step-by-step EU AI Act implementation guide

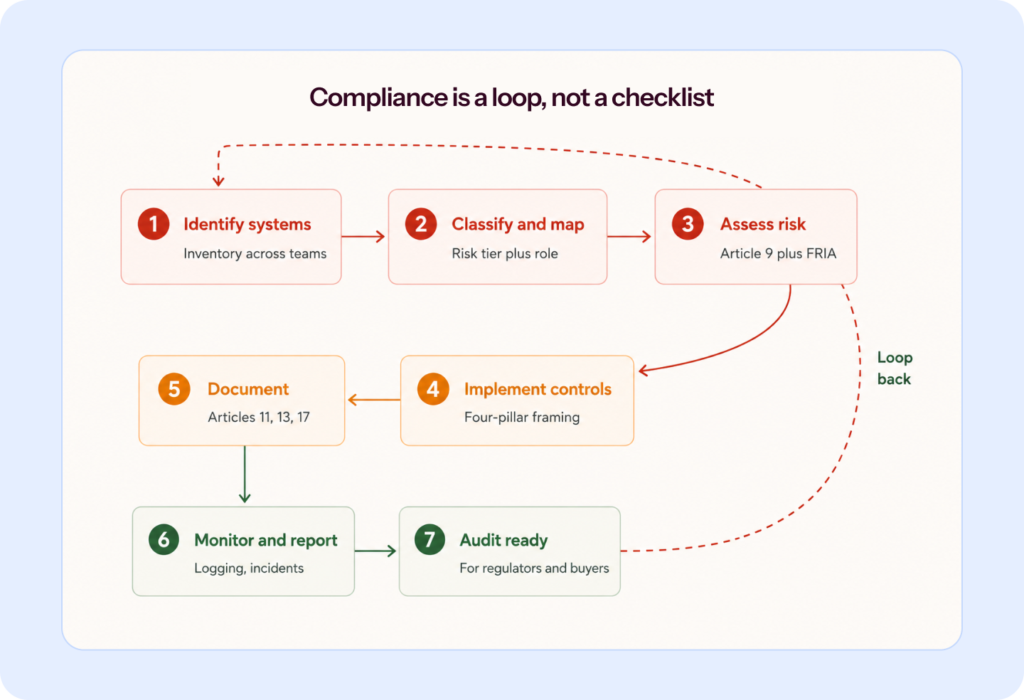

Based on conversations with practitioners and analysis of expert commentary, I’ve distilled EU AI Act implementation into seven steps. They won’t cover every edge case, but they’ll get you oriented.

Step 1: Identify AI systems and use cases

Start with an inventory. Not just bought tools. Not just model providers. Not just what procurement knows about.

Talk to product, engineering, security, IT, HR, support, marketing, legal, and operations. Ask what AI they use, what AI features are embedded in existing tools, what data those systems touch, and what teams have been trying informally.

Categorize what you find: built by us, bought off the shelf, embedded in vendor software, or shadow AI. Then create an intake process for new AI adoption. If the approval path is absurd, people will route around it.

The first operational question after inventory is not which tool to buy. It is who owns what. Mature programs start by deciding ownership across inventory, classification, policy updates, model documentation, vendor reviews, incident reporting, and training. Without that, the program gets patched together around milestone dates, then frays as soon as product teams speed up and the audit season ends.
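As a starting point, the inventory can be a plain list of records that capture the four categories above plus an owner, with a query for entries nobody owns yet. The class and field names here are hypothetical; a spreadsheet with the same columns would serve the same purpose.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Source(Enum):
    BUILT = "built by us"
    BOUGHT = "bought off the shelf"
    EMBEDDED = "embedded in vendor software"
    SHADOW = "shadow AI"


@dataclass
class AISystem:
    name: str
    source: Source
    data_touched: list[str]
    owner: Optional[str] = None  # None means nobody has claimed it yet


def unowned(inventory: list[AISystem]) -> list[str]:
    """Names of systems with no accountable owner, in inventory order."""
    return [system.name for system in inventory if system.owner is None]


# Hypothetical entries, for illustration only.
inventory = [
    AISystem("support-chatbot", Source.BUILT, ["customer messages"], owner="product"),
    AISystem("meeting-notetaker", Source.SHADOW, ["internal calls"]),
]
```

Running `unowned(inventory)` on the sample returns the shadow note-taker, which is typically where ownership gaps show up first.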

Sprinto helps detect shadow AI, maintain a live AI inventory, and map tools to owners, vendors, and risk signals.


Step 2: Classify risk

Work through each system against Annex III (high-risk use cases) and Annex I (product-regulated AI). For anything that could be high-risk, carefully work through the Article 6(3) criteria. Document the reasoning, not just the outcome.

This is where most gaps show up later. An auditor or regulator will want to see how you arrived at your decision, not just what you decided.

Step 3: Conduct risk and impact assessments

Step 3 is where Article 9’s risk management system obligation becomes operational. For certain deployers, particularly in the public sector, you’ll also need a Fundamental Rights Impact Assessment. The Danish Institute for Human Rights and the European Center for Not-for-Profit Law have published a five-phase FRIA guide with a downloadable template. Use it.

Treat both assessments as continuous processes tied to your software development lifecycle, not one-time exercises filed away.

Step 4: Implement required controls

This is where legal text becomes operating reality. You can adopt a four-point approach for this:

- Transparency: Can you explain why the AI made a decision?

- Accountability: Is there a named human responsible?

- Fairness: Is the training data unbiased?

- Safety and security: Are you protected against prompt injection, data leakage, and IP exfiltration?

For each point, define specific checks and, where possible, run them automatically in your deployment pipeline. The goal is compliance-as-code: rules encoded into the tools developers already use, so the compliant path is also the easiest.
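A compliance-as-code gate might look like the sketch below: each of the four points becomes a named check against a release manifest, and the pipeline blocks when any check fails. The manifest keys and check wording are hypothetical; the real checks would be whatever your program defines for each point.

```python
from typing import Callable

Check = Callable[[dict], bool]

# One illustrative check per point; manifest keys are assumed, not standard.
CHECKS: dict[str, Check] = {
    "transparency: explanation method documented": lambda m: bool(m.get("explanation_method")),
    "accountability: named owner": lambda m: bool(m.get("owner")),
    "fairness: bias evaluation attached": lambda m: bool(m.get("bias_report")),
    "safety: prompt-injection tests passed": lambda m: m.get("prompt_injection_tests") == "passed",
}


def failed_checks(manifest: dict) -> list[str]:
    """Return the names of checks the release manifest does not satisfy."""
    return [name for name, check in CHECKS.items() if not check(manifest)]


# Hypothetical manifest for a release candidate.
manifest = {"owner": "ml-platform", "explanation_method": "feature attributions", "prompt_injection_tests": "passed"}
```

In a deployment pipeline, a non-empty result from `failed_checks(manifest)` would fail the job; with the sample manifest, only the fairness check is reported because no bias report is attached.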

Step 5: Document policies, processes, and models

A good program leaves an evidence trail. For each AI system, document the intended purpose, who classified it, the classification reasoning, which controls were approved, which vendor documents were received, and what changed later. Technical documentation for high-risk systems is extensive (similar in scope to a CE marking technical file), so start early and version-control it alongside the system.

Documentation pulls double duty: a legal shield when regulators ask questions, and an increasingly useful marketing asset when enterprise buyers or investors ask.

Step 6: Establish monitoring and reporting

Your inventory will drift. Your vendors will change. Your models will update. Your staff will adopt tools you did not approve. Your product team will add features faster than the policy deck updates. Monitoring is what stops the program from becoming a point-in-time fiction.

Most assurance mechanisms are retrospective. Audits are look-back exercises: they tell you how a system operated months ago, not whether it is operating safely now. Point-in-time evidence is necessary but insufficient.

You need present-tense controls: logging under Article 12, drift detection, version-aware change management, defined incident thresholds, and a monitoring layer that survives prompt changes, model swaps, fine-tunes, and new downstream uses. Providers must report serious incidents to market surveillance authorities no later than 15 days after becoming aware of them, with shorter windows for the most severe cases: 2 days for a widespread infringement or a serious disruption of critical infrastructure, and 10 days where a person has died.
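Version-aware change management can start with a fingerprint of the configuration you approved, compared against what is actually running. This sketch assumes a hypothetical config dictionary with `model`, `prompt_sha`, and `fine_tune` keys; any field that affects behavior belongs in it.

```python
import hashlib
import json


def fingerprint(config: dict) -> str:
    """Stable SHA-256 hash of a configuration, independent of key order."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_fields(approved: dict, running: dict) -> list[str]:
    """Names of fields whose values differ between the approved and running configs."""
    keys = approved.keys() | running.keys()
    return sorted(k for k in keys if approved.get(k) != running.get(k))


# Hypothetical configurations, for illustration only.
approved = {"model": "vendor-model-a", "prompt_sha": "abc123", "fine_tune": None}
running = {"model": "vendor-model-b", "prompt_sha": "abc123", "fine_tune": None}
```

Comparing `fingerprint(approved)` with `fingerprint(running)` is a cheap check to run on every deploy; when they differ, `changed_fields` tells the reviewer what to look at, here the swapped model.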

Step 7: Prepare for audits and regulatory reviews

Before a regulator cares, your buyers will. You should be able to explain your inventory, your role mapping, your classification decisions, your AI literacy approach, your transparency measures, your governance owners, and how you monitor third-party AI over time.

One underappreciated cost is evidence drag. Audit fatigue starts when engineers are pulled into gathering logs, screenshots, and one-off proof for controls they do not own day to day. The better model is to let your GRC layer self-serve evidence wherever possible and reserve human attention for exceptions, judgment calls, and remediation decisions. That is what makes a program scalable rather than just auditable.

Another forward-looking option worth considering: regulatory sandboxes. The Act establishes a framework for controlled testing environments in which you can develop and validate AI systems under regulatory supervision before full market release. If your use case is novel, you’re in a heavily regulated industry, or you want early regulator clarity, sandboxes are worth applying to. Availability varies by Member State, and the Digital Omnibus may shift sandbox establishment to December 2, 2027.

“Buyers are more likely to ask whether you’re compliant with the EU AI Act than whether you’re ISO 42001 certified, because the Act carries hard law, hard fines, and concrete obligations like quality management, documentation, and logging.” ~ Shea Brown, CEO at Babl.ai [An excerpt from Understanding ISO 42001 webinar].

EU AI Act compliance checklist

The seven steps above describe the work; the five-layer checklist below is the artifact you produce as you do it. This checklist is the ready-reference you bring to a stand-up, an audit prep, or a Monday morning when you need to remember what stage you’re at. Treat it as a sanity check, not a substitute for the full work.

Layer 1: Foundation

- AI inventory built: You have a complete inventory of every AI system in use, including features embedded in third-party tools and shadow AI adopted by employees, with each one categorized as built, bought, embedded, or informal.

- Role mapped per system: For each AI system, you have identified whether you are a provider, deployer, importer, distributor, or downstream provider, and you have documented cases where you hold multiple roles separately.

- Risk tier classified: You have classified each system as prohibited, high-risk, limited-risk, or minimal-risk, and for Annex III systems where you are claiming the Article 6(3) escape clause, you have documented the reasoning in writing.

- AI literacy program rolled out: Staff operating AI systems have received training that fits their role and the system’s risk profile, with training records retained.

- Governance owners named: You have a standing committee or working group covering legal, security, product, ML and data, and an executive sponsor, with clear ownership across inventory, classification, vendor reviews, incident reporting, and training.
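The Layer 1 items translate naturally into one record per AI system. Here is a minimal sketch in Python, assuming you track the inventory in code or export it from a GRC tool. The class and field names (`AISystemRecord`, `annex_iii_use_case`, `article_6_3_reasoning`) are hypothetical, not terms from the Act or from any product.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"
    IMPORTER = "importer"
    DISTRIBUTOR = "distributor"
    DOWNSTREAM_PROVIDER = "downstream provider"


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"


class Origin(Enum):
    BUILT = "built"
    BOUGHT = "bought"
    EMBEDDED = "embedded"
    INFORMAL = "informal"  # shadow AI adopted without central approval


@dataclass
class AISystemRecord:
    """One inventory row. Field names are illustrative assumptions."""
    name: str
    origin: Origin
    roles: set[Role]
    risk_tier: RiskTier
    owner: str
    annex_iii_use_case: bool = False
    article_6_3_reasoning: str = ""

    def gaps(self) -> list[str]:
        """Return the Layer 1 items this record still fails."""
        problems = []
        if not self.roles:
            problems.append("no role mapped")
        if not self.owner:
            problems.append("no governance owner named")
        # An Annex III use case classified below high-risk needs written reasoning.
        below_high = self.risk_tier in (RiskTier.LIMITED, RiskTier.MINIMAL)
        if self.annex_iii_use_case and below_high and not self.article_6_3_reasoning.strip():
            problems.append("Article 6(3) reasoning not documented")
        return problems
```

Running `gaps()` across the whole inventory gives a quick list of the systems that cannot yet pass the foundation layer. The legal judgment behind each classification still has to come from counsel or your risk committee; the record only checks that it was written down.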

Layer 2: For high-risk systems (if you are the provider)

- Risk management system active: You are running the Article 9 risk management process continuously, not as a one-time exercise.

- Data governance documented: Your training, validation, and testing data meets the Article 10 expectations for relevance, representativeness, accuracy, and completeness.

- Technical documentation maintained: Your Article 11 file covers system architecture, training data lineage, testing results, human oversight mechanisms, and known limitations, version-controlled alongside the system.

- Automated logs enabled: Article 12 logging is in place, with retention periods aligned to regulatory expectations.

- Transparency information prepared: Your Article 13 documentation is written for the deployer audience and explains system capabilities and limitations clearly.

- Human oversight built in: Article 14 mechanisms are addressed at the design level, not bolted on after launch.

- Quality management system active: Article 17 QMS is documented and operational across design, development, testing, and deployment.

- Conformity assessment completed: You have completed the assessment, drawn up the EU declaration of conformity, affixed the CE marking, and registered the system in the EU database.

- Post-market monitoring active: Article 72 obligations are operationalized, with incident categories defined and reporting workflows clear.
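For the logging item, it helps to decide early what one log line looks like. The sketch below is an assumption about a reasonable shape, not a schema the Act prescribes: the function name `build_log_record` and every field are hypothetical, and retention is left to whatever your storage layer enforces.

```python
import json
from datetime import datetime, timezone


def build_log_record(system_id, model_version, event_type, detail, overseer=None):
    """Return one JSON line describing a single event for a high-risk system."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,
        # Recording the model version keeps the trail readable after a model swap.
        "model_version": model_version,
        "event_type": event_type,  # e.g. "inference", "human_override", "incident"
        "detail": detail,
        "overseer": overseer,      # named person who intervened, if any
    }
    return json.dumps(record, sort_keys=True)
```

Writing one JSON object per line keeps the logs easy to ship to whatever store your retention policy points at, and easy to hand to an auditor later.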

Layer 3: For high-risk systems (if you are the deployer)

- Provider instructions followed: You are using the system according to the provider’s documentation, since deviations create liability.

- Human oversight assigned: Named individuals have the authority to intervene when the system is operating incorrectly.

- Monitoring and logging set up: Local logging is in place, with logs retained for the period the law requires.

- Workforce notified: Workers are informed when AI is being used to make decisions about them in the workplace.

- FRIA completed: A Fundamental Rights Impact Assessment is documented before deployment where required (public-sector use, creditworthiness assessment, life and health insurance pricing, biometric categorization).

Layer 4: For SaaS specifically

- Chatbot AI disclosure shipped: Disclosure is built into the UI by default, not as an opt-in.

- Deepfake labeling implemented: Labels are in place where applicable.

- AI-generated content marked: Content in matters of public interest is clearly marked.

- Vendor contracts updated: Contracts include AI Act-specific clauses covering provider documentation, declarations of conformity, audit rights, liability allocation, notification of substantial modifications, and GPAI Code of Practice compliance.

- Cross-border transfer mechanism in place: Your AI vendor participates in the EU-US Data Privacy Framework, or you are using Standard Contractual Clauses or Binding Corporate Rules, with any consent-based derogations documented as exceptional.

- GPAI Code of Practice tracked: You are treating the Code as your specification for what to demand from foundation model providers.

Layer 5: Continuous

- Inventory refreshed quarterly: You are reviewing the AI inventory four times a year rather than annually, because new AI tools enter your organization weekly and a yearly review will miss most of them.

- Classifications re-reviewed on change: Risk classifications are revisited when a system changes materially, when Commission guidance is published, or when Digital Omnibus amendments land.

- Documentation kept current: Technical documentation is updated alongside the system, not after the fact.

- Incident log maintained: The log captures sub-reportable events as well as reportable ones, since evidence of diligence is worth as much as the absence of incidents.

- Regulatory developments monitored: You are tracking the Digital Omnibus, the GPAI Code of Practice finalization, the Commission’s high-risk classification guidelines, harmonized standards as they publish, and Member State-level market surveillance authority designations.

- Internal gap audits run: You are running audits at least annually and before any external review, because the cost of finding a gap yourself is a fraction of finding it during a regulator review.
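The first two Layer 5 items boil down to one rule: review a system when it is overdue or when it has changed. A minimal sketch, assuming a 91-day interval as a stand-in for "quarterly" and a hypothetical `changed_since_review` flag that your change-management process sets:

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Roughly one quarter. This interval is an assumption, not a figure from the Act.
REVIEW_INTERVAL = timedelta(days=91)


@dataclass
class ReviewState:
    system: str
    last_reviewed: date
    # Set when the system changes materially: model swap, new data source, new use.
    changed_since_review: bool = False


def needs_review(state: ReviewState, today: date) -> bool:
    """True when the classification is overdue or the system has changed."""
    overdue = today - state.last_reviewed > REVIEW_INTERVAL
    return overdue or state.changed_since_review
```

The same check can be extended with a flag for new Commission guidance or Digital Omnibus amendments, which should trigger a review of every affected system regardless of date.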

This checklist helps document your state today. It doesn’t, on its own, make you compliant tomorrow. That’s the work of the program. The reason a platform like Sprinto matters here is exactly this: most of these checks are not one-time decisions; they’re continuous states that drift the moment systems change. Layer 5 is where compliance programs quietly fail, and where automation earns its place.

Fines for non-compliance

Let me get the fine tiers out of the way, because they’re important but not the most interesting part.

| Violation | Maximum fine |
|---|---|
| Prohibited practices (Article 5) | €35M or 7% of global annual turnover, whichever is higher |
| Most other violations | €15M or 3% of global annual turnover, whichever is higher |
| Supplying incorrect information to regulators | €7.5M or 1% of global annual turnover, whichever is higher |

SMEs (small and medium enterprises) get modest relief built into the Act. For smaller businesses, the fine is capped at whichever calculation produces the lower amount, rather than the higher one.
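To make the arithmetic concrete, here is a small sketch of the ceiling calculation. It assumes the "whichever is higher" rule applies to all three tiers, with the SME rule flipping it to "whichever is lower". The tier keys and the function name are my own labels, and the result is a statutory maximum, not a prediction of what a regulator would actually impose.

```python
# (fixed cap in EUR, percentage of global annual turnover)
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_violation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(violation: str, global_turnover_eur: int, is_sme: bool = False) -> float:
    """Return the maximum fine for a violation tier, in euros."""
    fixed_cap, pct = FINE_TIERS[violation]
    turnover_cap = global_turnover_eur * pct / 100
    # SMEs are capped at the lower of the two figures, everyone else at the higher.
    if is_sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)
```

For a company with €1 billion in global turnover, a prohibited-practice violation tops out at €70M, because 7% of turnover exceeds the €35M floor. For an SME with €10M in turnover, the ceiling for most other violations is €300,000, because 3% of turnover is the lower figure.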

Now, the more interesting question: who is actually responsible for enforcing these rules? Several actors play a part:

- National market surveillance authorities (many Member States are late in designating these)

- The AI Office at the European Commission, with exclusive jurisdiction over GPAI model providers, starting August 2, 2026

- National courts, for private actions

- Downstream providers can file complaints against GPAI providers

- A scientific panel can alert the AI Office to systemic risks

Enforcement is unlikely to be vigorous at first, since the infrastructure is still being stood up. Treat that as time to prepare, not a green light.

The bigger consequences beyond fines:

- Market withdrawal orders: Regulators can order recall and withdrawal of non-compliant AI systems.

- Loss of EU funding eligibility: Under the Apply AI Strategy.

- Procurement screen-outs: By enterprise buyers who’ve baked AI Act due diligence into their vendor processes.

- Investor diligence friction: When PE and VC shops build AI governance into their deal playbooks.

For most SaaS companies, the fines are a distant risk. The procurement and deal-velocity impact may be more immediate.

“We’ve seen a significant reduction in the complexity of our customers’ initial procurement of our software. Qualitatively, at least half of the volume of security questions is gone thanks to attestations. So far this year, I’ve only had to do six or eight questionnaires about our security. It was six or eight a month previously!” ~ David Mason, Director of Security, Anaconda

Common challenges in EU AI Act compliance

Most AI compliance problems start as visibility problems.

- AI inventory and visibility: You can’t comply with what you can’t see. Shadow AI, the tools employees adopted without central approval, is the most common gap. The resulting risk is what practitioners call “data hemorrhage”: proprietary logic and customer PII getting pasted into unvetted models, gone forever.

- Multi-framework fatigue: You’re probably also navigating GDPR, NIS2, DORA, Colorado AI Act, Texas TRAIGA, NYC Local Law 144, ISO 42001, NIST AI RMF, and SOC 2. More than 1,000 US state AI bills were proposed in 2025 alone, per Orrick. The practical response most enterprises land on is a baseline-plus-delta approach: identify the common requirements across frameworks, build one program, and layer jurisdiction-specific adjustments on top.

- Regulatory flux: Digital Omnibus in November 2025. Parliament’s position in April 2026. Council position in parallel. Trilogue negotiations are now underway. Codes of practice are still being drafted. Standards are still being finalized. The point I keep coming back to: don’t assume you can relax because amendments are coming. Until changes formally pass, the existing rules apply.

- Product development speed versus compliance governance: Most compliance programs assume long review cycles. Most AI products ship in days. That mismatch is where both sides start blaming each other: legal feels ignored, and engineering feels blocked. The fix is designing compliance into the developer’s workflow rather than running it alongside. That means a lightweight intake process for new AI tools (not a six-week review), approval paths that default to fast, and clear guidance engineers can apply without needing to read the Act themselves.

- The velocity gap: This one doesn’t get enough attention. US research teams may be able to launch projects in weeks that take comparable EU teams months, sometimes leading to projects being abandoned. The same dynamic applies to SaaS product velocity. The cost of compliance isn’t fines. It’s time to market. Organizations that treat compliance as pre-staged evidence, vendor approvals, and classification templates, built into the dev environment, significantly compress that gap.

The fix across all five is the same: design compliance into the developer’s workflow rather than running it alongside. Lightweight intake processes, fast-default approval paths, and clear guidance that engineers can apply without reading the Act themselves.
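What a fast-default approval path might look like, as a rough sketch: a handful of yes/no intake questions, with only the risky answers routed to a human. The questions, the route names, and the `IntakeRequest` type are all hypothetical. Your own triggers should come from your classification work.

```python
from dataclasses import dataclass


@dataclass
class IntakeRequest:
    """Answers an engineer gives when proposing a new AI tool or feature."""
    tool: str
    annex_iii_use_case: bool      # e.g. hiring, credit scoring, education
    handles_personal_data: bool
    customer_facing: bool


def route(req: IntakeRequest) -> str:
    """Pick the lightest review path that still covers the risk."""
    if req.annex_iii_use_case:
        # Potentially high-risk: needs a legal judgment before anything ships.
        return "legal-review"
    if req.handles_personal_data or req.customer_facing:
        # GDPR and transparency questions: a shorter security and privacy check.
        return "security-review"
    # Default path is fast: log the tool in the inventory and move on.
    return "auto-approve"
```

The design choice that matters is the last line. Approval is the default, and review is the exception, so engineers only wait when there is a concrete reason to.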

How the EU AI Act aligns with other frameworks

Short answer: ISO 42001, NIST AI RMF, GDPR, and SOC 2 do not make you EU AI Act-compliant by themselves. The long answer is that these frameworks help in specific ways.

- ISO/IEC 42001: It is an international standard for AI management systems. It’s certifiable, globally recognized, and useful. But here’s the catch: ISO 42001 does not give you a presumption of conformity under the EU AI Act. It’s a good governance framework; it’s not a compliance shortcut. The European AI Office has even signaled that an ISO quality-management standard it reviewed didn’t reflect the approach the EU wants.

- NIST AI Risk Management Framework: It is voluntary, US-origin, and globally referenced. Its four core functions, Govern, Map, Measure, and Manage, map reasonably well onto EU AI Act requirements, but at a higher level. It’s excellent scaffolding for the principles layer.

- GDPR: It coexists with the EU AI Act. They don’t supersede each other. Training AI on personal data means both apply. The Digital Omnibus is also amending the GDPR (separately), further complicating matters. You can expect an overlap, not a substitution.

- SOC 2: It has meaningful overlap with AI Act cybersecurity requirements (Article 15). It is not sufficient on its own, because SOC 2 doesn’t address AI-specific risks like bias or explainability, but if you have SOC 2, you’re not starting from zero.

The approach most external counsel recommends, and most sophisticated enterprises already use, is a baseline-plus-delta architecture. Start with principles common across OECD guidelines, NIST, ISO 42001, and the AI Act. Build a single program around them. Then layer jurisdiction-specific overlays on top.
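As a data structure, baseline-plus-delta is just a shared set of controls plus one overlay set per jurisdiction. The sketch below uses made-up control names purely for illustration. It is not a mapping of what any framework actually requires.

```python
# Controls shared across frameworks. Names are illustrative placeholders.
BASELINE = {"ai-inventory", "risk-assessment", "human-oversight", "incident-log"}

# Jurisdiction-specific additions layered on top of the baseline.
OVERLAYS = {
    "eu-ai-act": {"conformity-assessment", "eu-database-registration"},
    "colorado-ai-act": {"impact-assessment"},
}


def required_controls(jurisdictions):
    """Baseline plus the overlay for every jurisdiction you operate in."""
    controls = set(BASELINE)
    for jurisdiction in jurisdictions:
        controls |= OVERLAYS[jurisdiction]
    return controls


def delta(jurisdictions, implemented):
    """Controls still missing, given what the program already covers."""
    return required_controls(jurisdictions) - set(implemented)
```

Entering a new market then means adding one overlay entry and running `delta` against what you already have, rather than standing up a second program.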

How Sprinto helps achieve EU AI Act compliance

I’ve held off talking about Sprinto until now, because I wanted to lay out the problem honestly first. Here’s where our platform earns its place, and where it doesn’t.

Sprinto is built around a specific idea: compliance shouldn’t be a reactive, manual project that your team rebuilds every year. It should run continuously, in the background, autonomously. We call this an Autonomous Trust Platform. What that means practically: the platform detects changes in your environment, determines what’s at risk, and acts, across compliance, vendor risk, AI governance, and more.

For EU AI Act compliance specifically, three things matter:

- Shadow AI detection: Every compliance exercise starts with an inventory. Most companies get the inventory wrong because they miss the AI tools employees adopted informally. Shadow AI is now reshaping vendor risk in subtle ways that most TPRM programs aren’t built to catch. Sprinto detects AI tool use across your organization, maintains a live registry, classifies risk by data sensitivity, and maps your AI footprint to ISO 42001, NIST AI RMF, and the EU AI Act.

- Continuous mapping across frameworks: If you’re already running SOC 2, ISO 27001, or HIPAA on Sprinto, the platform auto-maps existing controls, policies, and evidence to new standards, including the AI Act. That’s the baseline-plus-delta architecture made operational. You’re not rebuilding compliance programs. You’re extending one.

- Autonomous agents that act, not just alert: When a control drifts, most tools send you an email and wait. Sprinto’s agents close gaps, refresh evidence, and route approvals; you approve decisions, and the platform handles execution. For a compliance program that has to run continuously across dozens of systems, this changes the landscape.

Where Sprinto doesn’t help: it won’t tell you whether your system is high-risk under Article 6; that’s a legal judgment that needs a lawyer or an internal risk committee. It won’t draft your technical documentation for you from scratch. It won’t negotiate your vendor contracts. It handles the continuous, repeatable parts of compliance: the parts that burn time and introduce drift.

If you are running SOC 2 or ISO 27001 today, you already have most of the scaffolding the AI Act asks for: visibility, ownership, evidence, and monitoring. The question is whether your current stack can extend cleanly into AI governance, or whether you will have to rebuild.

See your AI footprint mapped to the EU AI Act.

FAQs

What is EU AI Act compliance?

It’s meeting the legal obligations the Act imposes on your business based on your role in the AI value chain (provider, deployer, importer, distributor, downstream provider) and the risk classification of each AI system you build or use. It’s continuous, spanning risk management, documentation, transparency, human oversight, and post-market monitoring.

Does the EU AI Act apply to companies outside the EU?

Yes. The Act has extraterritorial reach. It applies to any business placing AI systems on the EU market, and to any business whose AI output is used in the EU, regardless of where you’re headquartered. If your SaaS serves European customers, you’re likely in scope.

What counts as high-risk AI?

High-risk AI includes systems used in employment, education, credit scoring, law enforcement, migration, critical infrastructure, and AI that functions as a safety component in already-regulated products like medical devices or machinery. Annex III lists the use cases; Annex I covers products under existing EU harmonized legislation. Article 6(3) allows some systems in these contexts to qualify as not-high-risk if their role is narrow enough.

When does the EU AI Act take effect?

In phases. Since February 2, 2025: prohibited practices and AI literacy. Since August 2, 2025: GPAI model obligations. August 2, 2026: high-risk AI provisions, though the Digital Omnibus proposes delaying this to December 2, 2027. August 2, 2027: provisions for AI in products under existing EU harmonized law, potentially shifting to August 2, 2028. The proposed delays are not yet law. The original dates apply until amendments pass.

How should a SaaS company prepare?

Map your role per product feature (are you a provider, a deployer, or a downstream provider for third-party GPAI models?). Inventory every AI feature. Classify each by risk tier. Plan for Article 50 transparency obligations becoming applicable on August 2, 2026. Update vendor contracts with AI Act clauses. Track the GPAI Code of Practice for what to expect from your model providers. Roll out AI literacy training. And centralize the tracking in one place; a compliance platform works well for this.

What are the penalties for non-compliance?

Up to €35M or 7% of global annual turnover for prohibited practices. €15M or 3% for most other violations. €7.5M or 1% for supplying incorrect information. Beyond fines: market withdrawal orders, loss of eligibility for EU funding, procurement screen-outs, and investor diligence friction.

Author

Sucheth

Sucheth is a Content Marketer at Sprinto. He focuses on simplifying topics around compliance, risk, and governance to help companies build stronger, more resilient security programs.