TL;DR

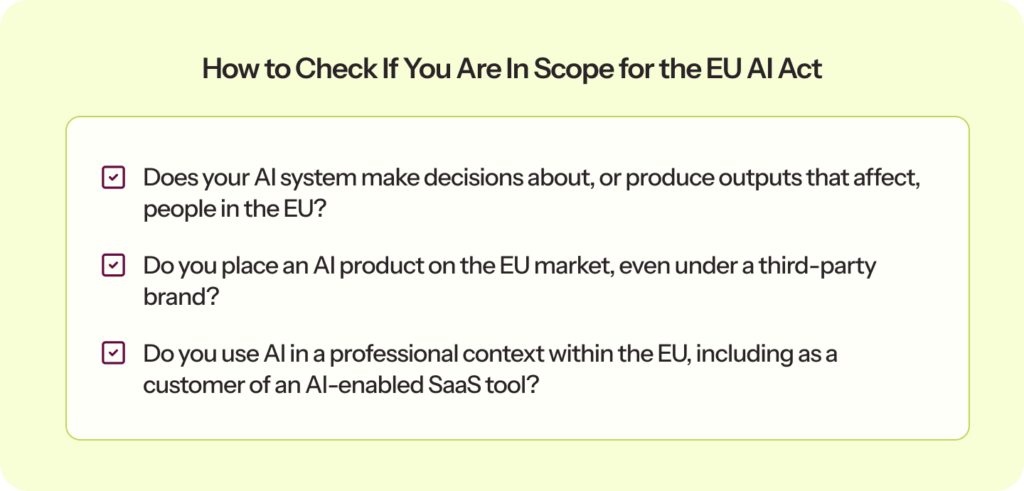

| The EU AI Act applies to your organization if you place AI systems on the EU market, deploy them in the EU, or produce AI outputs that are used in the EU or affect people there, regardless of where you are headquartered. Your system’s reach into EU markets, not your company’s address, is what puts you in scope. |

| Your obligations depend on two things: your system’s risk classification (prohibited, high-risk, limited, or minimal) and your role (provider, deployer, importer, or distributor). Get either one wrong, and your entire compliance program is built on a faulty foundation. |

| The four gaps that sink otherwise solid compliance programs are shadow AI missing from the inventory, incomplete Annex IV documentation, treating readiness as a one-time project, and having no process for tracking regulatory updates. |

| If you already have SOC 2, ISO 27001, or ISO 42001, you are not starting from scratch; 40 to 50% of what the Act requires maps to controls you likely already have. |

If you are building, selling, or deploying AI that operates in, sells into, or affects EU markets, you already know the EU AI Act is in force. What most businesses are still figuring out is whether they are actually ready for it, what specific documents and controls they need, and how to prepare for scrutiny from regulators or enterprise customers before the August 2026 enforcement deadline hits.

I’ve built this checklist for organizations that are somewhere in the middle: aware, but not yet ready. Whether you are a provider building AI systems, a deployer using them in your product or operations, or a SaaS company that is functionally both, it covers every obligation in the order you should tackle them.

Does the EU AI Act apply to you?

Yes, most likely, even if your company is not based in the EU. The Act applies to any organization whose AI systems are used in the EU or whose outputs affect EU residents, regardless of where you are headquartered. A US SaaS company selling an AI hiring tool to a German enterprise is in scope. A Singapore fintech whose credit model processes French customer applications is in scope. Your company’s location does not determine applicability. Your AI system’s reach does.

EU AI Act vs ISO 42001: Do you need both?

In the last three months alone, our in-house experts have spoken to over 700 organizations, and this is one of the first questions that comes up in almost every conversation.

The short answer: ISO 42001 gives you the governance management system. The EU AI Act is the law. They are complementary, not interchangeable.

ISO/IEC 42001 is a voluntary international standard for AI management systems. It gives your organization a certifiable framework for governing AI responsibly. Getting certified demonstrates to customers and regulators that your AI practices are structured and audited. It is sector-agnostic and applies globally.

The EU AI Act is binding legislation, and fines scale with the violation, reaching up to €35 million or 7% of global annual turnover for prohibited practices. It imposes requirements that ISO 42001 does not cover: log retention of at least six months, specific Annex IV technical documentation, conformity assessments, CE marking, and EU database registration for high-risk systems.

The most practical way to think about it: ISO 42001 asks, “Do you govern AI responsibly?” The EU AI Act asks, “Is this specific system legally compliant?” There is roughly 40-50% overlap between them in areas such as risk management, data governance, transparency, and human oversight. Organizations with existing ISO 42001 certification can accelerate their EU AI Act compliance work by roughly 30-40%, but must still close specific gaps in conformity assessments, EU database registration, and post-market surveillance.

The EU AI Act compliance checklist

Before you dive in, build your AI inventory. List every AI system your organization builds, uses, or procures, including tools employees use informally. Everything in this checklist depends on knowing what you actually have.
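As a rough illustration of what an inventory entry can capture, here is a minimal Python sketch. The field names (`owner`, `source`, `approved`) and the example systems are hypothetical, not something the Act prescribes; adapt them to whatever your asset register already tracks.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    name: str
    owner: str              # team accountable for the system (hypothetical field)
    source: str             # "built", "procured", or "informal" (hypothetical labels)
    approved: bool = False  # has the tool gone through formal approval?


def unapproved(inventory: list[AISystem]) -> list[str]:
    """Return the names of systems in use that were never formally approved."""
    return [system.name for system in inventory if not system.approved]


# Illustrative entries only.
inventory = [
    AISystem("resume-screener", "HR", "procured", approved=True),
    AISystem("support-chatbot", "CX", "built", approved=True),
    AISystem("meeting-notes-assistant", "Sales", "informal"),
]

print(unapproved(inventory))
```

Anything this check surfaces is a candidate for the risk classification step that follows, not automatically a tool to ban.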

| Already have SOC 2, ISO 27001, or ISO 42001 in place? You are not starting from scratch. See how Sprinto maps your existing compliance work to EU AI Act requirements automatically. |

1. Understand your risk level

Your obligations under the EU AI Act start with this classification. Get it wrong and everything else is built on a shaky foundation.

- Prohibited (banned since February 2025): Social scoring, subliminal manipulation, predictive policing based solely on profiling, and real-time remote biometric identification in publicly accessible spaces for law enforcement (outside narrow exceptions). If any system falls here, it needs to come down now.

- High Risk (enforcement August 2026): AI in eight Annex III categories: biometrics, critical infrastructure, education, employment, essential services (credit, insurance), law enforcement, migration, and administration of justice. Full compliance obligations apply.

- Limited Risk: Transparency obligations only. Chatbots must disclose that they are AI. AI-generated content must be labeled.

- Minimal Risk: No mandatory obligations. Most productivity tools, spam filters, and recommendation engines fall into this category.

Once you have classified risk across your own systems, extend the same exercise to every third-party vendor with AI-enabled products, including your LLM providers.
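The four tiers can be sketched as a simple first-pass triage. This is a simplified illustration, not legal classification logic: the tag names are hypothetical labels for the practices and Annex III areas listed above, and a real determination needs review against the text of the Act.

```python
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical tags mirroring the categories named in this checklist.
PROHIBITED_PRACTICES = {
    "social_scoring", "subliminal_manipulation",
    "profiling_only_predictive_policing", "realtime_public_biometric_id",
}
ANNEX_III_AREAS = {
    "biometrics", "critical_infrastructure", "education", "employment",
    "essential_services", "law_enforcement", "migration",
    "administration_of_justice",
}
TRANSPARENCY_TRIGGERS = {"chatbot", "generated_content"}


def classify(tags: set[str]) -> RiskTier:
    """Return the strictest tier that any of the system's tags falls into."""
    if tags & PROHIBITED_PRACTICES:
        return RiskTier.PROHIBITED
    if tags & ANNEX_III_AREAS:
        return RiskTier.HIGH
    if tags & TRANSPARENCY_TRIGGERS:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


# An AI hiring tool with a chat interface lands in the stricter tier.
print(classify({"employment", "chatbot"}).value)
```

Checking tiers from strictest to least strict means a system that touches several categories is always held to the highest applicable bar.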

2. Know your role and meet your obligations

Your role under the Act, whether you are a provider, deployer, importer, or distributor, determines what you are specifically required to do.

| 💡Not sure which role applies to you? Provider: You build or develop the AI system. Deployer: You use a third-party AI system in your operations. Importer: You bring an AI system into the EU market from outside the EU. Distributor: You resell or make an AI system available without modifying it. |

For providers of high-risk AI systems:

- Establish a risk management system covering the full AI lifecycle.

- Prepare Annex IV technical documentation before placing the system on the market.

- Build human oversight into the system so a human can monitor, intervene, and override.

- Complete a conformity assessment, sign an EU Declaration of Conformity, and affix CE marking.

- Register the system in the EU high-risk AI database. (EU AI Act Article 71, Regulation 2024/1689)

For deployers of high-risk AI systems:

- Verify that the system is registered in the EU high-risk AI database before use.

- Conduct a Fundamental Rights Impact Assessment where required.

- Assign human oversight to staff with the competence and authority to intervene.

- Inform affected individuals that an AI system is involved in decisions about them.

For all organizations:

- Implement AI literacy training for all staff who interact professionally with AI. This has been a legal requirement since February 2025. (EU AI Act Article 4)
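If you hold more than one role, as many SaaS companies do, the obligations stack. A minimal sketch of that lookup, using only the items listed above, might look like this. The dictionary keys and function name are hypothetical, and importer and distributor duties are not enumerated here because this checklist does not list them.

```python
# Obligations for high-risk systems, summarized from the checklist above.
HIGH_RISK_OBLIGATIONS = {
    "provider": [
        "Establish a lifecycle risk management system",
        "Prepare Annex IV technical documentation",
        "Build in human oversight",
        "Complete conformity assessment, Declaration of Conformity, and CE marking",
        "Register the system in the EU high-risk AI database",
    ],
    "deployer": [
        "Verify EU database registration before use",
        "Conduct a Fundamental Rights Impact Assessment where required",
        "Assign human oversight to competent staff",
        "Inform affected individuals that AI is involved",
    ],
}

# Applies to every organization regardless of role or risk tier.
UNIVERSAL = ["Provide AI literacy training (Article 4)"]


def obligations(roles: set[str], high_risk: bool) -> list[str]:
    """Combine universal duties with the high-risk duties for each role held."""
    items = list(UNIVERSAL)
    if high_risk:
        for role in sorted(roles):
            items.extend(HIGH_RISK_OBLIGATIONS.get(role, []))
    return items


# A SaaS company that is functionally both provider and deployer.
for item in obligations({"provider", "deployer"}, high_risk=True):
    print("-", item)
```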

3. Get your documentation and management systems in order

Documentation is what you hand a regulator or enterprise customer when they ask for proof. It is also the area where most businesses have the biggest gaps.

If you already have ISO 27001, you have a head start. Your Information Security Management System (ISMS) covers information security risk management, access controls, and data integrity, all of which the EU AI Act requires for high-risk systems. Extend your existing ISMS scope to explicitly cover AI systems and document how those controls map to Act requirements. Sprinto’s ISO 27001 guide can help you build or expand your ISMS.

If you already have ISO 42001, you are further ahead. Your AI management system already addresses risk governance, documentation practices, and oversight structures. You still need to close specific gaps: Annex IV technical documentation, conformity assessments, CE marking, and EU database registration. But the governance backbone is there. See how ISO 42001 maps to the EU AI Act.

If you are starting fresh:

- Prepare Annex IV technical documentation for every high-risk system: design, training methodology, performance metrics, testing results, and known limitations.

- Retain operational logs for at least six months.

- Keep a signed EU Declaration of Conformity on file for every high-risk system.

- Establish an AI management system (AIMS). ISO 42001 is the internationally recognized standard for this and the most efficient foundation for EU AI Act compliance.
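The log retention item is easy to get wrong in automated cleanup jobs. Here is a minimal sketch of a purge guard, assuming six months is approximated as 183 days; that figure and the function name are assumptions for illustration, and your own retention policy or sector rules may require longer.

```python
from datetime import date, timedelta

# Assumption: "at least six months" approximated as 183 days.
MIN_RETENTION = timedelta(days=183)


def can_purge(log_date: date, today: date,
              retention: timedelta = MIN_RETENTION) -> bool:
    """A log may be purged only once the full retention period has elapsed."""
    return today - log_date >= retention


log_written = date(2026, 1, 1)
print(can_purge(log_written, date(2026, 3, 1)))  # still inside the retention window
print(can_purge(log_written, date(2026, 8, 1)))  # retention period has elapsed
```

Treating the period as a floor rather than a target keeps the check safe if you later decide to retain logs for longer.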

4. Revisit your data governance practices

The Act cares about what data goes into your AI systems, how it is handled, and whether the people it affects have meaningful transparency.

- Document how your training, validation, and testing datasets are relevant, sufficiently representative, and, to the best extent possible, free of errors and complete.

- Examine datasets for biases that could affect fundamental rights.

- Put formal Data Processing Agreements in place with AI vendors, explicitly prohibiting the use of your data for model training.

- Identify where customer data is used in AI training, and give customers a meaningful opt-out option.
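The vendor agreement item above lends itself to a quick gap check. The sketch below assumes you track two yes/no facts per vendor; the `Vendor` fields and the example vendor names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Vendor:
    name: str
    has_dpa: bool                 # a Data Processing Agreement is on file
    dpa_prohibits_training: bool  # the DPA bars training models on your data


def dpa_gaps(vendors: list[Vendor]) -> dict[str, str]:
    """Map each vendor with a gap to a short description of that gap."""
    gaps = {}
    for vendor in vendors:
        if not vendor.has_dpa:
            gaps[vendor.name] = "no DPA on file"
        elif not vendor.dpa_prohibits_training:
            gaps[vendor.name] = "DPA does not prohibit model training on your data"
    return gaps


# Illustrative vendors only.
vendors = [
    Vendor("llm-api-provider", has_dpa=True, dpa_prohibits_training=True),
    Vendor("transcription-tool", has_dpa=True, dpa_prohibits_training=False),
    Vendor("browser-ai-plugin", has_dpa=False, dpa_prohibits_training=False),
]

for name, gap in dpa_gaps(vendors).items():
    print(f"{name}: {gap}")
```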

For financial institutions, data governance under the EU AI Act overlaps significantly with DORA obligations around ICT risk management and third-party oversight. If you are already DORA compliant, map your existing vendor risk controls and operational resilience documentation to your EU AI Act data governance requirements rather than building them separately. Read more about DORA compliance.

5. Build in transparency

Transparency is both a specific legal obligation for several AI use cases and an increasingly strong signal to enterprise buyers.

- Where a system influences decisions about individuals, inform those individuals that AI is involved.

- Ensure chatbots and AI-generated outputs clearly identify themselves as AI.

- Make product documentation clear on capabilities, limitations, and potential risks.

- Set up a way to communicate your compliance posture to customers. Sprinto’s Trust Center lets you share compliance credentials publicly or in gated reviews, cutting down repetitive security questionnaires from enterprise buyers.

6. Make compliance continuous

The EU AI Act does not have a finish line. Guidelines are still being finalized. Delegated acts are in progress. Each significant update to an AI system can trigger a new conformity obligation. Point-in-time compliance will be out of date faster than most teams realize.

- Do you have automated monitoring in place to detect when controls drift or evidence goes stale?

- Is there a named person responsible for tracking EU AI Act updates and translating them into program changes?

- Are risk assessments scheduled for review when AI systems are significantly updated or when regulations change?

- Have you mapped your existing compliance frameworks to EU AI Act requirements to avoid duplicating effort? Sprinto’s Infinite Frameworks engine does this mapping automatically, so adding a new framework does not mean starting over.
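To make the review triggers concrete, here is a minimal sketch that flags a risk assessment for review when the system has changed or the assessment has aged out. The 365-day interval, the field names, and the example system are assumptions for illustration; the Act does not set this interval.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class RiskAssessment:
    system: str
    assessed_version: str  # system version the assessment covered
    assessed_on: date


def needs_review(assessment: RiskAssessment, current_version: str,
                 today: date, max_age_days: int = 365) -> list[str]:
    """Return the reasons a review is due; an empty list means none is due."""
    reasons = []
    if current_version != assessment.assessed_version:
        reasons.append("system updated since last assessment")
    if (today - assessment.assessed_on).days > max_age_days:
        reasons.append("assessment older than review interval")
    return reasons


assessment = RiskAssessment("credit-model", "1.2", date(2025, 1, 10))
print(needs_review(assessment, "1.3", date(2025, 6, 1)))
```

Returning reasons instead of a single flag gives the named owner something specific to act on. A regulatory change would be a third trigger, which this sketch leaves out because it depends on how your team tracks updates.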

Common gaps organizations miss when using an EU AI Act checklist

Even organizations with a mature compliance program tend to miss the same things. Here are the four most common gaps worth checking before you consider your program solid.

- Shadow AI is not in the inventory: Your checklist is only as complete as your AI inventory. If employees are using AI tools that were never formally approved or cataloged, those systems are outside your risk classification, not covered by your policies, and invisible to your compliance function. When a regulator asks for a complete list of AI systems in use, that gap is immediately visible. The fix is active discovery, not blanket bans.

- Technical documentation is incomplete: Annex IV documentation is the most commonly cited gap in EU AI Act readiness assessments. Many organizations have AI systems in production with no architecture descriptions, no documentation of training data, and no performance benchmarks. Systems that were built before the Act was on anyone’s radar were not designed with this in mind. Retrofitting documentation is significantly harder than building it into your development process from the start. If you have high-risk systems in production today with incomplete documentation, this is your most urgent gap.

- Readiness is treated as a one-time project: The EU AI Act lacks a finish line. The Commission is actively issuing guidelines and delegated acts. The AI Office is publishing and revising GPAI codes of practice. National enforcement authorities are operationalizing their penalty frameworks. Each significant modification to an AI system can trigger a new conformity obligation. Compliance programs built as static documents become outdated faster than most teams realize.

- There is no process for monitoring regulatory updates: The Commission missed its own February 2026 deadline for publishing practical implementation guidelines on high-risk classification. Guidelines are still being finalized. If no one in your organization is actively tracking these developments and translating them into compliance program updates, you are operating on requirements that may already be superseded.

How Sprinto helps you get EU AI Act audit-ready faster

This checklist is a starting point, not a system. Working through it tells you where you stand; it doesn’t keep you there.

That’s the gap Sprinto is built for.

Sprinto is the world’s first Autonomous Trust Platform for compliance, and it runs continuously in the background. The platform detects changes in your environment, determines what’s at risk, and acts across compliance, vendor risk, AI governance, and more. Here’s how Sprinto helps:

- Shadow AI detection and live AI registry: Sprinto detects AI tool adoption across your organization, maintains a continuously updated registry, and maps your full AI footprint to the EU AI Act, ISO 42001, and the NIST AI RMF.

- Framework alignment without manual mapping: Sprinto’s Infinite Frameworks engine continuously monitors regulatory updates, identifies which apply to your organization, and automatically maps changes to your existing controls. Gap assessments that used to take months now take days.

- Continuous evidence collection: Evidence for your audit checklist is collected and validated in the background. When a regulator or enterprise customer asks for your compliance documentation, it is already assembled.

- Vendor risk management for AI-embedded tools: Your governance perimeter does not stop at your own systems. Sprinto continuously monitors third-party vendors, including SaaS tools with embedded AI, flagging changes in their security posture, data handling practices, and compliance status. If a vendor quietly adds an AI feature that touches your customer data, Sprinto catches it before your risk team does.

- ISO 42001 and EU AI Act, together: If you are pursuing ISO 42001 alongside EU AI Act compliance, Sprinto supports both from the same platform, so you capture the 40 to 50% framework overlap without building duplicate programs.

Don’t let the deadline sneak up on you. Book a Sprinto demo and find out how quickly you can get audit-ready.

Author

Radhika Sarraf

Radhika Sarraf is a content marketer at Sprinto, where she explores the world of cybersecurity and compliance through storytelling and strategy. With a background in B2B SaaS, she thrives on turning intricate concepts into content that educates, engages, and inspires. When she’s not decoding the nuances of GRC, you’ll likely find her experimenting in the kitchen, planning her next travel adventure, or discovering hidden gems in a new city.