If you’re part of a GRC team in a 1,000+ employee organization, there’s a high chance that Vendor Risk no longer feels manageable. This is because traditional vendor management was built around centralized adoption, control, and compliance, while today’s vendor ecosystem is defined by constant change, deep interconnectivity, and decentralized adoption.

Vendors update their products weekly, integrations are spun up without central oversight, and AI tools are introduced into workflows faster than governance teams can evaluate them. In this environment, the question is no longer whether a vendor passed an assessment. The real question is: What is your current exposure to that vendor?

Can you still trust them?

Most Vendor Risk stacks cannot answer that question. At least not for vendors in rapidly evolving categories.

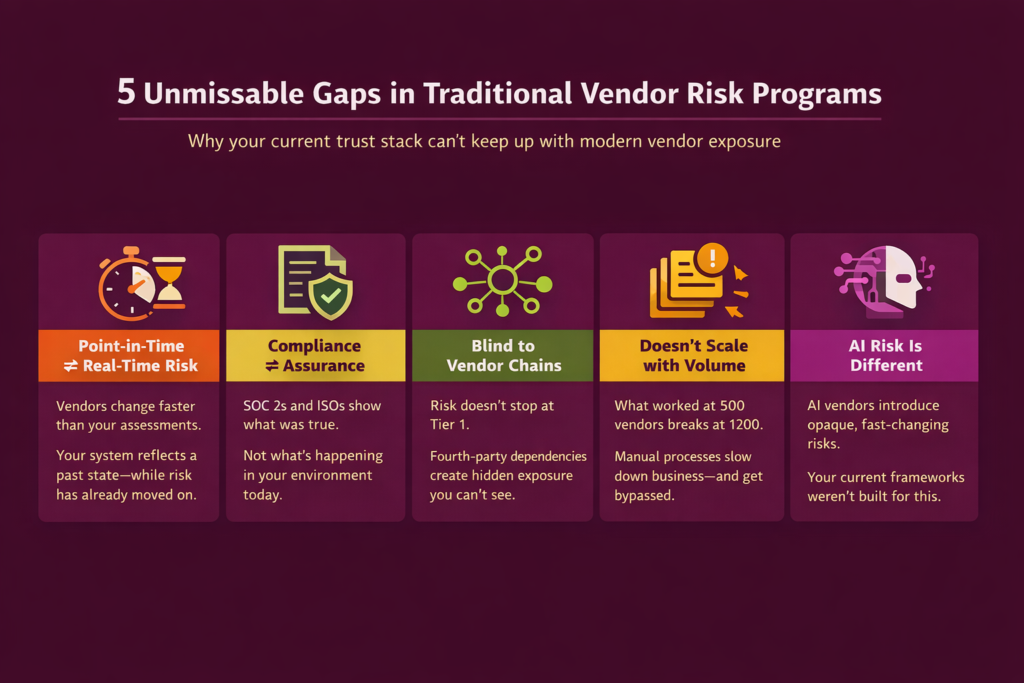

5 unmissable gaps in the traditional Vendor Risk approach

A big reason Vendor Risk programs are struggling today is that they are still rooted in point-in-time assessments. Security questionnaires, annual reviews, and periodic audits were sufficient when Vendor Risk moved slowly. Today, they tend to create a false sense of assurance.

Here’s why:

Reason #1: AI vendors introduce a fundamentally different risk model

The rise of AI vendors introduces an entirely new dimension of complexity. Unlike traditional SaaS vendors, AI tools pose unique risks related to data leakage, model behavior, and opaque processing layers. Inputs may be retained or reused in ways that are not fully transparent, model outputs can be unpredictable, and dependencies often extend to underlying model providers that are not directly visible. Existing frameworks and questionnaires are not equipped to assess these nuances.

What this means: Organizations are forced into a binary decision: either block AI adoption entirely or move forward with a limited understanding of the risks involved. Neither approach is sustainable in the long term.

Reason #2: Point-in-time assessments in a real-time risk environment

A vendor that was “approved” three months ago may have since introduced new subprocessors, changed its infrastructure, or experienced an incident that hasn’t yet surfaced in formal reports, because the next periodic review isn’t due yet. Increasingly, vendors also roll out AI features post-approval, introducing new data flows, model dependencies, and training practices that were never part of the original evaluation. Models can change frequently, inputs may be retained or reused, and the way data is processed or learned from can evolve without explicit visibility.

What this means: Your system still reflects a past state, while your actual risk posture has already shifted. This lag between assessment and reality is one of the most critical gaps in Vendor Risk programs in 2026.

Reason #3: Compliance artifacts mistaken for real assurance

The third reason your trust stack needs to evolve is an over-reliance on compliance artifacts that act as proxies for trust. SOC 2 reports, ISO certifications, and policy documents continue to play an important role, but they do not provide real-time assurance. They confirm that controls existed and were tested at a specific point in time, not that those controls are functioning effectively today, given how the vendor is currently being used.

What this means: As vendor environments become more dynamic, the gap between evidence and actual assurance continues to widen. GRC teams often find themselves “covered on paper” but exposed in practice.

Reason #4: No visibility beyond Tier 1 vendors

Another structural limitation is the lack of visibility beyond direct vendors. Most trust stacks are built to assess Tier 1 relationships, but risk does not stop there. Your vendors depend on their own vendors, like cloud providers, AI model providers, subprocessors, and open-source components.

What this means: These fourth-party dependencies can introduce concentration risks and cascading failure scenarios that are largely invisible to traditional systems. When an incident occurs deep in the supply chain, its impact is felt across your organization, even if you never had direct visibility into that layer. Without a supply-chain-aware view of vendor relationships, your understanding of risk remains incomplete.
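To make the concentration point concrete, here is a minimal Python sketch of a supply-chain-aware view. It assumes you already hold a dependency map for your vendors; the vendor names, the `DEPENDENCIES` structure, and both helper functions are hypothetical and only illustrate the idea.

```python
from collections import defaultdict

# Hypothetical dependency map: vendor -> providers it relies on.
# Every name here is made up for illustration.
DEPENDENCIES = {
    "crm-tool": ["cloud-a", "llm-provider-x"],
    "support-desk": ["llm-provider-x"],
    "payroll-app": ["cloud-b"],
    "llm-provider-x": ["cloud-a"],
}
TIER1 = ["crm-tool", "support-desk", "payroll-app"]


def downstream(vendor, deps, seen=None):
    """Return every provider reachable from `vendor`, at any depth."""
    seen = set() if seen is None else seen
    for provider in deps.get(vendor, []):
        if provider not in seen:  # also guards against cycles
            seen.add(provider)
            downstream(provider, deps, seen)
    return seen


def concentration(tier1, deps):
    """Map each downstream provider to the Tier 1 vendors that depend on it."""
    exposure = defaultdict(set)
    for vendor in tier1:
        for provider in downstream(vendor, deps):
            exposure[provider].add(vendor)
    return dict(exposure)


exposure = concentration(TIER1, DEPENDENCIES)
for provider, vendors in sorted(exposure.items()):
    print(provider, "<-", sorted(vendors))
```

In this toy data, `support-desk` never lists `cloud-a` directly, yet it inherits that dependency through `llm-provider-x`. A provider with many Tier 1 vendors behind it is a candidate concentration risk.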

Reason #5: Processes that don’t scale with vendor volume

Scale further exposes these cracks. Processes that work for 500 vendors begin to break down at 1,200. Questionnaires pile up, reviews slow down, exceptions become harder to track, and ownership gets fragmented. GRC teams, instead of enabling the business, become bottlenecks that teams try to work around.

What this means: This often leads to the growth of shadow IT, where tools are adopted without formal onboarding or review. By the time a vendor enters the official process, data has already been shared, integrations are already live, and risk is already present. The trust stack, in these cases, is not preventing exposure—it is merely documenting it after the fact.

> The uncomfortable truth is that many GRC teams are operating with systems that were never designed for the current landscape. You are being asked to manage dynamic, evolving risk using static, point-in-time tools.

What does an ideal trust OS that addresses dynamic Vendor Risk look like?

A modern trust Operating System moves from periodic reviews to continuous visibility. But that’s not all: signals about vendor posture are not only monitored in real time; meaningful changes also trigger action.

A trust OS that materially addresses constantly evolving Vendor Risk also incorporates context. It evaluates risk based on how a vendor is actually used within your organization: what data it touches, what systems it integrates with, and what impact a failure would have.
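As a rough illustration of context-based evaluation, the Python sketch below scores the same vendor differently depending on how it is used. The three inputs mirror the factors named above; the weights, the `VendorUsage` fields, and the data classes are assumptions for illustration, not a recommended scoring model.

```python
from dataclasses import dataclass

# Illustrative weights only; a real program would calibrate these itself.
DATA_SENSITIVITY = {"public": 1, "internal": 2, "confidential": 3, "regulated": 4}


@dataclass
class VendorUsage:
    name: str
    data_class: str          # most sensitive data the vendor touches
    integrations: int        # number of internal systems it connects to
    business_critical: bool  # would a failure halt a core process?


def contextual_score(usage: VendorUsage) -> int:
    """Combine the three context factors into a rough score from 1 to 10."""
    score = DATA_SENSITIVITY[usage.data_class]
    score += min(usage.integrations, 3)  # cap so one factor cannot dominate
    score += 3 if usage.business_critical else 0
    return score


# Same hypothetical product, two very different usage contexts.
marketing_use = VendorUsage("notes-app", "public", 0, False)
finance_use = VendorUsage("notes-app", "regulated", 2, True)
print(contextual_score(marketing_use), contextual_score(finance_use))
```

The point of the sketch is the shape of the input rather than the arithmetic: the score is driven by your usage of the vendor, not by the vendor's questionnaire answers alone.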

It is also network-aware, mapping dependencies across vendor chains to surface hidden risks and potential points of failure.

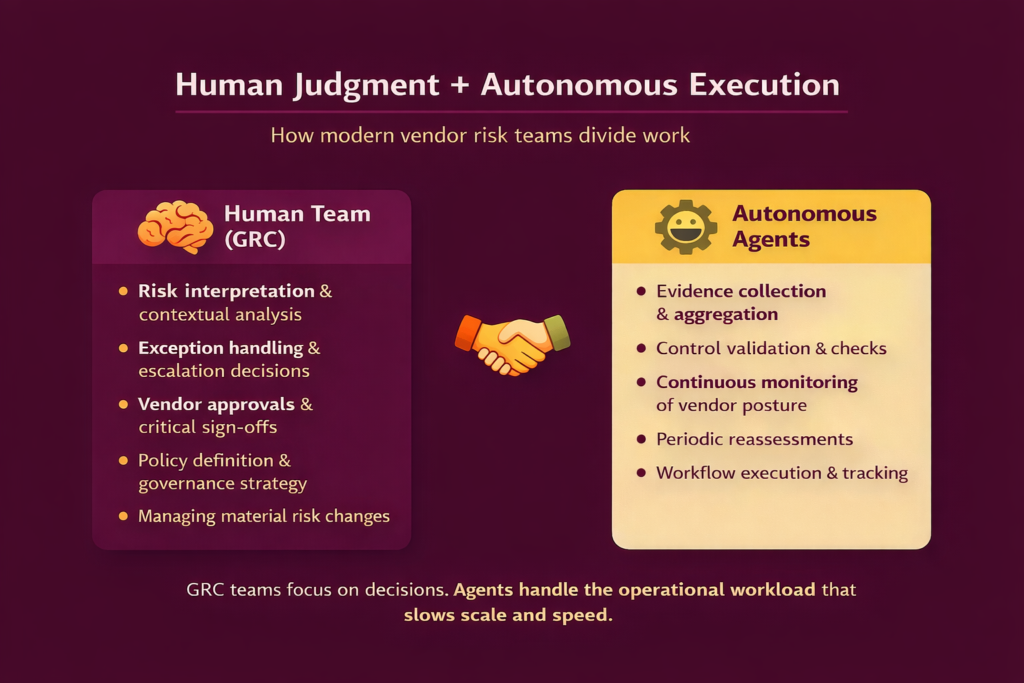

If all of that sounds impossible, it’s because you’re trying to match AI speed with manual mechanisms. To keep up with an AI-fuelled rate of change, you will need AI-fuelled workflows, with some of the workload picked up by autonomous agents.

Parts of the workflow that can be safely offloaded to autonomous agents include evidence collection, control validation, and continuous reassessments. Human intervention, on the other hand, should be reserved for situations that actually require context, such as exceptions, material risk changes, and critical approval decisions.
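One way to picture this split is as a simple routing rule. The Python sketch below is an assumption-laden illustration: the task labels and the `route` function are hypothetical, and the task categories are taken directly from the paragraph above.

```python
from enum import Enum


class Route(Enum):
    AGENT = "agent"
    HUMAN = "human"


# Hypothetical labels for the work the text describes as safe to offload.
AGENT_TASKS = {"evidence_collection", "control_validation", "reassessment"}


def route(task_type: str) -> Route:
    """Send routine work to agents; everything else goes to a person."""
    if task_type in AGENT_TASKS:
        return Route.AGENT
    # Exceptions, material risk changes, critical approvals, and any
    # unrecognized task type all fall through to human review.
    return Route.HUMAN


for task in ["evidence_collection", "exception", "critical_approval"]:
    print(task, "->", route(task).value)
```

The design choice worth noting is the default: anything the rule does not recognize lands with a human, so new or ambiguous work is never automated by accident.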

In practice, this means GRC teams move from chasing evidence to making decisions. Agents take on the operational burden. They pick up what historically burned teams out, slowed execution, and made it nearly impossible to scale Vendor Risk programs and keep up with the rate of change in today’s AI-driven environment.

All the reasons above underscore a deeper issue: most trust stacks are compliance-led rather than exposure-led. They are designed to answer questions like “Are we compliant?” or “Do we have the necessary documentation?” rather than “Where are we exposed right now?” and “How is that exposure changing over time?” This distinction matters. In a dynamic environment, you need to continuously observe, understand, and respond to evolving risks.

Takeaway: Support human effort with autonomous systems

The real question is whether your current trust stack allows you to see, understand, and respond to vendor exposure as it evolves.

If it does not, it is no surprise that it isn’t ready to mitigate today’s Vendor Risk. It is no longer aligned with the reality it is meant to govern. Catching up means shifting to an autonomous trust system that handles execution at scale, while human effort is reserved for high-value work—interpreting risk, managing exceptions, and making informed decisions.

This is where an autonomous approach to TPRM changes the model.

Instead of relying on static workflows and renewal-driven reviews, vendors are continuously discovered, risk-scored, and assessed as their posture changes. Reassessments are no longer tied to annual cycles—they are triggered by real signals, real changes, and real exposure.
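The difference between calendar-driven and signal-driven reassessment can be sketched in a few lines of Python. This is a generic illustration, not a description of any product's implementation; the signal types, the `Signal` class, and the sample data are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical signal types treated as material changes.
MATERIAL_SIGNALS = {
    "new_subprocessor",
    "security_incident",
    "new_ai_feature",
    "infrastructure_change",
}


@dataclass
class Signal:
    vendor: str
    kind: str
    observed_on: date


def vendors_to_reassess(signals, last_assessed):
    """Return vendors with a material signal newer than their last assessment."""
    due = set()
    for s in signals:
        if s.kind not in MATERIAL_SIGNALS:
            continue
        assessed = last_assessed.get(s.vendor)
        # A vendor with no assessment on record (e.g. shadow IT) is always due.
        if assessed is None or s.observed_on > assessed:
            due.add(s.vendor)
    return due


last_assessed = {"crm-tool": date(2025, 1, 10), "payroll-app": date(2025, 6, 1)}
signals = [
    Signal("crm-tool", "new_ai_feature", date(2025, 3, 2)),
    Signal("payroll-app", "new_subprocessor", date(2025, 5, 20)),
    Signal("payroll-app", "marketing_update", date(2025, 7, 1)),
    Signal("unknown-tool", "new_subprocessor", date(2025, 7, 3)),
]
print(sorted(vendors_to_reassess(signals, last_assessed)))
```

Here `payroll-app` is not flagged: its only material signal predates the last assessment, and the later signal is not material. `unknown-tool` is flagged because it was never assessed at all.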

At Sprinto, this is what our autonomous TPRM layer is designed to do: ensure no vendor goes undiscovered, and that every vendor is continuously monitored, evaluated, and kept current, without adding to the operational burden on GRC teams.

The result: a Vendor Risk program that keeps up with change.

Author

Raynah

Raynah is a content strategist at Sprinto, where she crafts stories that simplify compliance for modern businesses. Over the past two years, she’s worked across formats and functions to make security and compliance feel a little less complicated and a little more business-aligned.