TL;DR

| Enterprise AI Governance is the system of policies, controls, and accountability structures that lets large organizations use AI responsibly, at scale, without grinding innovation to a halt. |

| At enterprise scale, governance is far more complex than compliance. You are managing hundreds of AI systems, dozens of vendors, multiple geographies, and a regulatory landscape that is rewriting itself in real time. |

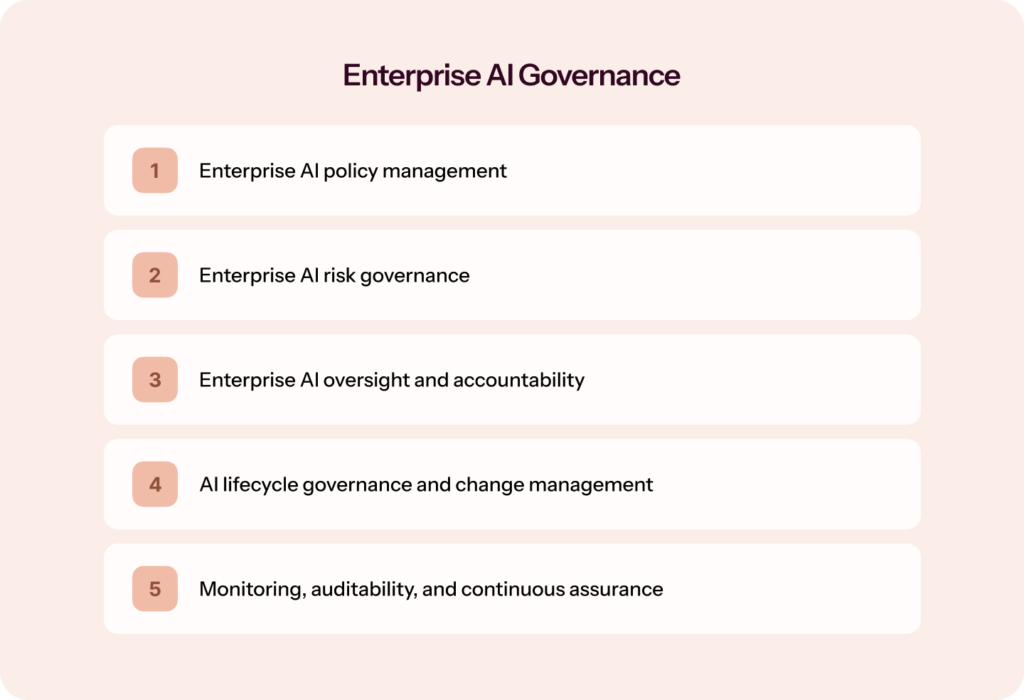

| A mature governance framework covers five pillars: policy management, risk governance, oversight and accountability, AI lifecycle management, and continuous monitoring. |

| With the EU AI Act enforcing fines of up to 7% of global turnover and shadow AI quietly spreading across every business unit, governance is no longer a nice-to-have. It is a board-level strategic requirement. |

If you’re reading this, you already know AI is deeply embedded in your organization — and probably in every vendor your organization depends on. The question you’re sitting with isn’t whether to govern it. It’s how to do it before something slips out of control.

In this blog, you will learn what enterprise AI Governance actually looks like in practice: the frameworks, policies, risk structures, oversight models, and a step-by-step approach to building one that scales.

Why enterprise AI Governance is not optional anymore

Enterprise AI Governance is no longer optional because the cost of not having it is now measurable in regulatory fines, financial losses, and board-level reputational risk. Organizations that govern AI from the start spend significantly less on remediation than those that react after something goes wrong.

Most enterprises understand, at some level, that they need AI Governance. What often gets underestimated is the urgency and the scale of the consequences of not having it.

AI adoption has accelerated faster than governance structures have been built to contain it. According to the IAPP’s 2025 AI Governance Profession Report, 77% of organizations are actively developing AI Governance programs, with 47% ranking it among their top five strategic priorities. But developing a program and having one that works are very different things.

According to Sprinto’s CISO Pulse Check Report 2026, nearly 30% of security leaders say they feel less prepared to handle AI-related risks than risks in other areas of security, and two out of three organizations take longer than a week to implement controls or policy changes after identifying an AI-related risk.

That gap matters enormously right now, for four connected reasons.

- Generative AI, copilots, and autonomous agents have changed what needs governing: Early AI Governance conversations were largely about predictive models used in narrow applications. Today, your employees have generative AI embedded in every productivity tool they use. They also have access to agentic AI systems that can take autonomous actions (browsing the web, sending emails, executing transactions) without explicit human instruction at each step. These systems introduce governance challenges that traditional IT controls were never designed to handle.

- The business risk of ungoverned AI is concrete and significant: Survey data from 2024 shows that 99% of organizations have experienced financial losses from AI-related risks, with average losses of $4.4 million per company. The most commonly cited causes are non-compliance with regulations (57%) and biased outputs (53%). Beyond direct costs, organizations that deploy AI without governance structures spend an average of 2.5 times more on retroactive compliance and remediation than those that govern from the start.

- The regulatory environment has fundamentally shifted: The EU AI Act, adopted in 2024, is the world’s first comprehensive AI regulation. Its obligations phase in from 2025, with most applying from August 2026 and some high-risk requirements extending into 2027. It introduces a risk-based classification system. For the most serious violations, fines reach €35 million or 7% of global annual turnover, whichever is higher. US state and local laws are proliferating fast: Colorado, Illinois, New York City, and California have all introduced AI-specific requirements. ISO/IEC 42001, the international standard for AI management systems, is becoming the benchmark against which enterprises are audited. According to a 2025 survey, 76% of organizations plan to adopt ISO 42001 as their AI Governance backbone.

- Governance is now a board-level concern: AI risk disclosure has surged among major companies. 72% of S&P 500 companies disclosed at least one material AI risk in their 2025 annual filings, up from just 12% in 2023. Reputational risk is now the top AI concern among S&P 500 boards, driven by bias, hallucination, privacy lapses, and failed implementations. Governance is the answer boards are demanding.

The five core pillars of AI Governance for enterprises

Think of enterprise AI Governance as a building. The five pillars below are the structural columns. Remove any one of them, and the whole thing becomes unstable.

- Enterprise AI policy management defines the rules: what is permitted, what is prohibited, what requires approval, and how exceptions are handled.

- Enterprise AI risk governance translates abstract risk into operational practice: identifying, classifying, and reviewing risks across every AI system in the organization.

- Enterprise AI oversight and accountability defines the organizational structure through which governance is actually exercised. Who is responsible? Who can intervene? How do decisions get escalated?

- AI lifecycle governance and change management ensures governance applies not just at deployment, but across the full AI system lifecycle: design, development, deployment, monitoring, and retirement.

- Monitoring, auditability, and continuous assurance keeps governance alive between formal reviews. This pillar covers real-time performance tracking, model drift detection, and audit trails. It also includes the mechanisms that let governance adapt to incidents and regulatory change.

Enterprise AI policy management: Turning principles into rules

Policies are where governance stops being theoretical. Without clear, enforced policies, even the most sophisticated governance structures produce only paper compliance. Here is what your enterprise AI policy stack needs to cover.

1. Data use, privacy, and intellectual property

AI systems are data-hungry. Your policies need to define three things: what data can be used to train models, what can be entered into AI systems at inference time, and how outputs containing derived insights are handled. You need to explicitly address personal data protection. AI tools used by employees must not transmit personal customer or employee data to third-party model providers in a way that violates the GDPR, the CCPA, or other applicable privacy laws.

And you need to address proprietary data: employees using external AI tools may unknowingly input confidential strategy documents, financial projections, or unreleased product plans into systems whose terms allow training on user inputs. Policy has to draw that line clearly.

2. Model usage policies for internal and customer-facing AI

Not all your AI systems carry the same risk. An AI tool that suggests email subject lines poses fundamentally different risks than one that screens job applicants or decides credit eligibility. Your policy framework needs to distinguish:

- Internal productivity AI (copilots, coding assistants, summarization tools): lower risk, but still requiring data handling guardrails and acceptable use definitions.

- Customer-facing AI (chatbots, recommendation engines): higher risk given external-facing consequences. Policies should address disclosure requirements, escalation paths, and accuracy standards.

- High-stakes decision AI (HR screening, credit scoring, medical triage): highest risk. Policies should mandate human review requirements, bias audit schedules, explainability standards, and documentation obligations.

3. Third-party AI procurement and vendor policy requirements

A substantial share of enterprise AI risk comes not from systems you build, but from systems you buy. Before any third-party AI tool is approved, your governance framework should require evidence of the vendor’s AI Governance practices, contractual clarity on data handling and training data exclusions, access to relevant certifications (e.g., SOC 2, ISO 42001), and incident notification provisions covering AI system failures or data exposures. Vendor AI risk should also be tracked on an ongoing basis, not just at the point of procurement.
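The approval criteria above can be encoded as a simple procurement gate. The sketch below is a minimal illustration only. The `VendorEvidence` fields and the `procurement_gaps` helper are hypothetical names standing in for whatever your procurement questionnaire actually captures. Re-running the same check at renewal, or whenever a vendor changes its terms, is one way to track vendor AI risk on an ongoing basis.

```python
from dataclasses import dataclass, field

# Hypothetical evidence fields; map them to your own vendor questionnaire.
@dataclass
class VendorEvidence:
    name: str
    governance_practices_documented: bool
    excludes_customer_data_from_training: bool
    certifications: set = field(default_factory=set)
    ai_incident_notification_clause: bool = False

# Certifications named in the policy text; extend to match your own requirements.
ACCEPTED_CERTIFICATIONS = {"SOC 2", "ISO 42001"}

def procurement_gaps(v: VendorEvidence) -> list:
    """Return unmet requirements; an empty list means the vendor can move to approval."""
    gaps = []
    if not v.governance_practices_documented:
        gaps.append("no evidence of AI Governance practices")
    if not v.excludes_customer_data_from_training:
        gaps.append("contract lacks training-data exclusion")
    if not (v.certifications & ACCEPTED_CERTIFICATIONS):
        gaps.append("no relevant certification (e.g., SOC 2, ISO 42001)")
    if not v.ai_incident_notification_clause:
        gaps.append("no AI incident notification provision")
    return gaps

vendor = VendorEvidence("ExampleAI", True, True, {"SOC 2"})
print(procurement_gaps(vendor))  # ['no AI incident notification provision']
```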

4. Policy exceptions, approvals, and version control

Policies that have no exception path will be worked around. Build a structured exception process that defines how teams request exceptions, who has authority to approve them, and how exceptions are tracked and periodically reviewed. And treat your AI policies as living documents. They need version control, change logs, effective dates, and a defined review cadence to stay current with regulations, capabilities, and the organization’s risk appetite.
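A lightweight exception register makes that process auditable. The minimal sketch below assumes a few things: each exception is granted against a specific policy version, and it lapses after a fixed window. The 90-day default is an arbitrary illustrative choice. `PolicyException` and `exceptions_needing_review` are hypothetical names, not part of any particular tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

@dataclass
class PolicyException:
    exception_id: str
    policy_id: str
    policy_version: str        # exceptions are tied to the policy version they were granted against
    requested_by: str
    approved_by: Optional[str]
    granted_on: date
    valid_days: int = 90       # assumed review window; set per your own policy

    @property
    def expires_on(self) -> date:
        return self.granted_on + timedelta(days=self.valid_days)

def exceptions_needing_review(register, today, current_versions):
    """Flag exceptions that are unapproved, expired, or granted against an outdated policy version."""
    flagged = []
    for exc in register:
        if exc.approved_by is None:
            flagged.append((exc.exception_id, "pending approval"))
        elif today >= exc.expires_on:
            flagged.append((exc.exception_id, "expired"))
        elif current_versions.get(exc.policy_id) != exc.policy_version:
            flagged.append((exc.exception_id, "policy version changed"))
    return flagged

register = [
    PolicyException("EX-1", "AUP", "v2", "marketing", "governance-lead", date(2025, 1, 10)),
    PolicyException("EX-2", "AUP", "v1", "sales", "governance-lead", date(2025, 3, 1)),
    PolicyException("EX-3", "DATA", "v1", "finance", None, date(2025, 3, 5)),
]
print(exceptions_needing_review(register, date(2025, 4, 15), {"AUP": "v2", "DATA": "v1"}))
# [('EX-1', 'expired'), ('EX-2', 'policy version changed'), ('EX-3', 'pending approval')]
```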

| 💡How does enterprise AI Governance differ from AI Compliance and AI Risk Management? Enterprise AI Governance is not the same as AI compliance, which concerns meeting specific external legal requirements, nor is it the same as AI risk management, which focuses on identifying and mitigating specific threats. AI Governance is the broader operating system that makes both possible. It defines who owns what decisions, how policies are created and enforced, how risks are escalated, and how accountability is maintained across the full AI lifecycle. |

Enterprise AI risk governance: From risk identification to active management

Most enterprises are sitting on AI risk they cannot fully see. Systems are being deployed faster than risk teams can assess them, and the risks they introduce are unlike anything traditional IT frameworks were built to handle. Here is how to govern AI risk in a practical, proportionate, and forward-looking way.

Identify what can break in your AI systems

The risk landscape for enterprise AI falls into five categories, each requiring a different response.

- Your AI can be attacked. Threat actors can corrupt training data, manipulate inputs, or repeatedly probe a model until they can reverse-engineer how it works. Unlike a typical breach, these attacks often go undetected because the system keeps running, just not the way you intended.

- Your AI can leak sensitive information. When employees use external AI tools, they routinely input more than they realize: customer data, financial projections, legal documents. Depending on the vendor’s data handling terms, that information may be retained or used for model training. Privacy violations from AI rarely look like a traditional breach. They happen quietly, at scale, through normal everyday use.

- Your AI can be biased, and that bias has real consequences. AI systems learn from historical data. If that data reflects past discrimination in hiring, lending, or healthcare, the model will reproduce and often amplify it. Several US states now require bias audits before deploying AI in employment or credit decisions, and the cost of skipping that step has gone from theoretical to documented in courtrooms.

- Your AI can be confidently wrong. Generative AI does not flag uncertainty the way a human expert would. It produces polished, well-formatted outputs regardless of accuracy. When AI is drafting contracts, summarizing legal precedent, or supporting clinical decisions, a confident hallucination is a liability, not a minor inconvenience.

- Your AI can quietly stop performing. Model performance degrades over time as real-world conditions shift away from those on which the model was trained. Without active monitoring, no one notices until something goes wrong downstream.
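One common way to catch silent degradation is to compare the distribution of a model input in production with its training-time baseline. A widely used metric for this is the population stability index (PSI). The sketch below is a simplified illustration, not a production monitor. It uses equal-width bins over the combined range, whereas many implementations bin on baseline quantiles instead. The 0.1 and 0.25 thresholds are a common rule of thumb rather than a standard.

```python
import math

def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline (training) sample and a production sample of one feature."""
    lo = min(min(expected), min(actual))
    hi = max(max(expected), max(actual))
    width = (hi - lo) / bins or 1.0  # avoid division by zero if every value is identical

    def proportions(values):
        counts = [0] * bins
        for v in values:
            counts[min(int((v - lo) / width), bins - 1)] += 1
        # Floor empty bins at a tiny share so the log term stays defined.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

def drift_status(psi):
    # Rule-of-thumb thresholds, not a standard; tune them per model and feature.
    if psi > 0.25:
        return "investigate"
    if psi > 0.1:
        return "monitor"
    return "stable"

training = [x / 100 for x in range(100)]          # baseline spread evenly over [0, 0.99]
production = [0.5 + x / 200 for x in range(100)]  # production inputs shifted into the upper half
print(drift_status(population_stability_index(training, training)))    # stable
print(drift_status(population_stability_index(training, production)))  # investigate
```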

Classify your AI systems by risk level

Not every AI system deserves the same level of scrutiny. Governing everything at the same intensity leads to governance paralysis. The goal is proportionate control, and a four-tier classification system modeled on the EU AI Act works well for most enterprises:

- Prohibited: Applications you will not deploy under any circumstances, regardless of business case. Explicit, values-level decisions.

- High-risk: AI that makes or meaningfully influences consequential decisions about people: hiring, credit, healthcare, access to services. Requires human review, bias testing, audit trails, and regular independent assessment.

- Moderate-risk: AI handling meaningful operational tasks without directly affecting individuals in high-stakes ways. Needs clear policies and periodic review, not maximum overhead.

- Low-risk: Internal productivity tools with limited data exposure and no consequential decision-making function. Govern with acceptable use policies and basic data handling rules.
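The four tiers can be turned into a repeatable triage rule. The sketch below is a minimal illustration of that idea. The prohibited-use list and the set of consequential domains are hypothetical placeholders. Your own prohibited list should come from an explicit values-level decision, and borderline cases should still go to human judgment.

```python
from enum import Enum

class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high"
    MODERATE = "moderate"
    LOW = "low"

# Hypothetical placeholders; replace with your organization's own decisions.
PROHIBITED_USES = {"social scoring"}
CONSEQUENTIAL_DOMAINS = {"hiring", "credit", "healthcare", "access to services"}

# Minimum controls per tier, taken from the tier descriptions above.
REQUIRED_CONTROLS = {
    RiskTier.PROHIBITED: ["do not deploy"],
    RiskTier.HIGH: ["human review", "bias testing", "audit trail", "independent assessment"],
    RiskTier.MODERATE: ["clear policy", "periodic review"],
    RiskTier.LOW: ["acceptable use policy", "basic data handling rules"],
}

def classify(use_case, decision_domain, affects_individuals, operational_impact):
    """First-pass tiering; checks run from most to least severe."""
    if use_case in PROHIBITED_USES:
        return RiskTier.PROHIBITED
    if affects_individuals and decision_domain in CONSEQUENTIAL_DOMAINS:
        return RiskTier.HIGH
    if operational_impact:
        return RiskTier.MODERATE
    return RiskTier.LOW

tier = classify("resume screening", "hiring", affects_individuals=True, operational_impact=True)
print(tier.value, REQUIRED_CONTROLS[tier])
```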

Build your AI inventory first

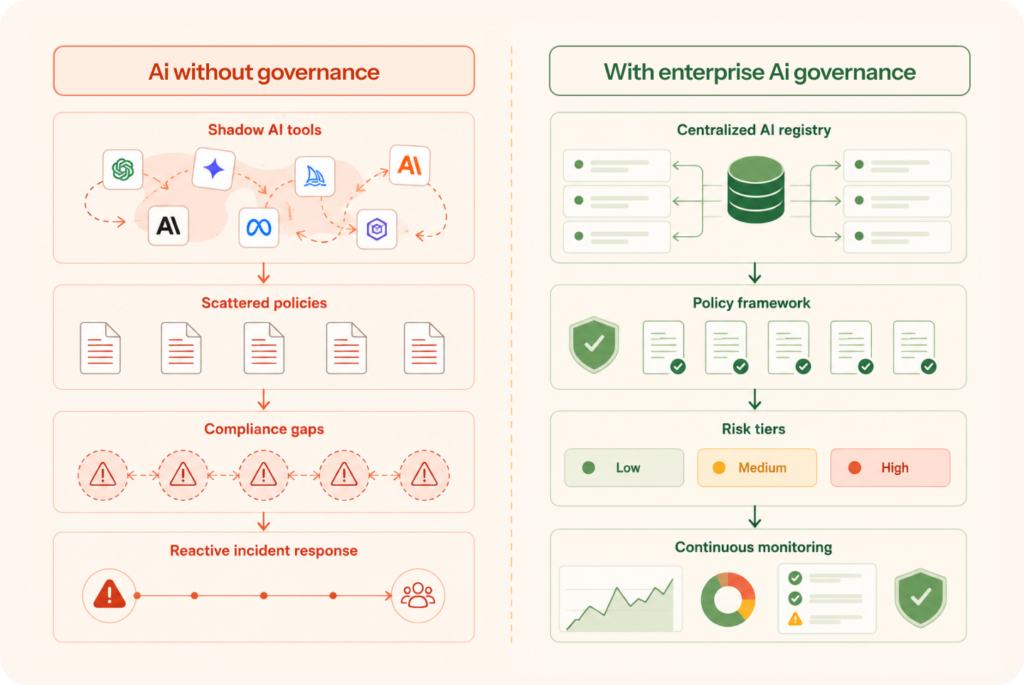

You cannot classify what you do not know you have. An estimated 60% of organizations have employees using AI tools that were never formally approved or tracked. That means a substantial share of your AI risk surface is currently invisible to your governance team.

The AI inventory is a continuously updated catalog of every AI system in use across the organization: who owns it, what data it touches, what decisions it influences, and its risk tier. Everything else in your governance program depends on it. If you can only do one thing to improve your AI risk governance today, build the inventory.
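At its core, an inventory is a structured record per system with a few queries on top. The minimal in-memory sketch below captures the fields named above: owner, data touched, decisions influenced, and risk tier. It also adds a check for systems observed in use but missing from the catalog, which is how shadow AI tends to surface. The class and method names are hypothetical, and a real registry would persist records and feed them from automated discovery.

```python
from dataclasses import dataclass, field

@dataclass
class AISystemRecord:
    system_id: str
    name: str
    owner: str                  # accountable Model Owner (team or individual)
    source: str                 # e.g. "first-party", "vendor SaaS", "informal use"
    data_categories: set = field(default_factory=set)
    decisions_influenced: list = field(default_factory=list)
    risk_tier: str = "unclassified"

class AIInventory:
    """In-memory catalog keyed by system_id; a real registry would persist and sync this."""

    def __init__(self):
        self._records = {}

    def register(self, record):
        # Re-registering the same system_id overwrites it, so updates replace stale entries.
        self._records[record.system_id] = record

    def unclassified(self):
        return sorted(sid for sid, r in self._records.items() if r.risk_tier == "unclassified")

    def touching(self, data_category):
        return sorted(sid for sid, r in self._records.items() if data_category in r.data_categories)

    def unregistered(self, observed_ids):
        # Systems seen in use but missing from the catalog: likely shadow AI.
        return sorted(set(observed_ids) - set(self._records))

inv = AIInventory()
inv.register(AISystemRecord("ai-001", "Support chatbot", "cx-team", "vendor SaaS",
                            {"customer PII"}, ["refund eligibility"], "high"))
inv.register(AISystemRecord("ai-002", "Code assistant", "eng-platform", "vendor SaaS", {"source code"}))
print(inv.unclassified())                      # ['ai-002']
print(inv.touching("customer PII"))            # ['ai-001']
print(inv.unregistered({"ai-001", "ai-007"}))  # ['ai-007']
```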

Keep risk assessments current, not just compliant

Risk classification is not a one-time exercise. A risk tier that was accurate at deployment may be wrong six months later. A sensible review cadence: comprehensive annual reviews for all systems, triggered reviews when a system is significantly updated or when an incident occurs, and continuous automated monitoring running in the background to catch model drift and policy deviations between formal reviews.
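The cadence above reduces to a small decision rule: event-driven triggers take priority, and the annual review acts as the backstop. The sketch below is illustrative only. `review_due` is a hypothetical helper. Treating a monitoring alert as a review trigger is an assumption layered on the cadence described above, not a requirement stated in it.

```python
from datetime import date, timedelta

ANNUAL_REVIEW = timedelta(days=365)

def review_due(last_review, today, *, significant_update=False, incident=False, drift_alert=False):
    """Return (due, reason); triggered reviews take precedence over the annual schedule."""
    if incident:
        return True, "triggered: incident"
    if significant_update:
        return True, "triggered: significant update"
    if drift_alert:
        # Assumption: an alert from continuous monitoring also opens a formal review.
        return True, "triggered: monitoring alert"
    if today - last_review >= ANNUAL_REVIEW:
        return True, "scheduled: annual review"
    return False, "not due"

print(review_due(date(2025, 1, 15), date(2025, 6, 1)))                 # (False, 'not due')
print(review_due(date(2025, 1, 15), date(2025, 6, 1), incident=True))  # (True, 'triggered: incident')
```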

The organizations that govern AI risk well are not the ones with the most sophisticated frameworks on paper. They are the ones who treat risk assessment as a continuous operating discipline rather than a periodic audit exercise.

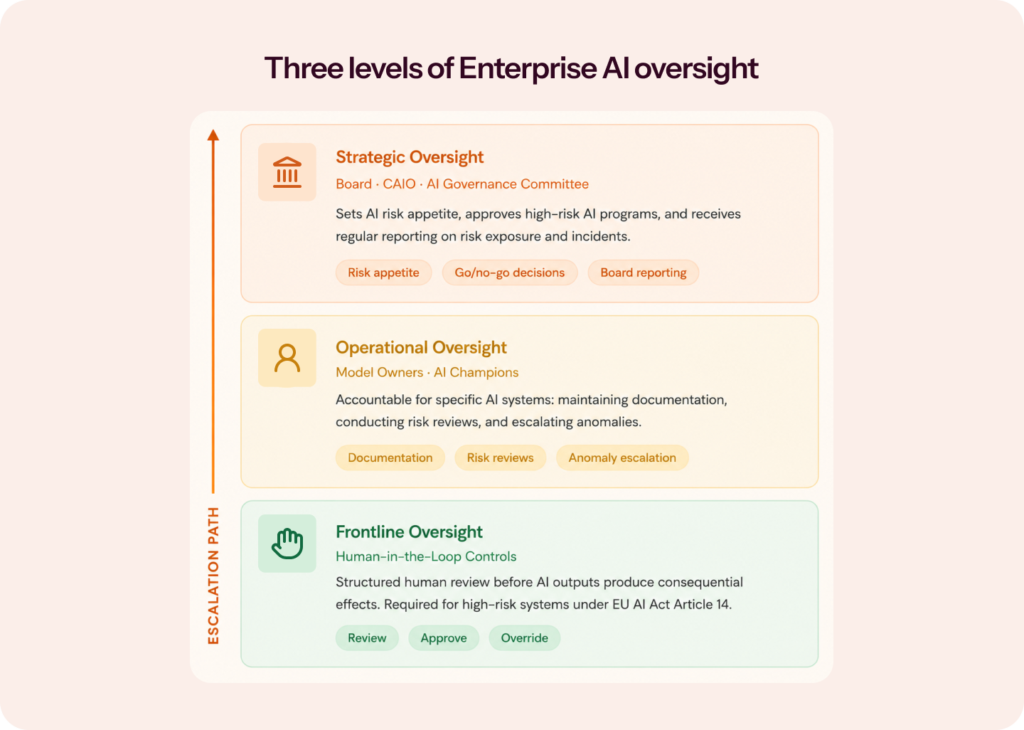

Enterprise AI oversight: Who is watching, and what can they do?

Oversight is the mechanism through which governance gets exercised in practice. It answers a simple but critical question: who is watching the AI, and what authority do they have to intervene?

Effective enterprise AI oversight operates at three levels:

1. Strategic oversight

Strategic oversight sits at the board and C-suite level. The board receives regular reporting on AI risk exposure, regulatory compliance status, and material AI incidents. The AI Governance Committee or Chief AI Officer sets the overall governance direction, approves the AI risk appetite, and makes go/no-go decisions on high-risk AI programs.

As of 2025, roughly 26% of organizations globally have a dedicated Chief AI Officer, up from 11% two years earlier. The committee should be cross-functional: AI Governance requires sustained input from Security, Risk, Legal, Compliance, Technology, and key business units.

2. Operational oversight

This level is exercised by Model Owners: individuals or teams accountable for the performance, compliance status, and ongoing risk management of specific AI systems. Model Owners maintain system documentation, conduct risk reviews, escalate anomalies, and ensure human review requirements are met for their systems.

| 💡Who is a Model Owner? A Model Owner is the individual or team inside your organization who is formally accountable for a specific AI system. They are not necessarily the people who built it. They are the ones responsible for keeping it compliant, monitored, and documented after it goes live. |

3. Frontline oversight

This level operates through human-in-the-loop controls embedded in AI workflows. For high-risk systems, this means structured human review of AI outputs before they produce consequential effects. A human reviewer approves or overrides the AI recommendation before a hiring decision is finalized, a credit application is rejected, or a patient is flagged. Human oversight of high-risk systems is an explicit requirement under EU AI Act Article 14. This "human-in-command" philosophy is increasingly treated as a baseline expectation across governance frameworks.
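In code, a human-in-the-loop control is a gate: for high-risk systems, an AI recommendation cannot become a final decision until a named reviewer approves or overrides it. The sketch below shows that pattern under simple assumptions. `Recommendation`, `Decision`, `finalize`, and `HumanReviewRequired` are hypothetical names. Risk tiers are passed as plain strings. A real workflow would also write each decision to an audit trail.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Recommendation:
    subject_id: str
    action: str          # e.g. "reject" for a credit application
    rationale: str

@dataclass
class Decision:
    subject_id: str
    action: str
    decided_by: str
    overridden: bool

class HumanReviewRequired(Exception):
    """Raised when a high-risk recommendation would take effect without a reviewer."""

def finalize(rec: Recommendation, risk_tier: str,
             reviewer: Optional[str] = None,
             reviewer_action: Optional[str] = None) -> Decision:
    if risk_tier == "high":
        if reviewer is None:
            raise HumanReviewRequired(f"{rec.subject_id}: '{rec.action}' needs human approval")
        final_action = reviewer_action or rec.action  # reviewer approves as-is or overrides
        return Decision(rec.subject_id, final_action, reviewer, final_action != rec.action)
    # Lower-risk tiers may proceed automatically under this sketch's assumptions.
    return Decision(rec.subject_id, rec.action, "system", False)

rec = Recommendation("application-42", "reject", "score below threshold")
decision = finalize(rec, "high", reviewer="credit-analyst-7", reviewer_action="approve")
print(decision.overridden)  # True
```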

The enterprise AI Governance maturity model: Where does your organization stand?

Before you can build a governance program, it helps to know where you are starting from. Most enterprises fall into one of three maturity stages. Use the AI maturity calculator to check how ready your AI Governance program is for the AI systems your organization is adopting.

Stage 1: At Risk

AI systems are being used, but there is no centralized inventory, policies are unclear or unwritten, and oversight is inconsistent. Shadow AI is widespread. Governance happens reactively, usually in response to an incident or an auditor’s question.

Stage 2: Developing

The organization has begun formalizing policies, established some accountability structures (an AI Committee or a designated governance lead), and is building an AI inventory. Risk assessments exist, but may not be consistently applied. Monitoring is partial.

Stage 3: Mature

Governance is embedded in business operations. The AI inventory is comprehensive and continuously updated. Risk classification is applied consistently. Human-in-the-loop controls are operational for high-risk systems. Monitoring is continuous. Governance feeds directly into regulatory reporting and board-level risk discussions.

Most enterprises reading this blog are transitioning from Stage 1 to Stage 2. The goal of this blog is to help you move into Stage 3 faster and more deliberately.

The most important AI Governance frameworks you need to know

You do not have to build your governance framework from scratch. Three global frameworks give you a head start, and understanding them helps you decide which to prioritize.

- EU AI Act: The EU AI Act is the world’s first comprehensive AI regulation with legal force. It establishes a risk-based classification system (prohibited, high-risk, limited-risk, minimal-risk). It imposes specific obligations on organizations that provide or deploy high-risk AI systems, including conformity assessments, technical documentation, human oversight mechanisms, and post-market monitoring. If you place AI systems on the EU market, or use AI whose outputs are used in the EU, the Act applies to you regardless of where you are headquartered.

- NIST AI Risk Management Framework (AI RMF): Developed by the US National Institute of Standards and Technology, the NIST AI RMF is one of the most widely adopted AI Governance frameworks. It is voluntary, flexible, and sector-agnostic. It organizes AI risk management into four functions: Govern, Map, Measure, and Manage. If you are a US-based enterprise or working toward US federal contracts, the NIST AI RMF is your starting point.

- ISO/IEC 42001: Published in December 2023, ISO/IEC 42001 is the first international standard for an AI Management System (AIMS). It is certifiable, meaning you can demonstrate to auditors, regulators, and customers that your AI Governance meets an internationally recognized standard. It covers accountability, data privacy, human oversight, incident response, and AI system lifecycle management. ISO 42001 integrates naturally with ISO 27001, making it an efficient addition if you already have an information security management system in place.

Most enterprises benefit from using all three in combination. NIST AI RMF provides the risk management foundation. ISO 42001 provides a structured, certifiable management system. The EU AI Act provides the legal compliance layer. There is substantial overlap between them, and mapping your controls across all three is far more efficient than treating them separately.
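A control crosswalk is one practical way to do that mapping. Each internal control is written once and tagged with the framework requirements it helps satisfy. The sketch below is illustrative only. The control names are hypothetical. The framework references (NIST AI RMF functions, ISO/IEC 42001 clauses, EU AI Act articles) are example mappings that should be verified against the official texts before you rely on them.

```python
# Illustrative crosswalk: internal control -> framework references it supports.
# Verify every reference against the official framework text before use.
CROSSWALK = {
    "CTRL-01 AI system inventory": {
        "NIST AI RMF": ["Govern", "Map"],
        "ISO/IEC 42001": ["Clause 6.1 (actions to address risks and opportunities)"],
    },
    "CTRL-02 Human review of high-risk outputs": {
        "NIST AI RMF": ["Manage"],
        "EU AI Act": ["Article 14 (human oversight)"],
    },
    "CTRL-03 Per-system risk assessment": {
        "NIST AI RMF": ["Map", "Measure"],
        "ISO/IEC 42001": ["Clause 6.1 (actions to address risks and opportunities)"],
        "EU AI Act": ["Article 9 (risk management system)"],
    },
    "CTRL-04 Automated logging and drift monitoring": {
        "NIST AI RMF": ["Measure", "Manage"],
        "ISO/IEC 42001": ["Clause 9.1 (monitoring, measurement, analysis and evaluation)"],
        "EU AI Act": ["Article 12 (record-keeping)"],
    },
}

def controls_by_framework(crosswalk):
    """Invert the crosswalk so each framework lists the internal controls that serve it."""
    index = {}
    for control, mappings in crosswalk.items():
        for framework, refs in mappings.items():
            if refs:
                index.setdefault(framework, []).append(control)
    return index

for framework, controls in controls_by_framework(CROSSWALK).items():
    print(framework, len(controls))
```

Because each control carries all of its mappings, evidence collected once for that control can be reused across every framework it touches.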

How to build an enterprise AI Governance framework: A step-by-step approach

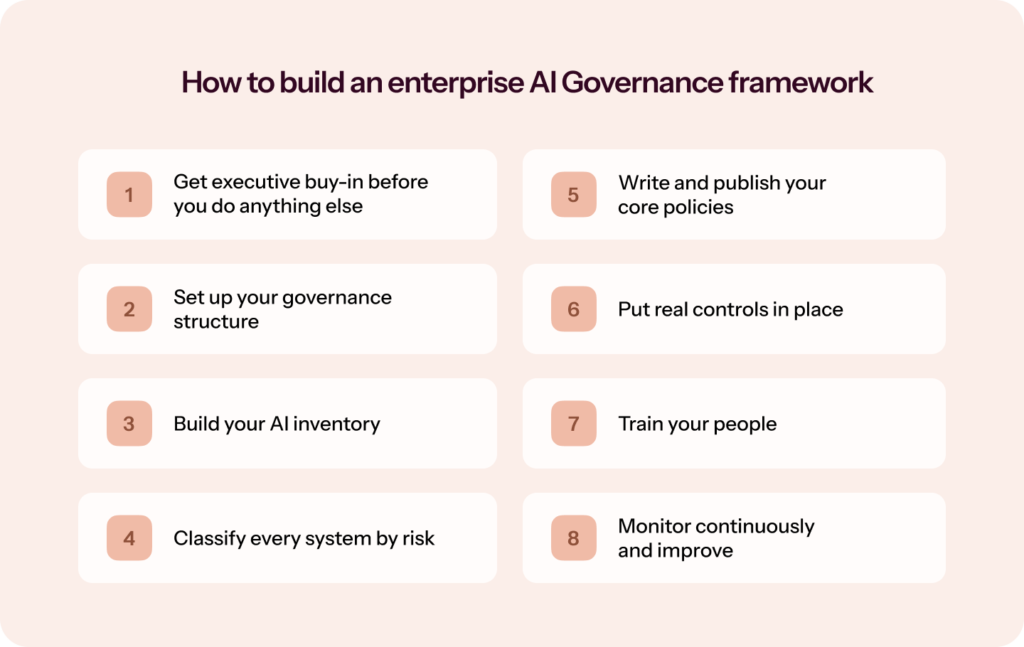

Building AI Governance is not a project you finish. It is a program you run. The eight steps below are designed to get you from wherever you are today to a governance structure that actually holds up at scale.

Step 1: Get executive buy-in before you do anything else.

Governance without authority is just documentation. Before you write a single policy, secure sponsorship from your CEO, CTO, or Chief Risk Officer. Without it, you will not get cross-functional cooperation or the budget to make this a reality.

Step 2: Set up your governance structure.

Form an AI Governance Committee with people from Security, Risk, Legal, Compliance, Technology, and your key business units. Assign a Governance Lead who owns the program day-to-day. Appoint Model Owners for each AI system and AI Champions inside each business unit. Write a one-page governance charter that spells out who has authority over what and how decisions get escalated. Keep it simple enough that people actually read it.

Step 3: Build your AI inventory.

You cannot govern what you cannot see. Audit every AI system across the organization: first-party models, third-party AI-enabled SaaS tools, and anything employees are using informally. For each system, capture who owns it, what data it touches, what decisions it influences, and a rough risk classification. This step takes the most effort. It is also the one that makes everything else possible.

Step 4: Classify every system by risk.

Take your inventory and run each system through your risk classification framework. High-risk systems need immediate governance attention. Lower-risk systems can sit on a standard review cadence. The goal is proportionate effort, not uniform overhead.

Step 5: Write and publish your core policies.

You need five to start: an Acceptable Use Policy, Data Handling Standards, a Third-Party AI Procurement Policy, a Model Documentation Policy, and an AI Incident Response Plan. Get them approved, make them findable, and communicate them clearly. A policy no one knows about is the same as no policy.

Step 6: Put real controls in place.

Policies on paper need to translate into operational reality. That means data handling controls embedded in AI workflows, human review requirements for high-risk systems, access controls on inputs and outputs, bias testing schedules, and monitoring infrastructure for model performance. This is where governance becomes operational.
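A data handling control can sit directly in the path between employees and an external AI service. The sketch below redacts a few sensitive patterns before a prompt leaves the organization and records what was removed. The regexes are deliberately simple and will miss many real-world formats. Production deployments typically rely on a dedicated DLP or PII-detection service. `redact` and `guarded_call` are hypothetical helpers, and `send` stands in for whatever vendor client you actually use.

```python
import re

# Simplified, illustrative patterns; they will not catch every real-world format.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "CARD": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

def redact(prompt):
    """Replace matches with a label and count how many of each were removed."""
    findings = {}
    for label, pattern in PATTERNS.items():
        prompt, n = pattern.subn(f"[{label}]", prompt)
        if n:
            findings[label] = n
    return prompt, findings

def guarded_call(prompt, send, audit_log):
    # Redact first, log what was removed, then forward only the cleaned prompt.
    clean, findings = redact(prompt)
    if findings:
        audit_log.append({"redacted": findings})
    return send(clean)

log = []
print(guarded_call("Customer SSN 123-45-6789", lambda p: p, log))  # Customer SSN [US_SSN]
print(log)  # [{'redacted': {'US_SSN': 1}}]
```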

Step 7: Train your people.

Role-differentiated training matters here. All employees need basic AI awareness and acceptable use. Anyone regularly using AI tools needs deeper training on data handling. Model Owners and AI Champions need the full picture: risk assessment, oversight responsibilities, and how to escalate. Governance fails when people do not understand why the rules exist.

Step 8: Monitor continuously and improve.

Set your cadence: automated monitoring running constantly, quarterly governance check-ins, and annual comprehensive AI risk reviews. Build feedback loops between incident reports, policy updates, and risk classifications. When something breaks, update the framework. Governance that does not adapt to what it learns is just a compliance checkbox.

Common enterprise AI Governance mistakes (And how to avoid them)

Most governance programs fail not from bad intentions but from predictable, avoidable errors.

- Treating governance as a compliance checkbox: Governance built to satisfy an auditor will satisfy the auditor and nothing else. Real governance is operational: it shapes daily decisions, not just annual audit documentation.

- Starting with policies before the AI inventory: You cannot write effective policies for AI systems you do not know you have. The inventory always comes first.

- Ignoring shadow AI: Shadow AI is not malicious. It is employees trying to be productive. Governance that ignores it is governance that covers only a fraction of your actual risk surface.

- Building governance in a single function: AI Governance that lives only in IT or Legal will fail. Legal sees risk. Technology sees architecture. Business units see opportunity. Effective governance requires genuine cross-functional ownership.

- Confusing periodic compliance with continuous assurance: Annual risk assessments and quarterly reviews matter. But AI systems can drift, fail, or be misused between reviews. Continuous monitoring is what catches problems before they become incidents.

- Under-investing in the AI inventory: The inventory is the foundation of every other governance capability. Organizations that treat it as a spreadsheet exercise quickly discover it cannot keep up with AI adoption across the business. Automated discovery and continuous tracking are increasingly necessary.

How Sprinto helps you build enterprise AI Governance at scale

If you have read this far, you understand that enterprise AI Governance is not a single policy document or a one-time audit. It is a continuous operational program. And building it from scratch, while simultaneously managing the AI systems you already have deployed, is genuinely hard.

That is where Sprinto comes in.

Sprinto is the world’s first Autonomous Trust Platform built for compliance, risk, and GRC. And AI Governance is now a core part of what it does.

- Shadow AI detection and live AI registry: Sprinto detects AI tool adoption across your organization, maintains a live registry, classifies risk by data exposure, and maps your entire AI footprint to ISO 42001, NIST AI RMF, and the EU AI Act. You always know what AI is running inside your business, not just what you approved last quarter.

- Continuous risk management: Sprinto AI automatically identifies missing or misaligned evidence, reviews vendor security documentation at scale, and flags any drift between your policies and your actual configuration, before an auditor does.

- Vendor risk management for AI-embedded tools: Your governance perimeter does not stop at your own systems. Sprinto continuously monitors third-party vendors, including SaaS tools with embedded AI, flagging changes in their security posture, data handling practices, and compliance status. If a vendor quietly adds an AI feature that touches your customer data, Sprinto catches it before your risk team does.

- Framework alignment without the manual work: Adding a new compliance framework traditionally takes weeks of mapping. With Sprinto, it takes minutes. When regulations change, Sprinto AI realigns your controls, checks, and policies automatically.

- Always audit-ready: Evidence is collected and validated in the background. Policy changes update controls automatically. Your team stops chasing documentation and starts focusing on governance decisions that actually matter.

Sprinto has helped organizations cut audit costs and effort by up to 50% and reduce compliance time by 60 to 80%.

Ready to see it in action? Book a personalized demo with Sprinto and find out how fast you can get your AI Governance program audit-ready.

Or, if you want to start with the data, download Sprinto’s CISO pulse check report on AI risks to see exactly where enterprises like yours are falling behind on AI Governance, and what the leaders are doing differently.

FAQs

Who owns AI Governance in an enterprise?

AI Governance requires cross-functional ownership. Organizations with a Chief AI Officer typically assign primary accountability there. Others distribute ownership: the CISO or Chief Risk Officer leads operational governance, the AI Governance Committee provides cross-functional oversight, and Model Owners hold accountability for specific AI systems. What matters most is that accountability is clearly defined and documented, not which specific title holds it.

What policies should an enterprise AI Governance framework include?

A foundational enterprise AI policy set should include five documents:
- An Acceptable Use Policy, defining permitted and prohibited AI uses.
- Data Handling Standards, specifying what data can enter AI systems and how outputs must be handled.
- A Third-Party AI Procurement Policy, setting the requirements vendors must meet before AI tools are approved.
- A Model Documentation Policy, covering documentation requirements across the AI lifecycle.
- An AI Incident Response Plan, describing how AI failures are detected, escalated, and remediated.

High-risk use cases require supplementary policies covering human review requirements, bias auditing schedules, and disclosure obligations.

How should enterprises evaluate third-party AI vendors?

Before approving any third-party AI vendor, request evidence of their AI Governance practices, data-handling terms (including explicit exclusions for training data), and relevant certifications such as SOC 2 or ISO 42001. Build audit rights and incident notification obligations into the contract. If a vendor cannot or will not meet those requirements, that is a risk classification decision, not a negotiation. Some vendors simply do not belong in high-risk use cases.

How do you govern shadow AI?

You do not govern it by banning it. You govern it by creating clear guardrails. Start with an approved tool list and explicit rules on what data cannot enter external AI systems (customer PII, financial projections, legal documents, proprietary code). Back these up with mandatory awareness training. The goal is to make the safe path the easy path, not to lock everything down and watch employees find workarounds anyway.

Author

Radhika Sarraf

Radhika Sarraf is a content marketer at Sprinto, where she explores the world of cybersecurity and compliance through storytelling and strategy. With a background in B2B SaaS, she thrives on turning intricate concepts into content that educates, engages, and inspires. When she’s not decoding the nuances of GRC, you’ll likely find her experimenting in the kitchen, planning her next travel adventure, or discovering hidden gems in a new city.