AI adoption across U.S. organizations has moved faster than almost any previous technology shift. What began as experimentation has become operational dependency, often without the guardrails that security and compliance teams expect.

The AI Pulse Check Report, based on responses from 103 CISOs and security leaders, highlights key AI Governance trends and offers a timely snapshot of how organizations are managing this shift.

👉 Download the CISO Pulse Check Report to benchmark your AI risk mitigation maturity, evaluate your AI Governance stack, and start the internal discussions that will define your AI compliance posture in 2026.

Encouragingly, most leaders recognize this gap and are actively investing to close it in 2026.

My findings from the AI Pulse Check Report point to a clear, consistent story: CISOs are highly aware of AI risks today, but many organizations are not yet fully prepared to mitigate them. The primary constraint is not intent or budget; it is the maturity of their AI Governance stack.

In this article, I explain why that gap exists, where it shows up operationally, and how CISOs are responding. Read on!

1. On paper, AI risk maturity is high

The first takeaway from the report is unambiguous: AI risk is firmly on the CISO agenda. Over the past year, AI has evolved from a future concern to a present-day risk surface that touches data security, compliance, engineering, and business operations simultaneously.

This shift is reflected in real-world outcomes. More than 30% of surveyed organizations experienced a major AI-related security incident in the past 12 months. These incidents were not limited to advanced attacks; they frequently stemmed from everyday behavior, such as employees using unapproved AI tools, exposing sensitive data, or misconfiguring AI APIs.

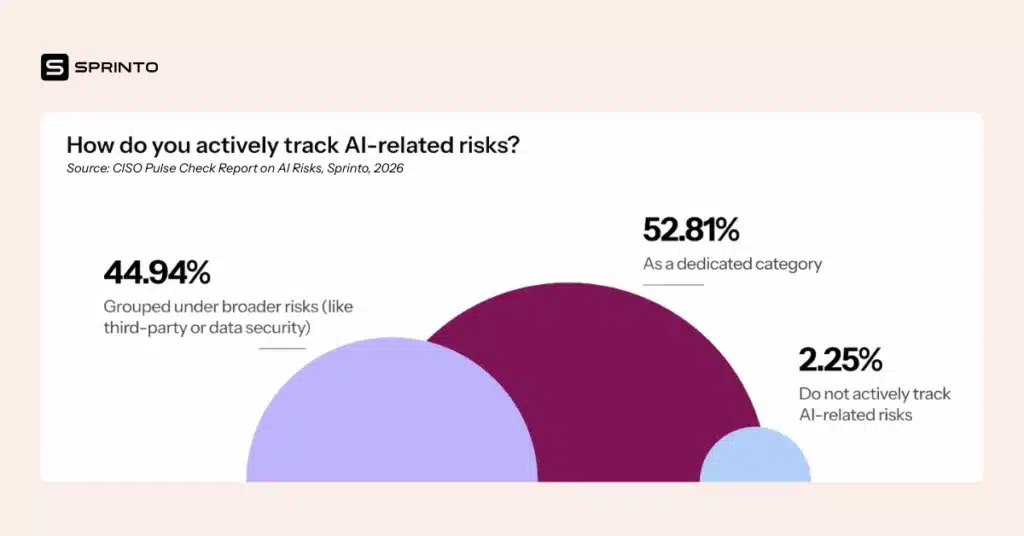

As a result, CISOs are no longer treating AI as an abstract risk. Over half of organizations now track AI-related risks as a dedicated risk category, rather than grouping them under traditional areas like third-party or data security. This marks an important step forward in AI risk maturity: visibility.

However, visibility alone does not equate to control.

2. CISOs are aware of regulations and standards around AI Compliance

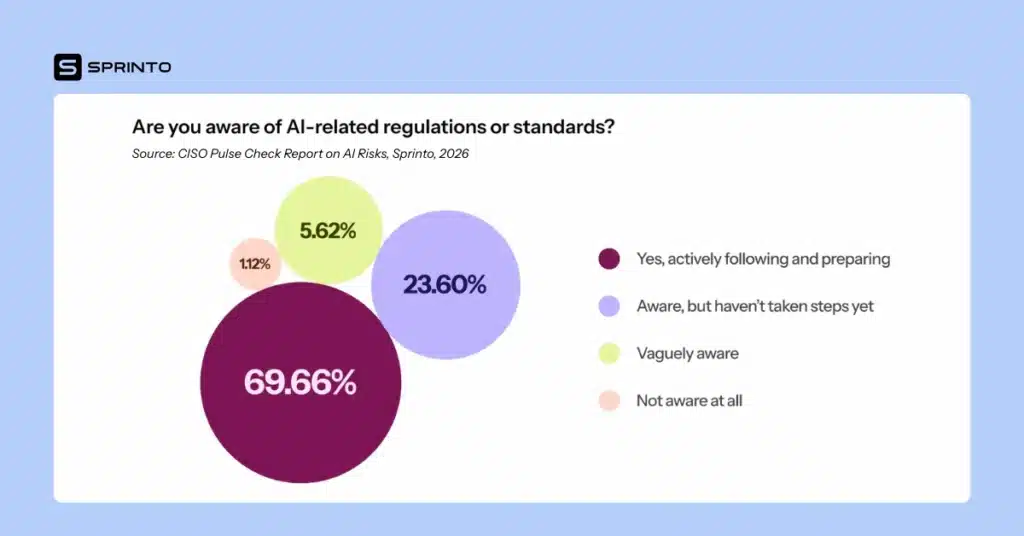

The second AI Governance trend I’ve observed is that regulatory pressure has played a significant role in elevating AI Governance discussions. Nearly 70% of respondents report being aware of AI-related regulations or standards and actively preparing to comply.

This level of awareness is notable. It indicates that CISOs and compliance leaders understand the legal, financial, and reputational risks associated with unmanaged AI usage. AI compliance is no longer viewed as a future obligation but as a near-term expectation.

Yet compliance readiness requires more than awareness. It requires demonstrable controls, traceable decisions, and repeatable evidence. Many organizations are discovering that their existing compliance frameworks, which are designed for static systems and periodic audits, are poorly suited to the dynamic nature of AI.

The result is a growing disconnect: organizations know what regulators expect, but struggle to operationalize those expectations within their current AI Governance stack.

3. A large enforcement gap is undermining AI Governance

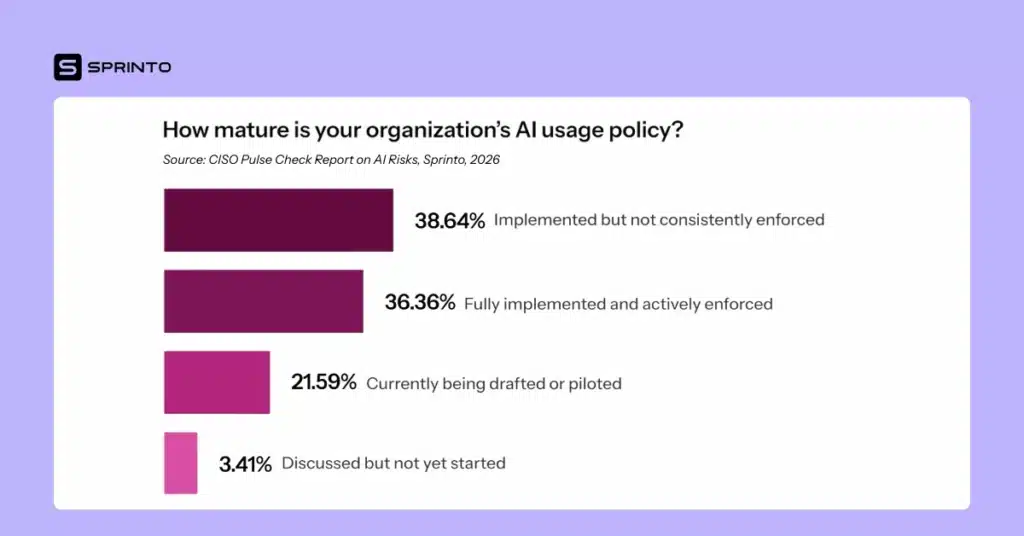

One of the most critical findings in the report relates to policy enforcement. About 40% of organizations have an AI usage policy that is implemented but not consistently enforced.

This gap matters. Inconsistent enforcement creates ambiguity for employees and enables shadow AI usage to flourish. When policies exist without technical or procedural guardrails, employees default to speed and convenience, often at the expense of security and compliance.

From an AI Governance perspective, this represents a maturity plateau. Policies signal intent, but enforcement determines outcomes. Without enforcement, organizations are effectively relying on good intentions in an environment that demands structural controls.

Limited preventive safeguards around data handling compound this issue.

4. Sensitive data exposure is still largely unaddressed

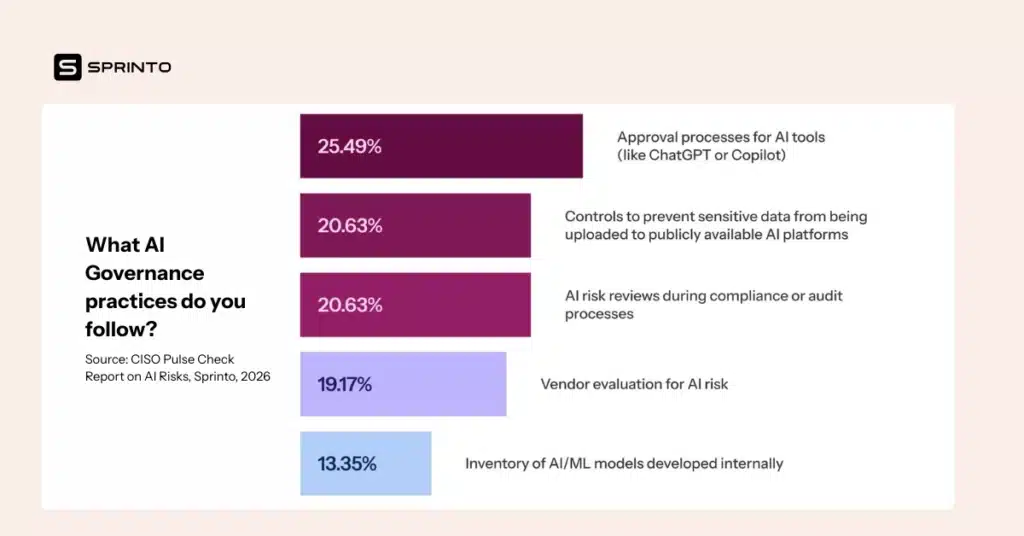

Despite widespread concern about data leakage, only 21% of organizations have controls in place to prevent sensitive information from being uploaded to publicly available AI platforms.

This is one of the most striking and concerning insights from the report. Once sensitive data is shared externally, organizations lose control over how that data is retained, reused, or exposed. From an AI compliance standpoint, this creates immediate regulatory and contractual risk.

The low adoption of preventive controls suggests that many organizations are still relying solely on training and policies to manage this risk. However, AI risk maturity requires moving beyond guidance to enforcement—particularly in high-impact areas like data protection.

5. Manual processes are slowing AI Risk response

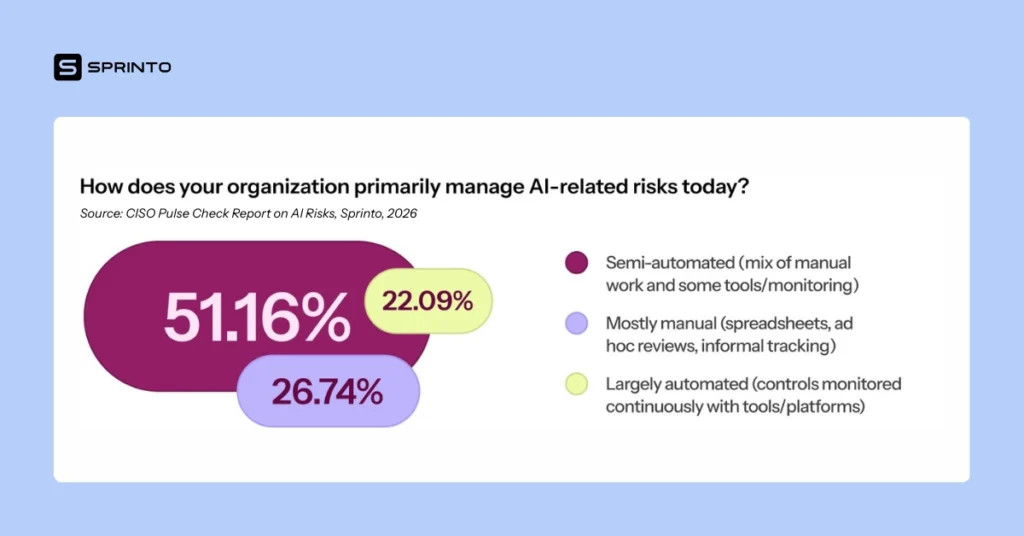

Another AI Governance trend that I’ve observed in U.S. organizations is the reliance on manual governance processes. More than a quarter of organizations manage AI-related risks primarily through manual methods, such as spreadsheets, ad hoc reviews, and informal tracking.

Even among organizations that describe their approach as semi-automated, critical steps like risk assessment, evidence collection, and remediation tracking remain largely manual. This has a direct impact on responsiveness.

Two out of three organizations take longer than a week to implement controls or policy changes after identifying AI-related risks. In a fast-moving AI environment, that delay is significant. New tools, features, and attack vectors emerge faster than manual governance processes can adapt.

This is where the limitations of the current AI Governance stack become most visible.

6. AI Risk feels more complicated than other security domains

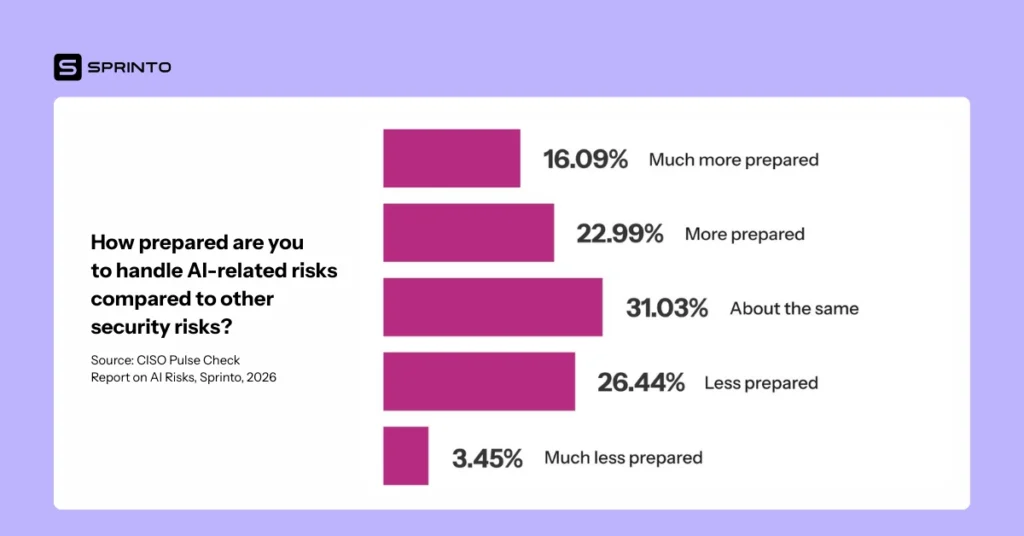

When asked to compare AI risk readiness to other areas of security, nearly 30% of respondents said they feel less prepared to handle AI-related risks.

This lack of confidence is telling. AI spans multiple risk domains, such as data security, third-party risk, software development, and decision governance. Yet most governance stacks remain organized in silos. The absence of a unified system of record for AI Governance makes it difficult to maintain situational awareness.

As a result, CISOs often know risks exist but lack the tooling to assess, mitigate, and consistently evidence those risks.

7. AI Risk maturity remains uneven across U.S. organizations

The report’s AI risk maturity assessment reinforces this picture. Only 25% of organizations rate their AI Governance maturity as advanced, characterized by comprehensive programs, cross-functional ownership, and regular reviews.

Most organizations fall into the “developing” or “early-stage” maturity categories, where policies exist and some controls are in place, but enforcement, automation, and visibility remain limited.

This uneven maturity creates exposure. Organizations in the middle are often the most vulnerable: mature enough to move fast with AI adoption, but not mature enough to govern it at scale.

8. 2026 budgets signal a strategic shift

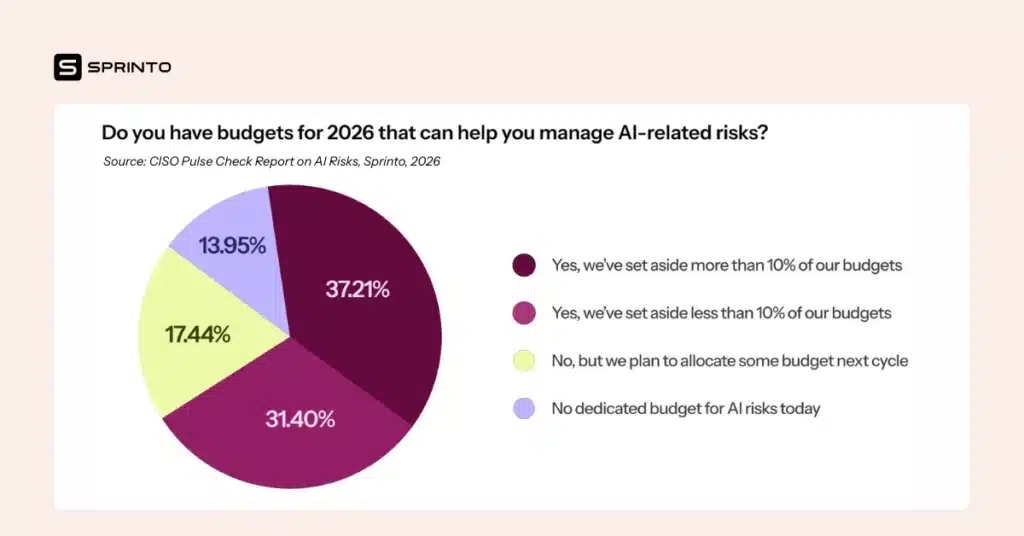

Despite these gaps, the report points to a meaningful turning point. Nearly 70% of organizations have already allocated budget for AI risk mitigation in 2026, with additional organizations planning to do so in the next cycle.

This investment focus reflects a growing realization among CISOs: AI risk cannot be managed with incremental tweaks to legacy governance models. It requires structural change.

CISOs are prioritizing technical controls, recurring AI risk assessments, employee training, and automation. This is a signal that organizations are preparing to evolve their AI Governance stack rather than patch it.

9. Building an AI Governance Stack is the golden opportunity for CISOs

Across all sections of the report, one conclusion stands out: the primary barrier to effective AI Governance is the governance stack itself.

Disparate tools, fragmented workflows, and manual evidence collection slow response times and increase audit fatigue. Without a centralized system of record, teams duplicate work, lose institutional knowledge, and struggle to consistently demonstrate AI compliance.

Conversely, organizations that modernize their AI Governance stack by integrating risk, controls, evidence, and accountability are positioned to move from reactive management to proactive governance.

Conclusion: It’s time to move from awareness to readiness in AI Governance

The most notable AI Governance trend is that U.S. CISOs are no longer catching up to AI risk awareness. They are now confronting the harder challenge of execution.

AI Governance maturity today is limited not by understanding but by infrastructure. Many organizations know what needs to be done, but lack the systems to do it efficiently. Recognizing this, CISOs are investing in 2026 to strengthen their AI Governance stack, automate compliance, and reduce reliance on manual processes.

The organizations that succeed will be those whose AI governance keeps pace with, and ideally stays ahead of, their AI adoption.

Want a deeper look at the current state of AI risk management and the latest AI Governance trends? Download the CISO Pulse Check Report on AI Risks.

Author

Pulkit Jain

Pulkit drives growth through content at Sprinto. His work has been featured in top publications such as Forbes, The Wall Street Journal, World Economic Forum, e27, and more. As an M-shaped B2B marketer, he brings a passion for customer-centricity, an affinity for data, and a love for technology, movies, comics, and gaming.