Ever since AI became embedded in many platforms, GRC and business functions have defaulted to a simple solution: automate more.

In GRC, this has meant: If evidence collection is slow, automate it. If audits are painful, automate them. If controls are hard to track, automate that too. The underlying belief is that if you remove enough manual effort, GRC will become seamless.

But despite this surge in automation, most GRC teams aren’t actually moving faster in the ways that matter. The work hasn’t disappeared. It’s shifted. You automated drift alerts, but now you’re dealing with alert fatigue. You automated questionnaire responses, but you still need to verify that they are complete and accurate. You automated evidence collection, but you still need to validate whether what’s collected is even meaningful. Teams are still chasing context, second-guessing outputs, and spending significant time interpreting signals. That’s because the core problem in GRC was never a lack of automation. It’s a lack of autonomy.

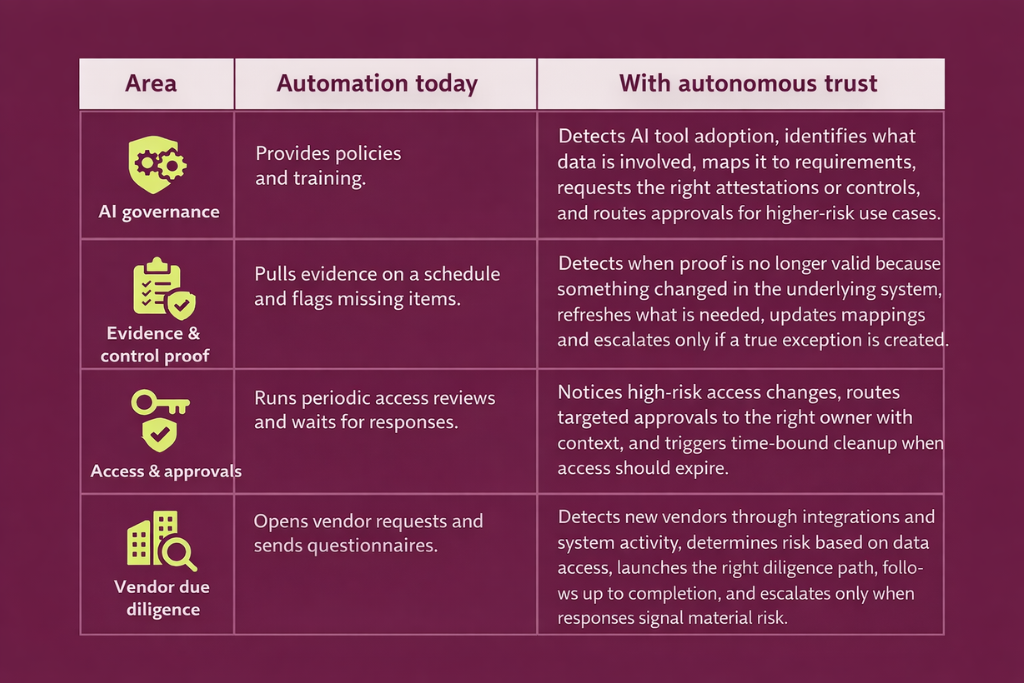

Automation and autonomy are often used interchangeably, but they address distinct problems. Automation solves for execution. It’s about carrying out predefined tasks, such as collecting evidence, triggering alerts, and updating dashboards. It works well when the rules are clear and the outcomes are predictable. Autonomy, on the other hand, is about making decisions within context. It involves interpreting signals, weighing trade-offs, and determining what actually matters in a given situation.

| Automation solves for execution. Autonomy, on the other hand, is about making contextual decisions. |

Why automation falls short vis-à-vis GRC needs

- Automation generates signals. It doesn’t create clarity

A control failing isn’t just a failed check; it’s a signal that needs interpretation. It could be a one-off issue or a sign of a deeper, systemic gap. It might meaningfully increase risk, or be acceptable within the current context. It could require immediate escalation or just careful monitoring over time.

Automation can flag the failure. It can surface the signal instantly and consistently. But the harder part—understanding what that signal actually means for your risk posture—still falls on the team. And that interpretation depends on nuance, business context, and evolving risk appetite. A good example of this is the promise of “continuous monitoring,” where automation can ensure that checks run continuously and that anomalies are detected in real time. But without the ability to interpret those anomalies in context, teams are left dealing with a constant stream of alerts, many of which may not require action. Over time, this leads to alert fatigue and, ironically, slower response to real risks.

- Static systems can’t keep up with dynamic risk

Many of the signals discussed above appear only after the underlying change has already occurred: after access was granted, a tool was adopted, or data started flowing. Automation doesn’t catch drift in real time and, in some cases, lacks the context to catch it at all.

The deeper issue is that automation operates on static logic, while GRC operates in a dynamic environment. Automation depends on predefined rules (if X happens, trigger Y), but those rules assume you already know what to look for. Business processes change, systems evolve, and risk landscapes shift. A control that was relevant six months ago may no longer be critical today. A deviation that looks minor in isolation might signal a larger issue when viewed in context.

Static, rule-based automation struggles to keep up with this fluidity, especially when change happens in small, everyday decisions across teams rather than in clearly defined events. It is unable to understand context. Some now-important changes may not trigger alerts at all because they were never part of the original predefined check.
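To make that blind spot concrete, here is a minimal Python sketch of a static "if X happens, trigger Y" engine. The event names, the `RULES` table, and the `evaluate` function are all hypothetical, made up for illustration rather than taken from any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    kind: str    # hypothetical event type, e.g. "mfa_disabled"
    detail: str


# Predefined "if X happens, trigger Y" rules (illustrative names only).
RULES = {
    "admin_role_granted": "Alert: review privileged access",
    "mfa_disabled": "Alert: MFA control failed",
}


def evaluate(events):
    """Return (alerts, unmatched) for a batch of events."""
    alerts, unmatched = [], []
    for event in events:
        action = RULES.get(event.kind)
        if action is None:
            # No rule was ever written for this kind of change,
            # so a static engine raises nothing for it.
            unmatched.append(event)
        else:
            alerts.append(f"{action} ({event.detail})")
    return alerts, unmatched


events = [
    Event("mfa_disabled", "user=jdoe"),
    Event("ai_tool_connected", "new assistant given access to the CRM"),
]
alerts, unmatched = evaluate(events)
print(alerts)
print([e.kind for e in unmatched])
```

In this sketch the MFA change raises an alert, while the newly connected AI tool passes through silently because nobody anticipated it when the rules were written.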

- Automation can’t tell if you’re moving in the wrong direction

Automation executes what it’s told. It can run checks, collect evidence, and flag deviations—but it has no sense of whether those activities are aligned with what actually matters.

If your understanding of risk is unclear, automation will amplify that ambiguity. If your priorities are misaligned, automation will help you move faster in the wrong direction. A control might be passing consistently, but no longer be relevant. A deviation might be ignored because it doesn’t trip a predefined rule, even though it signals something deeper.

This is why more automation doesn’t necessarily lead to better outcomes. In many cases, it simply scales existing inefficiencies. In that sense, automation doesn’t just fall short. It can create a false sense of safety, where controls appear to pass while the underlying system itself has already drifted. For example, your access control mechanisms pass control testing because all listed users have been approved. But this approach overlooks access granted outside the system or inherited through a new integration that never showed up for review.
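The access control example can be sketched in a few lines of Python. Everything here is assumed for illustration: the user names, the `crm-sync-integration` account, and the idea that a second source of "effective access" is available to compare against.

```python
# Users listed in the access management system, mapped to approval status.
listed_users = {"alice": True, "bob": True}

# Accounts that can actually reach the system, taken from a hypothetical
# second source such as the application's own grant list.
effective_access = {"alice", "bob", "crm-sync-integration"}


def control_test_passes(listed):
    """The predefined check: every listed user has an approval."""
    return all(listed.values())


def unreviewed_access(listed, effective):
    """Accounts with real access that never appeared for review."""
    return sorted(effective - set(listed))


print(control_test_passes(listed_users))
print(unreviewed_access(listed_users, effective_access))
```

The control test passes, yet the comparison surfaces an integration account that was never part of the review. The check is correct; it is simply asking a narrower question than the one that matters.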

- Automation doesn’t eliminate the scramble for proof

Automation collects evidence continuously. But when someone asks you to prove what happened over a specific period, that evidence rarely tells a complete story on its own.

You still end up stitching together fragments across systems: pulling logs, verifying timestamps, cross-checking approvals, and manually reconstructing the sequence of events. While automation captures activity, it doesn’t ensure that the evidence is contextualized with how the business actually evolved.

This is why stress shows up in moments that matter: a customer asking for answers by Friday, an auditor requesting evidence for the last 90 days, or a regulator questioning how a specific risk was managed. The system has data, but not clarity, which brings us back to the interpretation gap described earlier.
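As a rough illustration of that stitching work, the Python sketch below merges records from two hypothetical systems into one timeline for a 90-day window. The record layout, the sample entries, and the `timeline` helper are assumptions made for this example.

```python
from datetime import datetime, timedelta

# Fragments from two hypothetical systems: (timestamp, source, note).
access_log = [
    (datetime(2023, 12, 1, 10, 0), "access", "carol granted read on billing"),
    (datetime(2024, 3, 4, 9, 30), "access", "bob granted admin on billing"),
]
approvals = [
    (datetime(2024, 3, 5, 14, 0), "approval", "bob admin request approved"),
]


def timeline(sources, start, end):
    """Merge records from all sources that fall inside [start, end]."""
    merged = [rec for source in sources for rec in source
              if start <= rec[0] <= end]
    return sorted(merged, key=lambda rec: rec[0])


end = datetime(2024, 4, 1)
start = end - timedelta(days=90)
events = timeline([access_log, approvals], start, end)
for ts, source, note in events:
    print(ts.isoformat(), source, note)
```

Even in this toy case, the merged timeline shows access granted a day before its approval. The data was all there, but someone still has to decide whether that ordering is a problem.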

How autonomy bridges the gap between automation and GRC needs

What GRC actually needs is not just the ability to execute tasks automatically, but the ability to make informed decisions with minimal, only-where-necessary human intervention.

This is where autonomy (an autonomous trust system in the context of GRC) emerges as a clear solution. An autonomous trust system would benefit GRC teams in five ways, which also work as stages:

1) An autonomous trust system understands what you are accountable for

Before anything can run on autopilot, the system needs clarity on the obligations you are supposed to meet. Autonomous trust starts by pulling together all your obligations in one place, including frameworks, customer requirements, contracts, internal policies, and new AI-related standards. This becomes the reference point for what “good” looks like, so the system can keep you aligned as requirements change.

2) An autonomous trust system notices change early

It continuously spots the everyday changes that quietly break compliance: new tools being adopted, new vendors appearing, access changes, policy edits, AI apps spreading across teams, and new requirements landing from customers or regulators.

3) An autonomous trust system interprets what the change affects

It translates those changes into impact. It figures out which controls, policies, commitments, vendors, or AI uses are affected, and what proof now needs to be refreshed, rechecked, or updated.

4) An autonomous trust system is capable of deciding the next best step

It does not just create more alerts. It determines what should happen next. It can tell the difference between something harmless, something that needs fresh evidence, and something that requires a real human decision.

5) An autonomous trust system takes action through agents, with guardrails

This is where autonomy becomes real. Agents move work forward end-to-end. They request what is missing, trigger the right workflows, draft updates, follow up with the right owners, and keep things moving until the gap is closed. Routine steps happen automatically when it is safe. When judgment is needed, the decision goes to the right person with clear context, and everything is recorded so you can always explain what changed and why.
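Stages 3 to 5 can be sketched as a small decision loop in Python. This is only a simplified illustration: the `Change` fields, the decision rules in `decide`, and the `audit_log` structure are hypothetical, and a real system would weigh far more context.

```python
from dataclasses import dataclass
from enum import Enum


class NextStep(Enum):
    NO_ACTION = "no action"
    REFRESH_EVIDENCE = "refresh evidence"
    HUMAN_DECISION = "human decision"


@dataclass(frozen=True)
class Change:
    description: str
    affected_controls: tuple      # output of the "interpret" stage
    touches_sensitive_data: bool


def decide(change):
    """Pick the next step for a change (illustrative rules only)."""
    if not change.affected_controls:
        return NextStep.NO_ACTION
    if change.touches_sensitive_data:
        # Guardrail: judgment calls are routed to a person.
        return NextStep.HUMAN_DECISION
    return NextStep.REFRESH_EVIDENCE


audit_log = []


def act(change):
    """Decide, then record what changed and which step was chosen."""
    step = decide(change)
    audit_log.append((change.description, step.value))
    return step


changes = [
    Change("README typo fixed", (), False),
    Change("Password policy edited", ("access-policy",), False),
    Change("New AI app connected to customer data", ("vendor-review",), True),
]
steps = [act(c) for c in changes]
for entry in audit_log:
    print(entry)
```

The point of the sketch is the shape, not the rules: routine changes are handled automatically, the risky one goes to a human, and every outcome is written to the log so it can be explained later.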

Meanwhile, human judgment, while crucial when called upon, is reserved for high-stakes decisions. This shifts human effort away from repetitive interpretation and toward strategic oversight. The goal is to augment the human team’s ability to make better decisions faster.

The table below illustrates the shift:

The bottom line: Autonomy drives more efficiency with less busywork

Looking at GRC through the lens of autonomy changes how you think about your GRC stack. Instead of asking how to automate every task, you start asking where decision-making can be improved or accelerated. You focus less on increasing the volume of checks and more on improving the quality of insights. You design systems that reduce cognitive load, not just operational load.

The teams that get this right gain clearer visibility into risk, faster drift response times, and greater confidence in their compliance posture. They spend less time validating outputs and more time acting on meaningful signals.

Is automation dead? Not at all. Automation still plays a critical enabling role. It handles the heavy lifting of data collection, standardization, and execution. But it is only one layer of the solution. It falls short without an autonomy layer.

The future of GRC isn’t about doing more automatically. It’s about making better, faster decisions with greater context.

Author

Raynah

Raynah is a content strategist at Sprinto, where she crafts stories that simplify compliance for modern businesses. Over the past two years, she’s worked across formats and functions to make security and compliance feel a little less complicated and a little more business-aligned.